This report from The Brookings Institution’s Artificial Intelligence and Emerging Technology (AIET) Initiative is part of “AI and Bias,” a series that explores ways to mitigate possible biases and create a pathway toward greater fairness in AI and emerging technologies.

Introduction

When it comes to gender stereotypes in occupational roles, artificial intelligence (AI) has the potential to either mitigate historical bias or heighten it. In the case of the Word2vec model, AI appears to do both.

Word2vec is a publicly available algorithmic model built on millions of words scraped from online Google News articles, which computer scientists commonly use to analyze word associations. In 2016, Microsoft and Boston University researchers revealed that the model picked up gender stereotypes existing in online news sources—and furthermore, that these biased word associations were overwhelmingly job related. Upon discovering this problem, the researchers neutralized the biased word correlations in their specific algorithm, writing that “in a small way debiased word embeddings can hopefully contribute to reducing gender bias in society.”
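The "neutralizing" step can be pictured with a toy projection. The sketch below is a simplified illustration and not the researchers' implementation: the three-dimensional vectors are invented for the example (real Word2vec embeddings have hundreds of dimensions), and the helper names `dot`, `unit`, and `neutralize` are hypothetical. It removes from an occupation word the component that lies along a "gender direction," defined here as the difference between two gendered words.

```python
import math

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def unit(u):
    """Scale a vector to length 1."""
    length = math.sqrt(dot(u, u))
    if length == 0:
        raise ValueError("cannot normalize a zero vector")
    return [a / length for a in u]

def neutralize(word_vec, direction):
    """Subtract the part of word_vec that points along direction."""
    d = unit(direction)
    along = dot(word_vec, d)
    return [w - along * x for w, x in zip(word_vec, d)]

# Invented toy embeddings (assumption: not taken from any real model).
he = [1.0, 0.0, 0.2]
she = [-1.0, 0.0, 0.2]
engineer = [0.4, 0.9, 0.1]

# The gender direction is the difference between the two gendered words.
gender_direction = [a - b for a, b in zip(he, she)]
debiased = neutralize(engineer, gender_direction)

# Before: "engineer" leans toward "he". After: no lean along the direction.
print(dot(engineer, unit(gender_direction)))
print(dot(debiased, unit(gender_direction)))
```

In this toy setup, the occupation word keeps its other components and loses only its lean toward one gendered word. Whether such a correction carries over to downstream uses of an embedding is a separate question that the sketch does not address.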

Their study draws attention to a broader issue with artificial intelligence: Because algorithms often emulate the training datasets that they are built upon, biased input datasets could generate flawed outputs. Because many contemporary employers utilize predictive algorithms to scan resumes, direct targeted advertising, or even conduct face- or voice-recognition-based interviews, it is crucial to consider whether popular hiring tools might be susceptible to the same cultural biases that the researchers discovered in Word2vec.

In this paper, I discuss how hiring is a multi-layered and opaque process and how it will become more difficult to assess employer intent as recruitment processes move online. Because intent is a critical aspect of employment discrimination law, I ultimately suggest four ways to include it in the discussion surrounding algorithmic bias.

Developing an initial understanding of the problem

Should bias exist in currently operating recruitment software, anti-discrimination statutes would ideally offer job seekers a mechanism by which to pursue remedies. In practice, however, AI presents unique challenges in interpretation under current equal employment laws, such as the Civil Rights Act of 1964, the Age Discrimination in Employment Act of 1967, the Americans with Disabilities Act of 1990, and the Genetic Information Nondiscrimination Act of 2008.

For instance, in order to pursue a gender discrimination claim under Title VII of the Civil Rights Act, potential plaintiffs would need to demonstrate that a private-sector employer had either purposefully intended to discriminate, or engaged in actions—including unintentional actions—that had a disproportionate impact on a particular gender group. These two mechanisms are termed “disparate treatment or intentional discrimination” and “disparate impact,” respectively.

In two separate briefs in Brookings’s AI and Bias series, Manish Raghavan and Solon Barocas, and separately Mark MacCarthy, address how algorithms can result in systematic “disparate impact” on protected classes, as well as the technical and legal issues involved. This policy brief considers the related analysis of “intentional discrimination” and offers a framework, in the context of AI and hiring, to contemplate the role and responsibilities of employers in administering fair hiring processes.

Stages of hiring

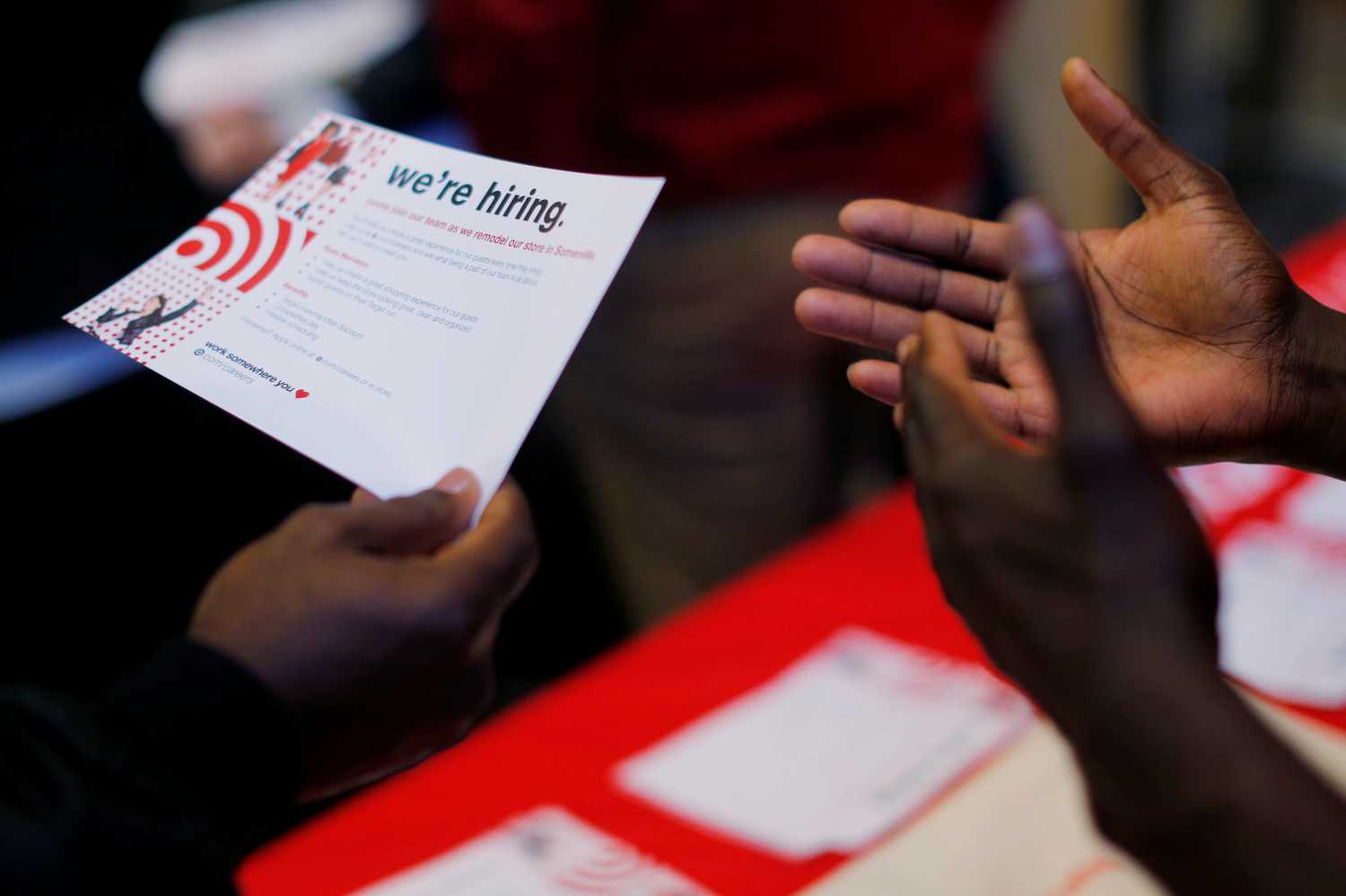

In any analysis of employer intentions, it is relevant to ask why employers might turn to automated recruiting systems in the first place. For one thing, companies have seen high recruitment volume in recent years (due to low retention, frequent external promotion, and ease of electronic application) and computer filtering is often a measure of practicality. Additionally, employers might utilize computer-assisted hiring to reduce human biases, especially since multiple academic studies have suggested some degree of human bias in manual hiring with respect to gender, race, or even seemingly random factors like birth month.

The contemporary hiring process typically consists of multiple rounds of screening, many of which are automatable. Job seekers often start by identifying job vacancies through the internet, including through search engines and targeted advertisements. In this initial stage, online advertising platforms can collect data on users’ search histories, usage patterns, and demographics, and utilize predictive analytics to infer individuals whom companies might want to recruit.

Assuming a candidate identifies a prospective job opening and submits a resume, not all completed applications might be looked at by a human hiring manager. Depending on business needs and recruiting volume, employers may utilize predictive resume review software, such as Mya, to automatically scan resumes for certain keywords and rank applicants based on their predicted suitability for the position.
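To make the idea of keyword-based ranking concrete, here is a deliberately naive sketch. It is not how Mya or any other named product works; the function names `keyword_score` and `rank_resumes`, the keyword list, and the sample resumes are all invented for illustration. It counts how many target keywords appear in each resume and sorts applicants by that count.

```python
import re

def keyword_score(resume_text, keywords):
    """Count how many of the target keywords appear in the resume."""
    tokens = set(re.findall(r"[a-z0-9+#]+", resume_text.lower()))
    return sum(1 for keyword in keywords if keyword.lower() in tokens)

def rank_resumes(resumes, keywords):
    """Return applicant names ordered from highest to lowest score."""
    return sorted(
        resumes,
        key=lambda name: keyword_score(resumes[name], keywords),
        reverse=True,
    )

# Hypothetical applicants and keywords.
resumes = {
    "applicant_a": "Python and SQL developer with five years of experience",
    "applicant_b": "Retail manager with strong customer service record",
    "applicant_c": "Self-taught Python hobbyist",
}
keywords = ["python", "sql"]

ranking = rank_resumes(resumes, keywords)
print(ranking)
```

Even this simple ranker shows why the choice of keywords matters: an applicant who describes equivalent experience in different words scores zero, and whoever picks the keyword list shapes the outcome.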

The potential for automation continues into the next stage of hiring. After a candidate’s resume is selected for further review, the candidate can undergo multiple rounds of interviewing and screening before selection, a process that no longer necessarily requires human intervention. Companies can use third-party interview analysis software, like HireVue and Predictim, to automatically score interviewees’ facial expressions, choice of vocabulary, and tone. Furthermore, companies can use algorithmic assessments, such as Pymetrics, to predict a candidate’s job performance before they even step into the office.

It is not uncommon for both employers and applicants to be unfamiliar with how these algorithms—and especially unsupervised learning models—work. Although some large software companies, including Google and Amazon, develop in-house algorithms, the majority of employers are more likely to rely on third-party algorithmic hiring tools. Third-party algorithms are almost universally opaque due to factors like proprietary software, patented technologies, and/or general complexity. Non-developers can be unaware of which specific factors motivate algorithmic rankings, as well as what training data and de-biasing techniques are utilized. Additionally, non-developers (in this case, most hiring managers) often lack the ability to modify the third-party algorithms that they use. Because of this possible disconnect, employer intent is a relevant consideration when judging potential discrimination in algorithmic software.

Evidentiary frameworks for discriminatory intent

Traditionally, federal agencies have identified three types of evidence to detect discriminatory intent: direct, indirect, and statistical. Plaintiffs can use a single category or a combination of the three to allege intentional discrimination—which, in the context of algorithms, would imply that the employer had some agency in any biased hiring outcomes affiliated with an algorithm’s design and execution.

- Direct evidence: If an employer explicitly limits employment preferences against a protected class, this action could constitute direct evidence of purposeful discrimination. In general, courts have yet to set legal precedent in the context of AI, although a recent lawsuit illustrates one party’s argument for what might be considered direct evidence of algorithmic bias. In 2018, Facebook faced a lawsuit that alleged that the social media platform’s practice of allowing job advertisers to consciously target online users by gender, race, and zip code constituted evidence of intentional discrimination—however, the parties reached a settlement before a court could issue a ruling on this argument.

- Indirect evidence: Plaintiffs can also use indirect evidence, otherwise known as circumstantial evidence, to support claims of discriminatory intent. Indirect evidence could suggest or imply an alleged offense; as such, “suspicious timing, inappropriate remarks, and comparative evidence” of inequitable treatment might qualify. For instance, an improper remark by a human hiring manager could be construed as indirect evidence of discriminatory intent, even if the employer uses automated software to rank candidates.

- Statistical evidence: To supplement direct and indirect evidence, plaintiffs may demonstrate an employer’s historical hiring discrepancy between protected groups. Although statistical evidence generally isn’t solely determinative of malicious intent, it could potentially suggest an employer should have reasonably been aware of bias. Several federal agencies have adopted a “4/5ths rule of thumb” to quantify a “substantially different rate of selection” between groups, although they acknowledge that the rule serves primarily as a guideline.
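The arithmetic behind the “4/5ths rule of thumb” can be sketched in a few lines. Under the rule as commonly stated, a group’s selection rate is compared with the rate of the most-selected group, and a ratio below 0.8 is treated as a signal of a substantially different rate of selection. The function names and the applicant counts below are hypothetical, and the result is a screening signal rather than a legal conclusion.

```python
def selection_rate(selected, applicants):
    """Share of a group's applicants who were selected."""
    if applicants <= 0:
        raise ValueError("applicants must be positive")
    return selected / applicants

def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has a positive selection rate")
    return {group: rate / highest for group, rate in rates.items()}

def below_four_fifths(rates, threshold=0.8):
    """Groups whose ratio to the highest-rate group falls under the threshold."""
    ratios = impact_ratios(rates)
    return [group for group, ratio in ratios.items() if ratio < threshold]

# Hypothetical counts: 48 of 80 selected in group_a, 12 of 40 in group_b.
rates = {
    "group_a": selection_rate(48, 80),
    "group_b": selection_rate(12, 40),
}
ratios = impact_ratios(rates)
flagged = below_four_fifths(rates)
print(ratios)
print(flagged)
```

In this invented example, group_b is selected at half the rate of group_a, which falls well under the four-fifths threshold. With small applicant pools the ratio can swing widely from a single hiring decision, which is one reason to treat it as a guideline.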

Ways to consider human intent in automated hiring

Because direct evidence of motive is usually difficult to obtain, most attempts to determine discriminatory intent in algorithmic bias cases will likely involve some uncertainty and weighing of the facts. A recruitment algorithm would generally be considered discriminatory if, holding all other factors equal, one protected variable (be it race, gender, etc.) would affect a person’s likelihood of receiving a job offer. But in practice, this definition does not easily apply to intent. The challenge lies not only in detection, but in remedy—it is not only necessary to distinguish an employer’s motive from algorithmic outcomes, but also to decide how to address it. However, even with so many uncertainties, a few relevant questions emerge when identifying and addressing discriminatory intent in the context of algorithms.
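The “holding all other factors equal” definition can be expressed as a simple counterfactual check: score the same applicant twice, changing only the protected attribute, and compare. The sketch below assumes access to a scoring function, which the opacity of third-party tools often rules out in practice. Both scoring functions, the field names, and the applicant record are invented for illustration.

```python
from typing import Any, Callable, Dict

Applicant = Dict[str, Any]

def counterfactual_gap(
    score: Callable[[Applicant], float],
    applicant: Applicant,
    attribute: str,
    alternative: Any,
) -> float:
    """Change in score when only the protected attribute is altered."""
    flipped = dict(applicant)
    flipped[attribute] = alternative
    return score(flipped) - score(applicant)

def biased_score(applicant: Applicant) -> float:
    """Hypothetical scorer that rewards one gender directly."""
    bonus = 0.2 if applicant["gender"] == "male" else 0.0
    return 0.1 * applicant["years_experience"] + bonus

def neutral_score(applicant: Applicant) -> float:
    """Hypothetical scorer that ignores the protected attribute."""
    return 0.1 * applicant["years_experience"]

candidate = {"gender": "female", "years_experience": 5}
biased_gap = counterfactual_gap(biased_score, candidate, "gender", "male")
neutral_gap = counterfactual_gap(neutral_score, candidate, "gender", "male")
print(round(biased_gap, 2), neutral_gap)
```

A nonzero gap shows that the protected attribute changes the outcome, but it says nothing about why the scorer behaves that way or what the employer intended. A zero gap is also not proof of fairness, since other fields can act as proxies for the protected attribute.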

First, has the hiring manager demonstrated a voluntary will to treat protected classes in an inequitable manner? Traditional direct evidence of unlawful bias, such as a deliberate action to target a protected class or a public admission of guilt, could establish negative intent, thus providing a potentially irrefutable means of holding employers accountable.

Second, has the hiring manager exhibited efforts to treat applicants equitably to the best of their knowledge? Even if employers are unaware of how third-party software operates, they can still enforce standards to mitigate bias, such as human supervision, diversity trainings, self-audits, or outcome analysis. Employers who decline to enact reasonable equal employment standards, or who are unable to demonstrate that a chosen algorithm is relevant to their hiring needs, may find their intentions in question.

Third, would either party experience a significant degree of harm in the event of an error in judgement of intent? In other words, if an employer were inaccurately deemed to harbor either benign or negative intent, would either the plaintiff or defendant suffer any unjust reputational, defamatory, or financial losses? This consideration does not include impact on an entire protected class, but rather the potential harm to the specific named plaintiff and specific named defendant in the event of an untrue allegation or ruling.

Fourth, are there reasonable methods available for both the plaintiffs and the employers to prove their cases? If a particular case lacks direct evidence, plaintiffs bear a risk of error when relying on indirect or statistical evidence to allege intent. However, both job applicants and employers face systematic challenges (most notably, asymmetric information regarding patented algorithms) in compiling evidence. Therefore, another consideration is the strength of the presented evidence given a reasonable, case-by-case feasibility of gathering it.

These four questions are not intended to emulate a judicial balancing test, but they do offer a preliminary framework for considering questions of discriminatory intent. The first two questions provide a more conclusive mechanism for identifying intent: If an employer directly expresses discriminatory intent or cannot demonstrate reasonable hiring policies, it is easier to distinguish negative human intent among algorithm-based outcomes. On the other hand, the third and fourth questions are more subjective and weigh the unique possibilities of opacity and harm in algorithmic bias to determine appropriate redress in an intent claim. Going forward, it is possible that Congress or the courts will provide further legislative or judicial clarity on the topic of algorithmic bias, but in the meantime, these are some factors to consider.

The Brookings Institution is a nonprofit organization devoted to independent research and policy solutions. Its mission is to conduct high-quality, independent research and, based on that research, to provide innovative, practical recommendations for policymakers and the public. The conclusions and recommendations of any Brookings publication are solely those of its author(s), and do not reflect the views of the Institution, its management, or its other scholars.

Microsoft provides support to The Brookings Institution’s Artificial Intelligence and Emerging Technology (AIET) Initiative, and Amazon, Apple, Facebook, and Google provide general, unrestricted support to the Institution. The findings, interpretations, and conclusions in this report are not influenced by any donation. Brookings recognizes that the value it provides is in its absolute commitment to quality, independence, and impact. Activities supported by its donors reflect this commitment.
