Current drafts of the reauthorized Elementary and Secondary Education Act (ESEA) fall short of a commitment to use research to improve education. The bills—the “Student Success Act” in the House and the “Every Child Achieves Act” in the Senate—no doubt represent compromises and tradeoffs as any major legislation would. But who is arguing for less research and innovation in education?

Much of what is debated about No Child Left Behind is its accountability structure: annual tests, “adequate yearly progress,” and the goal of moving every student to proficiency by 2014. But another important theme in NCLB was using “scientifically-based research.” Its steady drumbeat of “use research, use evidence, use scientific methods” represented an embrace of education research and especially the practice of using causal methods to study program effectiveness. NCLB did not go as far as requiring research evidence as a basis for program funding, and in 2002 not much evidence would have met that standard. Related legislation that year created the Institute of Education Sciences, which followed through on the vision of building and using evidence to improve education.

Studies showed that some of what had been widely believed did not prove to be true. For example, after-school programs did not improve outcomes; using education software to support teaching did not raise test scores; teacher professional development did not raise test scores; voucher programs did not raise test scores; and a range of programs to promote social and emotional learning had little effect on outcomes. It seems like a list of negatives, but evidence is useful one way or the other. And, as Tom Kane has argued, more than 80 percent of clinical trials fail to show effectiveness. Why would education be different? And some things that were not known became evident: for example, parents and students attending charter schools were more satisfied with the schools, but the schools themselves ranged widely in their effectiveness; math textbooks can affect math skills differently; and NCLB itself raised test scores.

Research is included in the current ESEA drafts, but to no greater extent than it was for NCLB, and in some ways, it’s to a lesser extent. The House bill substitutes a new term, “evidence based,” for NCLB’s “scientifically based.” The substitution seems innocuous, but could prove problematic because the bill does not further define “evidence.” (NCLB defined “scientifically based” in Title IX.) If a state conducts a survey and finds that many students participating in a program think it is effective, is that evidence the program is effective? Under some definitions, yes. Under others, not so much. Opinions about effects are not the same as measures of effects.

Adding a definition of evidence will clarify what qualifies and what does not. There is language in the House bill that says programs that receive funding under Title II to prepare new teachers need to “reflect evidence-based research, or in the absence of a strong research base, reflect effective strategies in the field, that provide evidence that the program or activity will improve student academic achievement.” So, what are “effective strategies in the field”? How is it determined that they improve academic achievement? Would it not be “evidence-based research” that shows the practices led to improvement?

The House and Senate bills also call for evaluations. The Senate bill calls for a national evaluation of its new literacy program, an evaluation of a program that serves students in foster care, and a demonstration program of innovative assessment systems that states can pilot. That demonstration is likely to be evaluated, so it is mentioned here. The House bill calls for an evaluation of the charter school program and the magnet schools program, neither of which is new. It would be the third evaluation of magnet schools.

Of course, ESEA does not have to be directive about what should be studied. It can set aside money that can be used for studies, and allow their topics and focus to emerge elsewhere. Both bills include the key clause that funds research and evaluation. It states that the Secretary of Education can set aside for evaluation up to 0.5 percent of funds for all titles except the first. Using 2014 appropriations, the set-aside amount is roughly $35 million. IES also receives funding to carry out the National Assessment of Educational Progress, to support states in developing their databases, and for other purposes such as studies of special education. The set-aside is to support studies that relate to ESEA.

This is not a lot of money for research, for three reasons. One is that research is a uniquely federal responsibility, not just for education but generally. Fiscal federalism assigns the federal government responsibility for research because states and localities have incentives to underinvest in research. Its costs accrue to them and its benefits accrue to everybody. When the federal government invests in research, costs and benefits align.

A second perspective for viewing the education-research investment as paltry is to compare it with the federal investment in the National Institutes of Health. In 2014, that investment was about $30 billion. It’s hard to argue that there is “too much” investment in health research. It’s vital to the nation’s population. But it’s also hard to argue that it is hundreds of times more important to invest in health research than education research for America’s low-income students. Education is vital to the nation’s population too. There is much more federal spending on health than on education, through the Medicare and Medicaid programs, particularly, but that is not a basis for why federal spending for health research is so much larger than education research. The federal role in supporting research is primary regardless of which level of government spends on services.

A third perspective on research funding also points to enlarging it. On its own, the K-12 public education system is static. It wants to do the same thing. Taxes flow in, students and teachers come to school in the morning, there are classes and graduation ceremonies and sports events, and it is repeated next year. The system wants to be in equilibrium, and when it is pushed out of equilibrium, it wants to get back to it.

For example, in the past decade, how states and districts evaluate the performance of their teachers has changed rapidly. Many teachers are now being evaluated partly based on how their students score on tests. But has anything really changed? The new systems replaced previous systems believed to rate teachers too positively. Nearly all teachers were “effective.” After putting new systems in place, Rhode Island reported that 98 percent of its teachers were effective; Florida, 97 percent; and New York, 96 percent. The system returned to where it was.

The point is not about teacher evaluation per se. It is that research has the potential to put energy into the system. What is thought to be best practice for teaching reading, or math, or any subject, might change if research shows that new methods improve on current methods. Or a program of social and emotional development might prove effective in reducing student behavior problems. Or an approach to teaching English to non-English speakers might prove effective in promoting their language acquisition and academic achievement. The list is nearly endless.

Of course, research needs to be conducted and disseminated, and its timeframes can seem slow to policymakers. But here is a margin for bringing innovation to a system that does not have much incentive to innovate. It seems at least as useful to push on this margin as it is to study how education is delivered in Finland and Singapore, which happened after those two countries had the top scores on the Programme for International Student Assessment. Their systems might have some attractive features, but generalizing them to a vast country with a heterogeneous population and a highly decentralized education system is problematic. (Tom Loveless has warned of the perils of “edu-tourism.”)

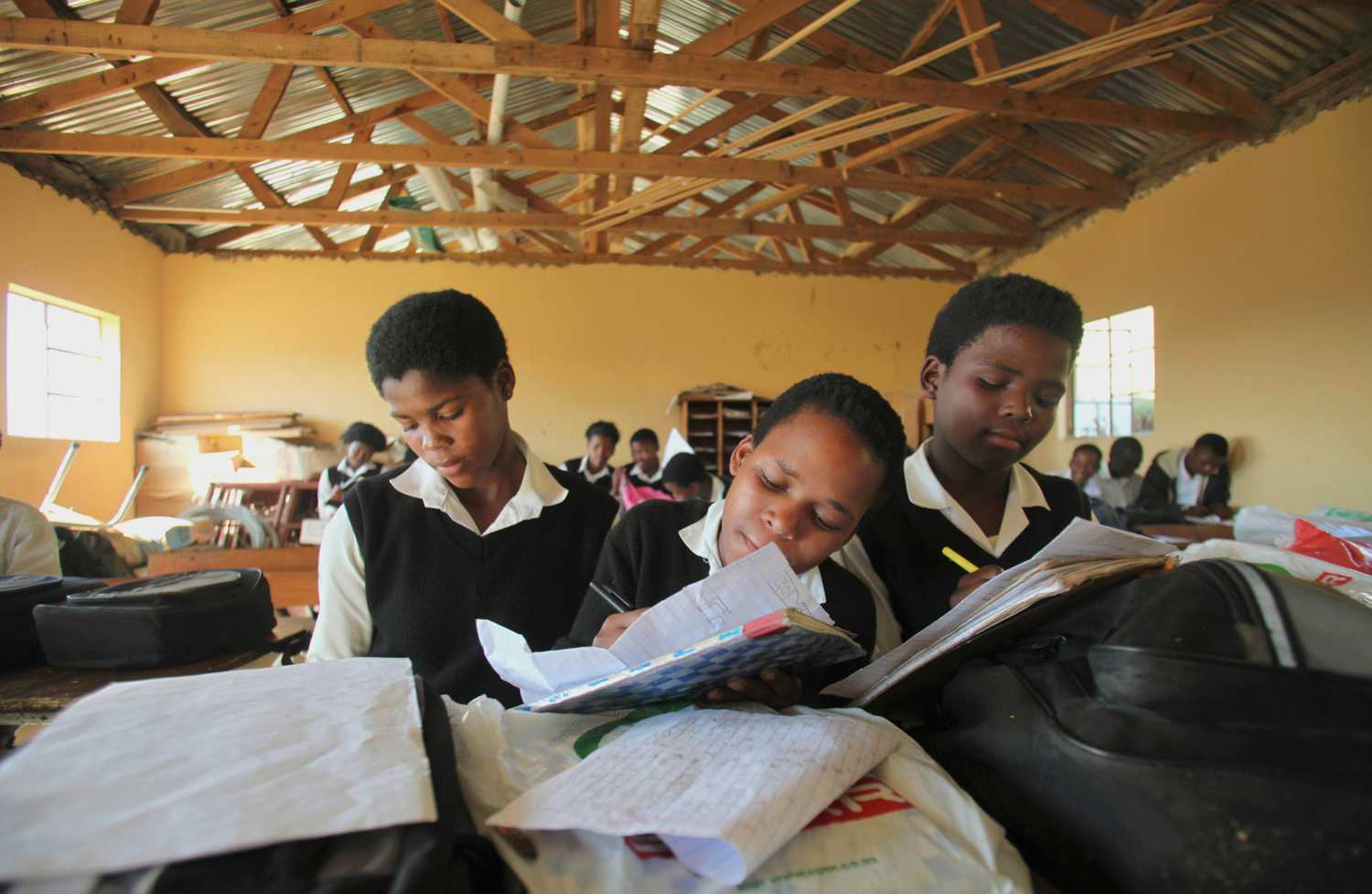

America is entering a new phase in which the majority of its public school students are from low-income households. That’s about 25 million students. Suppose that for each of these students, ESEA set aside $10 a year, a dollar for each month they are in school, for federal research to improve education. That’s $250 million. It sounds like a lot of money, but the scale of the K-12 enterprise is vast and seemingly large numbers can be misleading. Compared with the more than $600 billion we are spending each year for K-12 education, it’s about four-hundredths of a percent.
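The arithmetic behind this proposal can be checked with a short sketch. The figures are the rounded ones quoted in the paragraph above; the function and variable names are illustrative only.

```python
# Rounded figures quoted in the text; names are illustrative assumptions.
LOW_INCOME_STUDENTS = 25_000_000    # "about 25 million students"
DOLLARS_PER_STUDENT = 10            # proposed $10 per student per year
K12_SPENDING = 600_000_000_000      # "more than $600 billion" per year


def research_set_aside(students: int, dollars_per_student: int) -> int:
    """Total annual research funding under a per-student set-aside."""
    return students * dollars_per_student


def share_of_spending(amount: float, total: float) -> float:
    """Express amount as a percentage of total."""
    return 100.0 * amount / total


set_aside = research_set_aside(LOW_INCOME_STUDENTS, DOLLARS_PER_STUDENT)
share = share_of_spending(set_aside, K12_SPENDING)

print(f"Set-aside: ${set_aside / 1e6:,.0f} million")      # Set-aside: $250 million
print(f"Share of K-12 spending: {share:.3f} percent")     # Share of K-12 spending: 0.042 percent
```

Because K-12 spending is described as more than $600 billion, the true share would be slightly smaller than the value printed here.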

The draft ESEA legislation will be modified as it moves to the floor of the respective chambers and then to conference. Increasing funds for research could be done two ways. One would be simply to have the set-aside apply to all spending under the bill. It then would include Title I, which is larger than all the other titles combined. For the president’s 2015 budget request, the change would increase the amount set aside for research to $90 million. Or the set-aside rate itself could be increased, to, say, three percent. It’s not getting to the one-dollar-a-month set-aside, but it’s something.
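As a rough comparison of the two options with the $250 million target, the sketch below backs out the funding bases implied by the quoted set-aside amounts. It rests on assumptions not stated in the text: that the 2014 and 2015 figures can be loosely compared, and that the higher rate would apply to the same non-Title I base as the current 0.5 percent. All names are illustrative.

```python
# Figures quoted in the text (rounded); everything derived is an estimate.
CURRENT_RATE = 0.005                 # 0.5 percent set-aside in both bills
SET_ASIDE_EXCL_TITLE_I = 35e6        # roughly $35 million, 2014 appropriations
SET_ASIDE_ALL_TITLES = 90e6          # $90 million, 2015 budget request
HIGHER_RATE = 0.03                   # "say, three percent"
TARGET = 250e6                       # $10 a year for 25 million students


def implied_base(set_aside: float, rate: float) -> float:
    """Funding base that would produce `set_aside` at the given rate."""
    return set_aside / rate


# Implied bases (assumption: the two budget years are roughly comparable).
base_excl_title_i = implied_base(SET_ASIDE_EXCL_TITLE_I, CURRENT_RATE)
base_all_titles = implied_base(SET_ASIDE_ALL_TITLES, CURRENT_RATE)

# Option 1: keep the rate, extend the set-aside to all titles.
option_one = SET_ASIDE_ALL_TITLES
# Option 2 (assumption): raise the rate on the current non-Title I base.
option_two = HIGHER_RATE * base_excl_title_i

for label, amount in [("All titles at 0.5%", option_one),
                      ("Non-Title I at 3%", option_two)]:
    pct_of_target = 100 * amount / TARGET
    print(f"{label}: ${amount / 1e6:,.0f} million "
          f"({pct_of_target:.0f}% of the $250 million target)")
```

Under these assumptions, both options fall short of the one-dollar-a-month figure, which is consistent with the paragraph's conclusion.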

In the 13 years since NCLB was passed, we’ve seen more clearly that research is essential to improve education, just as clinical trials are essential to improve health care. A commitment to equity lies at the heart of ESEA, and spending $10 a year on research for each of America’s low-income students will help meet that commitment.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).