Executive summary

Implicit in recent Evidence Speaks postings is the need to develop evidence-based interventions for improving student achievement. Comparative analysis of the education research literature versus the educational neuroscience literature suggests that education research, grounded in the behavioral and cognitive sciences, is currently the better research base for instructional design, particularly if our goal is to improve educational outcomes in the near to intermediate future.

Introduction

In recent Evidence Speaks postings, authors have discussed the implications of NAEP scores (Dynarski, Kane, Whitehurst), the apparent inability of a pre-K curriculum to deliver lasting academic improvement (Farran & Lipsey), and the small percentage of educational interventions that produce positive classroom effects (Jacobs).[i] These data point to concerns among educators and policy makers about the need to improve classroom instruction. The learning sciences are vast, including areas of neuroscience and psychology, as well as education research itself.[ii] Where might we best look for research that could provide a basis for developing evidence-based classroom interventions? Here I offer a small first step toward answering that question by comparing educational neuroscience with more traditional education research. If the goal is to improve instruction in the near to intermediate future, education research remains the most likely source.

Since its inception in the mid-1990s, educational neuroscience has evolved into an active research front. Educational neuroscientists believe that elucidating the brain mechanisms underlying cognition and behavior can improve classroom teaching and learning. Over a much longer history, education research has also attempted to apply its behavioral findings to improve educational outcomes.

The literatures and document co-citation

The Web of Science (WoS) subject field “Education and Educational Research” contains articles published in 231 education research journals. I use the 500 top-cited articles in this subject field, published between 1997 and 2015, as the set of publications to generate what I will call the education research literature. The 500 top-cited articles over the same time period indexed as “Education and Educational Research” that also contain “brain” or a variant of “neuro” in their topic word field generate the educational neuroscience literature. The two article sets contain only three common items. Note that the generating sets contain only articles and would tend to under-represent research areas that depend heavily on books rather than journal articles. What I will call the education research literature and the educational neuroscience literature are all the articles cited by the two generating sets. Together the two literatures cite 44,000 unique documents.
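The construction of a literature from a generating set can be sketched in a few lines of Python. This is a minimal illustration rather than the procedure actually used for the study: the article identifiers, reference identifiers, and the `build_literature` helper are all hypothetical, and real WoS records would need parsing and cleaning first.

```python
def build_literature(generating_set):
    """Return the set of all documents cited by the articles in a generating set."""
    literature = set()
    for references in generating_set.values():
        literature.update(references)
    return literature

# Hypothetical generating sets: citing article -> list of cited references.
education = {"edu-1": ["r1", "r2"], "edu-2": ["r2", "r3"]}
ed_neuro = {"neuro-1": ["r3", "r4"], "edu-2": ["r2", "r3"]}

# Articles that appear in both generating sets (three in the actual study).
shared_articles = education.keys() & ed_neuro.keys()

# Unique documents cited by the two literatures together.
combined = build_literature(education) | build_literature(ed_neuro)
```

Here the toy generating sets share one article and together cite four unique documents.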

Document co-citation analysis is a bibliometric method used to delineate a discipline’s semantic or intellectual structure. If document A cites documents B and C, then B and C are co-cited. Two documents that have high co-citation counts are likely to be conceptually or thematically related. For a set of cited references, like those representing education research, the co-citation counts can be presented as co-citation networks, where the nodes are cited articles and the weighted edges the strength of the co-citation link. The co-citation network depicts what the field is about by mapping relationships among a literature’s cited references; that is, the prior research upon which the literature is based. The components, or sub-networks, of the co-citation network represent research specializations within the larger field. Smaller communities of interest can sometimes be identified within these components.[iii]
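The counting step behind this definition can be illustrated with a short Python sketch. It assumes each citing article is available as a plain list of cited-document identifiers; the function name `cocitation_counts` and the toy data are hypothetical and do not reproduce the Sci2 workflow used for the analysis.

```python
from collections import Counter
from itertools import combinations

def cocitation_counts(reference_lists):
    """Count how often each pair of cited documents shares a reference list."""
    counts = Counter()
    for references in reference_lists:
        # Deduplicate, then sort so every pair has one canonical ordering.
        for pair in combinations(sorted(set(references)), 2):
            counts[pair] += 1
    return counts

# Hypothetical citing articles and their reference lists.
citing = {
    "A": ["B", "C"],
    "D": ["B", "C", "E"],
    "F": ["C", "E"],
}
counts = cocitation_counts(citing.values())
```

In this toy example B and C are co-cited twice (by A and D), C and E twice (by D and F), and B and E once (by D). Those counts become the edge weights of the co-citation network.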

To assess how education research and educational neuroscience are related, the two literatures are combined in a single co-citation analysis. To facilitate visualization and interpretation, articles appearing in the network have minimum co-citation counts of five. Nodes designating the most highly connected articles are labeled to characterize the content of a component or community.
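As a rough sketch of how thresholding shapes the map, the Python below drops weak links and then finds the connected components of what remains. It assumes the threshold of five applies to each pair's co-citation count, which is one reading of the description above; the function name and the toy counts are hypothetical.

```python
from collections import defaultdict

def components_above_threshold(pair_counts, min_count=5):
    """Keep pairs co-cited at least min_count times; return components, largest first."""
    adjacency = defaultdict(set)
    for (a, b), n in pair_counts.items():
        if n >= min_count:
            adjacency[a].add(b)
            adjacency[b].add(a)

    seen, components = set(), []
    for start in adjacency:
        if start in seen:
            continue
        # Depth-first search collects every node reachable from start.
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(adjacency[node] - component)
        seen |= component
        components.append(component)
    return sorted(components, key=len, reverse=True)

# Hypothetical co-citation counts between cited documents A through E.
toy_counts = {("A", "B"): 9, ("B", "C"): 6, ("D", "E"): 7, ("A", "D"): 2}
components = components_above_threshold(toy_counts)
```

The weak A-D link falls below the threshold, so the toy network splits into two components, {A, B, C} and {D, E}. The same logic explains how two literatures can end up as separate components when few strong co-citation links connect them.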

In interpreting this analysis, there are several things to keep in mind. First, the literatures are dependent upon WoS indexing practices, which may not always cohere with expert opinion on what a field is about. Second, the co-citation analysis covers a period of nearly 20 years; the literature generated by the top-cited papers published in the last five years might look quite different. Third, the co-citation threshold results in components that represent the peaks of the research terrain and excludes foothills and flatlands that are also interesting and important. In addition, only the four largest components are discussed here. A big-picture comparison of two literatures omits much fine structure. Note that the literatures do contain the work of at least 75 of the 200 university-based education researchers judged most influential in 2015.[iv] This figure under-represents their presence, because cited references in WoS provide only first authors.

Findings

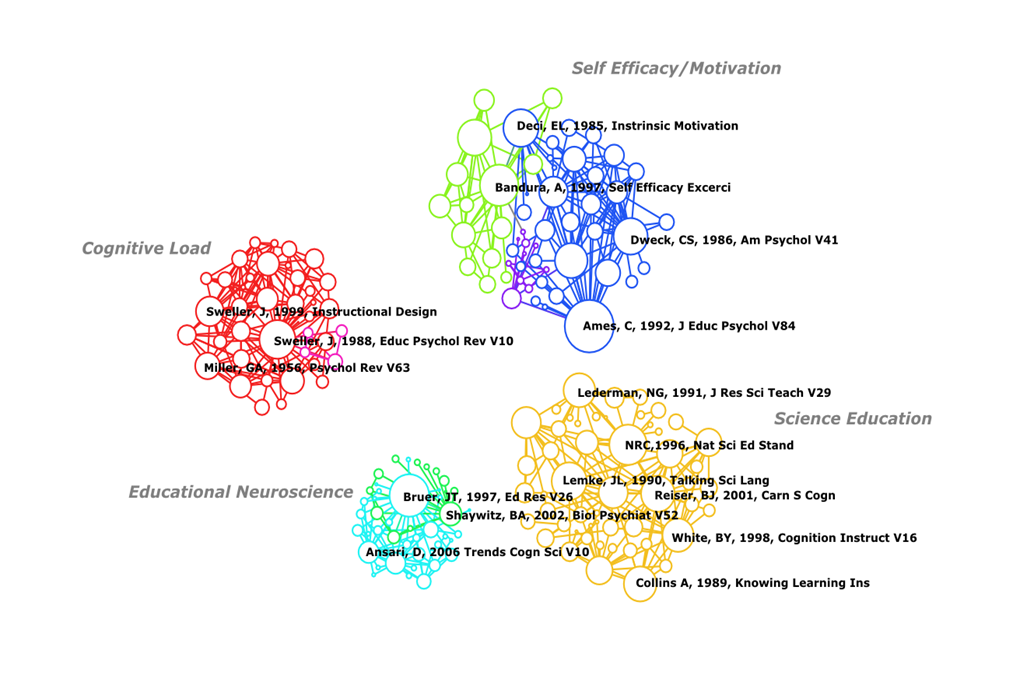

Figure 1 shows the four largest components resulting from the analysis.[v] Each of the four components represents a major research focus: Self-efficacy/Motivation, Cognitive Load Theory, Science Education, and Educational Neuroscience. The first three components arise solely from the education research literature. The Educational Neuroscience component arises solely from the educational neuroscience literature. The map would have been the same if the literatures had been analyzed separately. That is, the two literatures are distant in co-citation space.[vi]

Figure 1. Document co-citation network for the education research and educational neuroscience literatures, 1997–2015

Education research

The Self-efficacy/Motivation component contains two major research communities. Albert Bandura’s work on the influence of self-efficacy on student and teacher classroom performance is central to this community (green nodes). Central authors in the other community (blue) are C. Ames, E.L. Deci and C.S. Dweck. This research examines the effects of student and teacher beliefs about the nature of intelligence on motivation and adoption of classroom learning strategies. The majority of the 55 articles in this component are experimental studies done within learning environments. These articles are published primarily in educational psychology journals (e.g., Journal of Educational Psychology). Its connection to psychology is via social psychology (e.g. Journal of Personality and Social Psychology).

John Sweller is the central author within the Cognitive Load Theory component (red). Researchers in this tradition attempt to design classroom instruction sensitive to limitations on human short-term, or working, memory. This work includes studies investigating the optimal use of technology and multimedia in instructional design. The 41 articles in this component overwhelmingly report results of experimental studies on student learning. They tend to be published in educational psychology journals (e.g., Journal of Educational Psychology). This applied work is linked to cognitive psychological research on working memory, as can be seen from the centrality of George A. Miller’s classic “Magic Number Seven” article within this community.

The most highly co-cited article in the Science Education component (gold) is the 1996 National Research Council’s report National Science Education Standards. This component contains articles on the efficacy of teaching techniques like inquiry-based learning, the value of teaching students about scientific argumentation, and the effects of student beliefs about science on learning. The articles include publications by science educators, such as N.G. Lederman and J.L. Lemke, as well as cognitive psychological research by authors such as A. Collins, B.J. Reiser, and B.Y. White. These two categories are reflected in the source journals that appear in the component. Twenty-five of the 51 articles in this component appear in science education journals (e.g. Science Education) and 13 in more cognitively oriented journals (e.g., Journal of the Learning Sciences). This cluster indicates an interaction between research on classroom science instruction and psychological research on expertise and conceptual change.

Educational neuroscience

The Educational Neuroscience component contains two major research communities. The dominant nodes in the larger community (aqua) are J.T. Bruer and D. Ansari. The articles in this community primarily debate the question posed in Bruer (1997): “Is educational neuroscience a bridge too far?”[vii] Twenty-six of the community’s 31 articles, mostly reviews and editorial material, address the promise and pitfalls of educational neuroscience. They are published in a variety of neuroscience and education journals, most commonly in Mind, Brain, and Education. B.A. Shaywitz is the central author in the smaller research community (green). It contains 12 research articles (11 are brain imaging studies) on dyslexia or word recognition. Typically, these articles appear in the educational neuroscience literature as examples of the promise of educational neuroscience. Note that the exemplars do not represent a wide array of educational issues. They address neither subject matter learning nor instructional interventions, and are published in basic neuroscience journals.

What differentiates the educational neuroscience literature from the education research literature is that the educational neuroscience core articles are about issues confronting the field of educational neuroscience, not about issues confronting educators concerned with improving classroom instruction. The educational neuroscience literature is about itself, not about education. The core literature of educational neuroscience is a meta-literature.

Conclusions

Document co-citation analysis suggests that education research is applying behavioral science to study how motivation, memory limitations, and beliefs about science affect classroom learning. The highly co-cited articles report results of experimental studies in classrooms and learning environments. Currently, educational neuroscience appears to be a meta-literature supported by examples of cognitive neuroscientific research on word recognition and dyslexia. There are no significant co-citation links between the two literatures.

It would seem that if one is interested in improving classroom learning—addressing the problems implicit in the Evidence Speaks postings referred to above—education research provides the most likely source for insights into designing evidence-based interventions.

This is particularly true if our goal is improvement in the near to intermediate future. Educational neuroscience is still at an early stage of development. At a January 2015 White House Office of Science and Technology Policy Workshop, Bridging Neuroscience and Learning, participants were asked to list successful neuroscience-based interventions that addressed real-world educational problems.[viii] No examples were forthcoming. It would appear that educational neuroscience remains a promissory note for improving instruction, whereas results from the behavioral and cognitive sciences might be more immediately useful.

One can predict neither the course of research nor the possible, eventual contributions of neuroscience to education. Nonetheless, in formulating funding guidelines, we should first be clear about what problems we are trying to solve and the time scale over which we desire to effect change. We can then apportion resources between education research and educational neuroscience accordingly.

[i] https://www.brookings.edu/about/centers/ccf/evidence-speaks.

[ii] This posting is derived from a larger study, Bruer (2015), comparing the neuroscience of learning, the psychology of learning, education research, and educational neuroscience, “Where is Educational Neuroscience?” to appear in Educational Neuroscience 1:1:1-13.

[iii] The co-citation network is an undirected weighted graph, the sub-networks are weak components, and the research communities are generated using the Smart Local Moving algorithm. The labeled nodes are the nodes having the highest total degree in the components and communities. The graph layout is generated using a Force Atlas algorithm, in which linked nodes attract and unlinked nodes repel. Sci2 and Gephi were used to generate and visualize the graph.

[iv] http://blogs.edweek.org/edweek/rick_hess_straight_up/2015/01/2015_rhsu_edu-scholar_public_influence_rankings.html.

[v] Nodes are sized by total degree (i.e., the number of papers with which they are co-cited). Nodes with the highest degree in each component are labeled. Edges are weighted by co-citation level. Colors indicate research communities within components, identified using the Smart Local Moving algorithm. A Force Atlas algorithm generated the visualization.

[vi] One might think that the educational neuroscience literature is embedded in the neuroscience literature, but analysis of the 500 top-cited articles in neuroscience containing variants of “learn” or “education” in their topic words results in research components on the neural mechanisms of learning and memory and on reward conditioning. The educational neuroscience literature does not appear. See the citation in note ii above.

[vii] Bruer, J. T. (1997). Education and the brain: A bridge too far. Educational Researcher, 26, 4–16.

[viii] I participated in the workshop. I could find no information on the Internet about it, other than press releases from universities whose faculty members attended. It is mentioned in a statement to Congress that says neuroscience is poised to inform educational practice. http://docs.house.gov/meetings/AP/AP19/20150326/103218/HHRG-114-AP19-Wstate-HandelsmanJ-20150326.PDF
