The rulemaking process has received a great deal of attention over the course of Trump’s presidency, in part because of the administration’s commitment to deregulation—and, in particular, its focus on repealing Obama-era rules.

Today, a new report from the Brown Center on Education Policy takes a deep dive into the process behind the Accountability and State Plans rule (the Accountability rule). This regulation was proposed by the Department of Education “to provide clarity and support” to state and local education agencies in implementing core provisions of the Every Student Succeeds Act (ESSA), the law that replaced No Child Left Behind. The final rule was published in the final days of the Obama administration and subsequently repealed via the Congressional Review Act. This case study provides insight into how the public, agencies, and Congress shape the content and lifespan of final rules. In particular, it examines the relationship between agency responsiveness and rule durability.

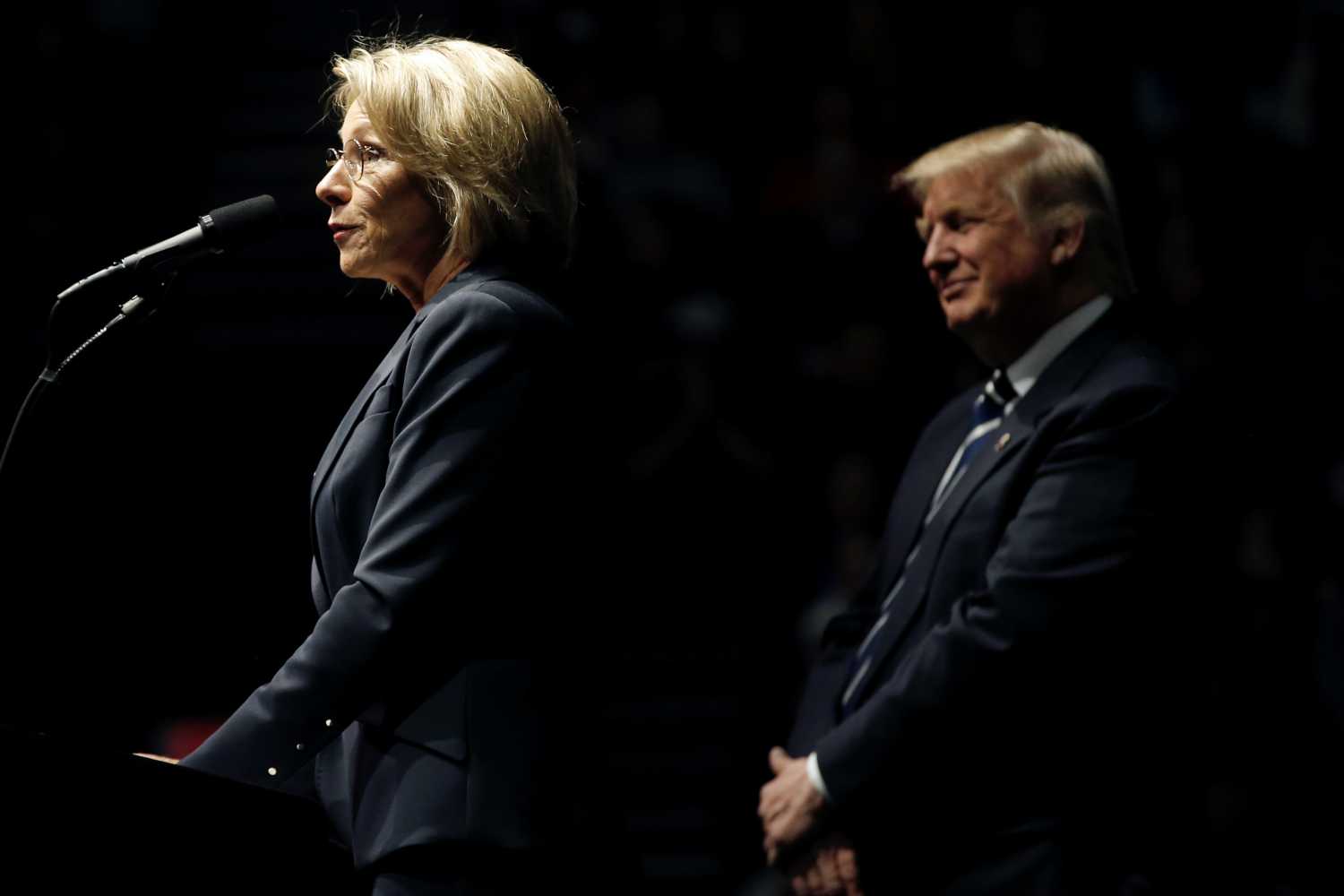

In the report, Brown Center Fellow Elizabeth Mann Levesque explores the input that the department received from organizations via public comments and from members of Congress via oversight hearings, as well as how the department revised the draft rule in response to this feedback. As a preview, the report finds that the department received a good deal of criticism on several high-profile aspects of the rule. In turn, the department was relatively responsive to this feedback. However, the changes the department made to the draft rule were ultimately not enough to satisfy Republican members of Congress, who repealed the final rule early in 2017.

Input from organizations

In the process of revising this rule, the Department of Education received feedback from a number of different sources. This report focuses specifically on feedback from organizations that submitted public comments. The research team identified 512 comments submitted by organizations, a small but important subset of the 21,000 total comments on the rule. This subset matters because prior research suggests that agencies generally give more weight to sophisticated or technical comments. Compared to individuals, organizations are typically in a better position to provide this type of feedback given their resources and expertise.

As shown by the figure above (taken from the report), the department received input from a wide variety of organizations. Slightly more than half of the comments came from advocacy organizations or local education agencies. Although only 13 percent of comments came from state governments, 82 percent of the states had at least one state official submit comments. Other organizations, including research centers, labor unions, and faith-based organizations, accounted for a smaller share of the comments.

To analyze the input that organizations provided, the research team coded each comment to identify the section or sections on which the commenter provided feedback. For each section within a comment, researchers identified whether the commenter requested a major change, requested a minor change, or expressed support. Major changes include requests to heavily revise the substantive requirements of a section, statements of opposition to the regulation section, and recommendations to delete a section entirely. Minor changes include requests for clarification, definitions, examples, guidance, or other small adjustments consistent with the substance of the proposed section. In sections coded as support, the commenter explicitly expressed support for a specific section of the regulation. While 44 percent of mentions were coded as major changes and 34 percent as minor changes, only 22 percent were coded as support. (Please see the full report for additional details on the coding process.)
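For readers who want to see how a tally like this works in practice, the short sketch below computes the share of mentions in each category and counts major-change requests by section. It is only an illustration: the sample records, the section labels, and the function names `code_shares` and `major_requests_by_section` are hypothetical and do not come from the report or its data.

```python
from collections import Counter

# Hypothetical coded mentions, one per (comment, section) pair.
# These records are invented for illustration; they are not the report's data.
mentions = [
    ("comment-1", "summative rating", "major"),
    ("comment-1", "implementation timeline", "minor"),
    ("comment-2", "summative rating", "major"),
    ("comment-3", "implementation timeline", "support"),
]


def code_shares(mentions):
    """Return the percentage of mentions assigned to each code."""
    counts = Counter(code for _, _, code in mentions)
    total = sum(counts.values())
    return {code: round(100 * n / total) for code, n in counts.items()}


def major_requests_by_section(mentions):
    """Count how many mentions requested a major change, per section."""
    return Counter(
        section for _, section, code in mentions if code == "major"
    )


shares = code_shares(mentions)
by_section = major_requests_by_section(mentions)
print(shares)
print(by_section.most_common(1))
```

Counting at the level of mentions rather than whole comments reflects the coding described above, in which a single comment can address several sections and receive a different code for each.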

Based on these data, the report analyzes which sections of the regulation received the most requests for “major changes” and then discusses where consensus emerged on high-profile issues. For example, the analysis finds a consensus in favor of providing states with flexibility to decide whether to use a summative school rating in their accountability systems.

In addition to the comment process, agencies may also receive input via congressional oversight hearings. In this case, Republican members of Congress held nine oversight hearings that focused on the department’s approach to rulemaking under ESSA. Several hearings focused on the Accountability rule. Republican members of Congress objected to the department’s approach to the rulemaking process, arguing that the regulations went beyond the letter and intent of the law. Members of Congress also expressed concern about several of the issues that surfaced in the comments, such as the implementation timeline and the summative rating requirement.

Overall, the analysis in the report suggests that the department was relatively responsive to feedback from commenters and members of Congress, yet also held firm in certain cases. For example, the department decided to move the implementation timeline back one year, as many of the commenters and members of Congress requested. On the other hand, it retained the summative rating requirement despite the strong criticism it received from commenters and Republicans in Congress.

Agency responsiveness and rule durability

The final rule was published in the waning days of the Obama administration, on Nov. 29, 2016. Early in 2017, the Republican-controlled Congress and President Trump repealed the rule under the authority of the Congressional Review Act. The case of the Accountability rule thus illustrates the trade-offs agencies face when revising a draft rule. To create a durable Accountability rule, the Department of Education may have had to eliminate what it considered key provisions of the regulation that were central to the department’s oversight role under ESSA. The broader implication is that credible threats to final rules, either via judicial review or congressional action, may force agencies to choose between substantive revisions to the final rule or the risk of subsequent repeal.

This analysis also speaks to important questions for future study. Are agencies more likely to revise the draft rule when bipartisan critiques arise? Faced with credible threats to rule durability from Congress, how do agencies decide whether to revise the rule or pursue an alternative strategy, such as manipulating the timing of the rulemaking process? The analysis in this report points to the importance of understanding these and related questions about when and how the relationship between agency responsiveness and rule durability shapes the public policies articulated in final rules.
