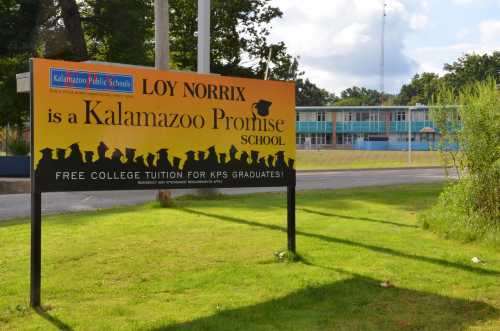

In her Sept. 18, 2018 blog post on Brookings’s Brown Center Chalkboard, Meredith Billings takes on the important issue of how variation in the design of promise programs might shape student outcomes. The moniker of “promise program” has grown into a catch-all term to describe place-based college scholarship opportunities for which eligibility is geographically determined, at least in part. As Dr. Billings rightly notes and as has been detailed by Michelle Miller-Adams of the Upjohn Institute, these programs are proliferating rapidly nationwide, with huge variation in design features. However, Dr. Billings’s conclusion that the Pittsburgh Promise has not been able to replicate the positive impacts observed in the original and exemplary Kalamazoo Promise program overlooks more recent research and is indicative of the complexity in drawing conclusions across a set of disparate programs.

First, despite the establishment of several promise programs a decade or more ago, their research and evaluation evidence is still developing. This lagging evidentiary base is due, in part, to the timeframe inherent in their associated theories of change. Most promise programs are guided by a theory of change that posits both immediate and accrued impacts. Immediate impacts result from the influx of new financial resources that help to solve immediate college affordability challenges. Accrued impacts build over time as younger cohorts of students progress through middle and high school with knowledge of these resources. We posit that this knowledge may motivate changed behavior and increased academic focus among students, families, teachers, PK-12 schools, and institutions of higher education.

From their Degree Project study, Doug Harris and colleagues concluded that promise programs that both aim to catalyze changes in high school and provide financial resources likely have greater efficacy than those designed without the broader educational environment in mind. In such system-oriented programs, at least four years of program implementation may be needed to realize and assess the full impact (both immediate and accrued) on student postsecondary enrollment outcomes. Thus, for a program like the Pittsburgh Promise, first implemented with the high school graduating class of 2008, the class of 2012 is the first cohort to experience all of high school under “promise” conditions. This group of students is just now at the six-year post-high school mark, a common benchmark for examining postsecondary degree completion. Research that draws on data only for the first few cohorts of students can therefore assess a program’s immediate impacts, but it is premature for understanding its accrued impact.
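The cohort timing above is simple arithmetic, and a minimal sketch makes it explicit. The function names and default values below are illustrative assumptions, not part of any program's documentation; the four-year and six-year spans are the ones cited in the paragraph above.

```python
def first_full_exposure_class(first_eligible_class, years_of_high_school=4):
    """First graduating class to spend all of high school under the program."""
    return first_eligible_class + years_of_high_school


def completion_benchmark_year(graduating_class, benchmark_years=6):
    """Year in which a class reaches the post-high school completion benchmark."""
    return graduating_class + benchmark_years


# Pittsburgh Promise: first implemented with the graduating class of 2008.
first_full_class = first_full_exposure_class(2008)
benchmark_year = completion_benchmark_year(first_full_class)
print(first_full_class, benchmark_year)
```

Under these assumptions, degree completion for the first fully exposed cohort only becomes observable about a decade after a program launches.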

As Dr. Billings notes, the Pittsburgh Promise is indeed different from the Kalamazoo Promise in a number of ways. In addition to residency requirements, which both programs have, Pittsburgh Promise eligibility depends on grade point average (GPA) and attendance criteria, which Kalamazoo does not employ. However, the GPA requirement for some level of support is 2.0 (lower than the 3.0 GPA criterion of the New Haven program with which Dr. Billings pairs the Pittsburgh Promise), and students graduating with a 2.5 GPA can use the scholarship to attend any accredited postsecondary institution in Pennsylvania, including trade and technical schools. The Pittsburgh Promise is a last-dollar program: students must apply for financial aid, and promise dollars are applied after all other aid and can extend to the full cost of college attendance, including room and board. Some last-dollar programs cover only up to tuition, which limits the financial benefit available to students who receive other sources of aid. We hypothesize that coverage of non-tuition expenses is critically important for economically disadvantaged students to afford four-year colleges.
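To illustrate why the scope of covered costs matters in a last-dollar design, here is a minimal sketch. The function, the optional cap, and all dollar figures are hypothetical and chosen only for illustration; they do not reflect the actual award rules or amounts of the Pittsburgh Promise or any other program.

```python
def last_dollar_award(covered_cost, other_aid, award_cap=None):
    """Gap left after other aid is applied, limited by an optional cap.

    covered_cost: the costs the program agrees to cover (hypothetical).
    other_aid: grants and scholarships applied first (hypothetical).
    award_cap: optional maximum award (an assumption, not a program rule).
    """
    gap = max(0, covered_cost - other_aid)
    return gap if award_cap is None else min(gap, award_cap)


# Hypothetical student receiving substantial need-based aid.
tuition = 10_000
room_and_board = 8_000
other_aid = 11_000

# Tuition-only design: other aid already exceeds tuition, so nothing is awarded.
tuition_only_award = last_dollar_award(tuition, other_aid)

# Full-cost design: the remaining gap includes room and board.
full_cost_award = last_dollar_award(tuition + room_and_board, other_aid)

print(tuition_only_award, full_cost_award)
```

In this made-up case, the student with the most other aid receives nothing under a tuition-only design but a meaningful award under a full-cost design, which is the mechanism behind the hypothesis above.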

Early studies of the Pittsburgh Promise drew on data from the first few cohorts of students, who graduated from high school between 2008 and 2010, as well as pre-promise cohorts. As Dr. Billings noted, these studies found inconsistent impacts on college enrollment, but clear patterns of shifting students’ enrollments to in-state eligible institutions. However, our more recent impact evaluation incorporated two additional cohorts and used multiple strategies for drawing causal inferences to investigate the effect of the Pittsburgh Promise on students’ immediate postsecondary enrollment and early college persistence outcomes.

Both analytic approaches yielded similar conclusions. As a result of promise eligibility, Pittsburgh Public School graduates are approximately 5 percentage points more likely to enroll in college, particularly in four-year institutions; 10 percentage points more likely to select a Pennsylvania institution; and 4 to 7 percentage points more likely to enroll and persist into a second year of postsecondary education.

Although the 5 percentage point effect on postsecondary enrollment that we estimate for the Pittsburgh Promise is lower than the 9-11 percentage point estimate for Kalamazoo, conservative cost-benefit calculations nevertheless indicate positive returns for Pittsburgh.

As the body of research on individual promise programs continues to mature, a clearer picture of the import of various design features will likely emerge. It is critical, however, to ensure that early evidence is not treated as definitive. This is especially so when programs’ own theories of change articulate a longer timeframe to maximum impact. As evidence accumulates on individual programs, we may be able to articulate clearly which design features support or impede efficacy. Doing so, however, is contingent upon aligning the research with the programs’ theories of change.
