Edutourism is not new. For American education professors in the 1920s, nothing certified one’s progressive credentials like a trip to the Soviet Union. Diane Ravitch presents a vivid account in Left Back: A Century of Failed School Reforms. She describes how John Dewey, the most famous progressive educator of the era, visited Soviet schools in 1928 and returned full of admiration. He appreciated the emphasis on collectivism over individualism and the ease with which schools integrated curricula with the goals of society. One activity that he singled out for praise was sending students into the community to educate and help “ignorant adults to understand the policies of local soviets.” William Heard Kilpatrick, father of the project method, toured Russian schools in 1929. He applauded the ubiquitous use of project-based learning in Soviet classrooms, noting that “down to the smallest detail in the school curriculum, every item is planned to further the Soviet plan of society.” Educator and political activist George Counts shipped a Ford sedan to Leningrad and set out on a three-month tour, extolling the role Soviet schools played in “the greatest social experiment in history.”[i]

In hindsight these scholars seem incredibly naïve. Soviet schools were indeed an extension of the state, but as such, they served as indoctrination centers for one of history’s most monstrous regimes. Stalin’s plan for society was enforced by a huge secret police force and included the mass execution of political opponents, the forced starvation of millions of peasants, and a vast network of prison camps (gulags) erected to house slave labor.

To their credit, Dewey and Kilpatrick turned on Stalinism. Counts held on longer, even praising Stalin’s Five Year Plan as a “brilliant and heroic success.” In 1932-1933, as the first Five Year Plan transitioned into the second, an estimated 25,000 Ukrainians died daily of starvation from the forced famine that Stalin imposed on the region. Later, Counts would recognize Stalin’s schools as tools of totalitarianism, and he became, in one biographer’s words, “a determined opponent of Soviet ideology.”[ii]

Today we have a new outbreak of edutourism. American adventurers have fanned out across the globe to bring back to the United States the lessons of other school systems. Thomas L. Friedman of the New York Times visited Shanghai schools on a junket organized by Teach for All, an offshoot of Teach for America, and declared “I think I found The Secret”—The Secret being how Shanghai scored at the top on the 2009 PISA tests. After declaring, “there is no secret,” Friedman fell back on some stock explanations for high achievement, focusing in particular on changing how teachers are trained and re-organizing their work day to allow for less instruction, more professional development, and ample time for peer interaction. Elizabeth Green, author and editor-in-chief of Chalkbeat, toured schools in Japan, and she, too, embraced the idea that the key to better teaching could be informed by observing classrooms abroad. For Green, lesson study and resurrecting controversial pedagogical reforms from the 1980s and 1990s would surely boost mathematics learning. Finland has been swamped with edutourists, spurred primarily by that nation’s illustrious PISA scores. The Education Ministry of Finland hosted at least 100 delegations from 40 to 45 countries per year from 2005 to 2011.

International tests identify the highest scoring nations of the world. What’s wrong with visitors going to top performing nations and seeing with their own eyes what schools are doing? Contemporary edutourists aren’t blinded by political ideology in the same way as Dewey and his colleagues were. So what’s the problem? The short answer: Edutourism might produce good journalism, but it also tends to produce very bad social science.

The people named in the paragraphs above are incredibly smart. But they succumbed to the worst folly of edutourism. Three perils, explained below, mislead edutourists into believing that what they observe in a particular nation’s classrooms is causally related to that country’s impressive academic achievement.

Peril #1: Selecting on the dependent variable

Singling out a top achieving country—or state or district or school or teacher or some other “subject”—and then generalizing from what this top performer does is known as selecting on the dependent variable. The dependent variable, in this case, is achievement. To look for patterns of behavior that may explain achievement, a careful analyst examines subjects across the distribution—middling and poor performers as well as those at the top. That way, if a particular activity—let’s call one “Teaching Strategy X”—is found a lot at the top, not as much in the middle, and rarely or not at all at the bottom, the analyst can say that Teaching Strategy X is positively correlated with performance. That doesn’t mean it causes high achievement—even high school statistics students are taught “correlation is not causation”—only that the two variables go up and down together.

Edutourists routinely select on the dependent variable. They go to countries at the top of international assessments, such as Finland and Japan. They never go to countries in the middle or at the bottom of the distribution. If they did and found Teaching Strategy X used frequently among low performers, the positive correlation would evaporate—and they would have to seriously question whether Teaching Strategy X has any relationship with achievement.
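The logic of the last two paragraphs can be sketched in a few lines of Python. The twelve "systems" below, their scores, and their Teaching Strategy X flags are invented for illustration only; they are not real assessment results. The sketch compares what a visitor to the top performers alone would see with what the full distribution shows.

```python
from math import sqrt

# Hypothetical systems: (achievement score, uses Teaching Strategy X? 1 = yes, 0 = no).
# Invented numbers for illustration only; not real assessment data.
systems = [
    (560, 1), (550, 1), (545, 0), (540, 1),  # top performers
    (500, 1), (495, 0), (490, 1), (485, 1),  # middle of the distribution
    (430, 1), (425, 1), (420, 0), (410, 1),  # bottom of the distribution
]


def share_using_x(rows):
    """Fraction of the given systems that use Teaching Strategy X."""
    return sum(x for _, x in rows) / len(rows)


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


ranked = sorted(systems, key=lambda row: row[0], reverse=True)

# What the edutourist sees: only the four highest scorers.
top_share = share_using_x(ranked[:4])

# What a careful analyst also checks: the bottom of the distribution,
# and the correlation across every system.
bottom_share = share_using_x(ranked[-4:])
r = pearson([score for score, _ in ranked], [x for _, x in ranked])

print(f"Share using X among top performers:    {top_share:.2f}")
print(f"Share using X among bottom performers: {bottom_share:.2f}")
print(f"Correlation of X with achievement:     {r:.3f}")
```

In this made-up data, Strategy X is just as common at the bottom as at the top, so its correlation with achievement is close to zero. A visitor who saw only the top four systems would have no way of knowing that.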

Jay P. Greene concisely describes the problem in a review of Marc Tucker’s book, Surpassing Shanghai: An Agenda for American Education Built on the World’s Leading Systems. Tucker uses a “best practices” approach in the book, an analytical strategy that marks his entire career. Tucker describes what top performing nations do in education and builds an agenda based on the practices that he thinks made the nations successful.

Here’s Greene’s critique:

The fundamental flaw of a “best practices” approach, as any student in a half-decent research-design course would know, is that it suffers from what is called “selection on the dependent variable.” If you only look at successful organizations, then you have no variation in the dependent variable: they all have good outcomes. When you look at the things that successful organizations are doing, you have no idea whether each one of those things caused the good outcomes, had no effect on success, or was actually an impediment that held organizations back from being even more successful. An appropriate research design would have variation in the dependent variable; some have good outcomes and some have bad ones. To identify factors that contribute to good outcomes, you would, at a minimum, want to see those factors more likely to be present where there was success and less so where there was not.[iii]

Jay Greene is right. “Best practices” is the worst practice.

Peril #2: Small, non-random samples

Typically, visitors to schools see what their hosts arrange for them to see. If the host is a governmental agency responsible for school quality, whether a national ministry or a local school administration, it has a considerable amount of time, effort, public funds, and political prestige wrapped up in a particular set of policies. Policy makers are not indifferent to the impressions that visitors take away from school visits, any more than the military is indifferent to the impressions reporters take away from visits to bases or the battlefield. Outside observers should be skeptical that the schools or classrooms they visit are representative, and that a handful of schools can ever serve as a proxy for an entire nation. Some visitors might try to see randomly selected schools, but more than likely they will be steered to a pre-selected set. The desire to present a rosy picture to outsiders need not be the only motive. There is also the practical matter that schools must be prepared to receive a group of observers. Schools have work to do and visitors can be a distraction.

One way to check for representativeness is to compare edutourists’ observations to data collected from larger samples that have been drawn scientifically so as to be representative. In a previous Chalkboard post, I critiqued Elizabeth Green’s reporting on math instruction in Japanese and American schools. The kids she saw in Japanese classrooms were happily engaged in mathematics: boisterous, energetic, with arguments abounding about solutions to problems. In the United States, by contrast, she saw dull classrooms where children unhappily practiced procedures. The stark contrast Green painted is refuted by decades of survey data from the two countries. Instructional differences do exist, but they don’t appear to be related to achievement. As for joy of learning, there is a mountain of evidence that American kids enjoy learning math more than Japanese kids, evidence collected from large, random samples of students of different ages and grades. That evidence should be trusted over observations conducted in a small number of non-randomly selected settings.
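A small simulation shows why a handful of host-selected classrooms is a poor proxy for a whole system. Everything in it is an assumption made for illustration: the 1,000 synthetic "engagement" ratings, the choice of five showcase classrooms, and the random sample of 200. None of it is real survey data.

```python
import random

random.seed(0)  # fixed seed so the sketch is repeatable

# Hypothetical engagement ratings for 1,000 classrooms in one country.
# Synthetic values for illustration only; not real survey data.
population = [random.gauss(50, 10) for _ in range(1000)]


def mean(values):
    """Arithmetic mean of a list of numbers."""
    return sum(values) / len(values)


# A host-arranged tour, modeled here as the five highest-rated classrooms.
showcase = sorted(population, reverse=True)[:5]

# A scientifically drawn sample: 200 classrooms chosen at random.
random_sample = random.sample(population, 200)

print(f"All classrooms:       {mean(population):.1f}")
print(f"Five showcase rooms:  {mean(showcase):.1f}")
print(f"Random sample of 200: {mean(random_sample):.1f}")
```

The showcase average sits far above the true average, while the random sample lands close to it. Real tours are not necessarily limited to the very best classrooms, so the gap here is an upper bound on the distortion rather than an estimate of it.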

Peril #3: Confirmation bias

Diane Ravitch explains how John Dewey was misled in the 1920s: “Like so many other travelers to the Soviet Union both then and later, Dewey saw what he wanted to see, particularly the things that confirmed his vision for his own society.” This is called confirmation bias, the tendency to see what confirms an observer’s prior expectations.

Thomas Friedman is not an education expert, so he relied on experts to guide his thinking, and his visit, when he observed schools in Shanghai. Experts such as Andreas Schleicher of the OECD and Wendy Kopp of Teach for America are quoted in his column as pointing him towards Shanghai’s teaching reforms to explain the municipality’s sky-high PISA scores. Those reforms have never been evaluated using rigorous methods of program evaluation. It’s a shame that Friedman overlooked the role that China’s social policies play in boosting Shanghai’s PISA scores. In particular, he overlooked the Chinese hukou, an internal passport system that culls migrant children from Shanghai’s student population as they approach the age for PISA sampling, fifteen years old.

Poor migrants from rural villages flock to China’s big cities for jobs. The hukou system rations public services in China, including education. Hukous were originally issued to families based on their place of residence in 1958. Hukou privileges are inherited, creating a huge urban-rural divide. It doesn’t matter if the child of migrants is born in Shanghai, or even if her parents were; she will still hold a rural hukou. Recent reforms have allowed migrants greater access to primary and lower secondary schools, but high schools are still largely out of reach. Kam Wing Chan, a Chinese demography expert at the University of Washington, has shown that as Shanghai children from migrant families approach high school age, their numbers in the school system drop precipitously.[iv] Migrants who do not hold a Shanghai hukou send their children back to ancestral regions, even if the kids have never set foot in a rural village. There they join an estimated 61 million children, known as “left behinds,” who never made the journey to cities in the first place.[v]

The hukou creates an apartheid system of education. Human Rights Watch and Amnesty International have condemned the hukou system for its discriminatory treatment of migrant children. It is shocking that OECD documents from its PISA experts hold up Shanghai as a model of equity that the rest of the world should emulate, praising policies for treating migrants as “our children.” Reports from OECD economists have taken the opposite position, sharply criticizing Shanghai:

The Shanghai Education Committee justifies local high schools’ refusal to admit the children of migrant workers on the grounds that “if we open the door to them, it would be difficult to shut in the future; local education resources should not be freely allocated to immigrant children.” As a result, few migrant children attend general high schools and those who do return to their registration locality find it hard to adapt and often fail to complete the course.[vi]

If Tom Friedman had talked to different experts, even different experts in the OECD, he would have left Shanghai with a very different impression of its school system.

Conclusion

The perils of edutourism discussed above—selecting on the dependent variable, relying on impressions taken from small non-random samples, and confirmation bias—corrupt key features of sound policy analysis. Impressions from a few observations do not have the same evidentiary standing as carefully collected data from a scientifically selected sample. And the statement, “I’ve been to high achieving countries and have seen with my own eyes what they are doing right” cannot substitute for research designs that rigorously test causal hypotheses.

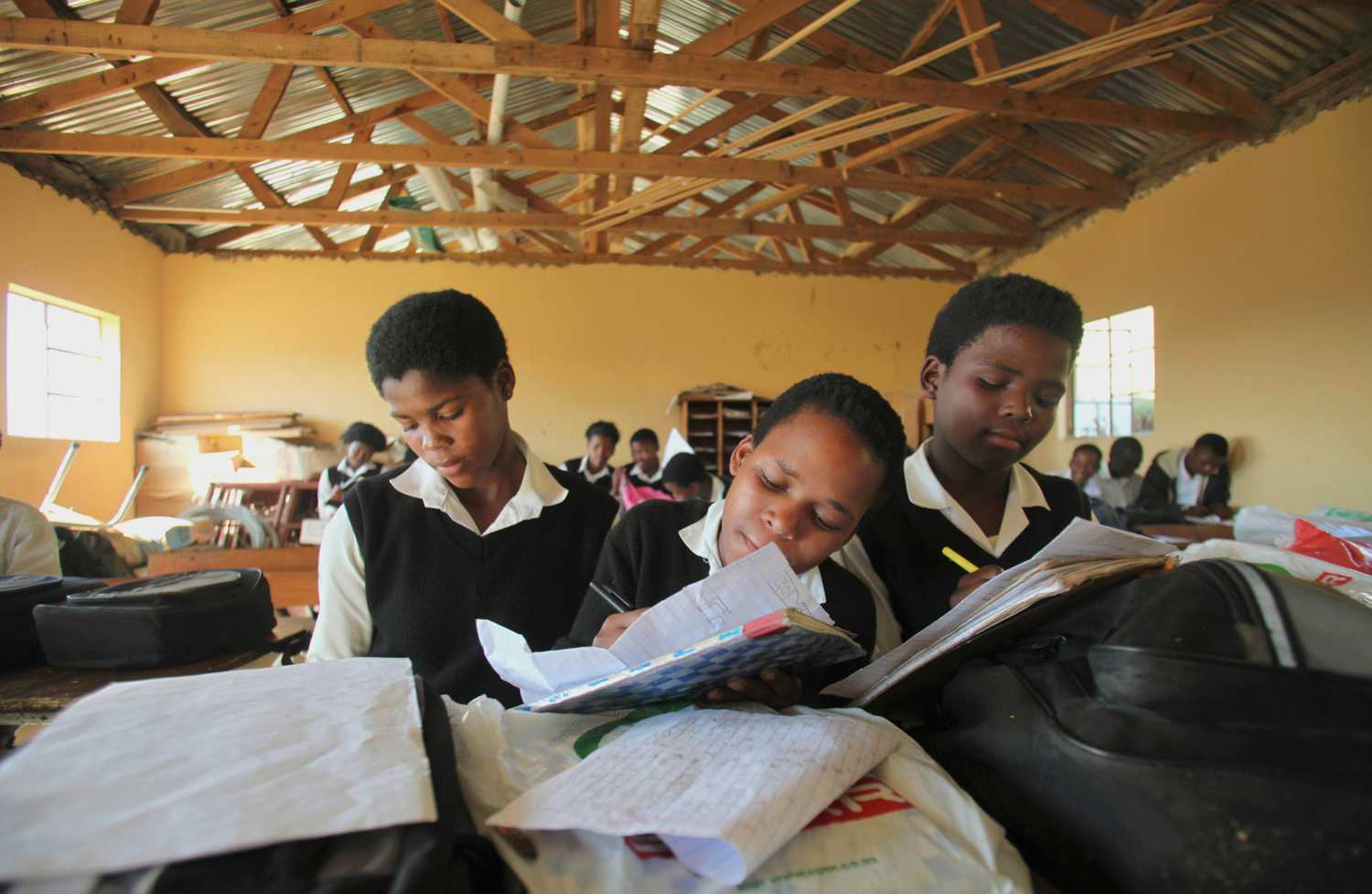

Let me end on a personal note. The critique above is not meant to discourage edutourism, but to identify its vulnerability to misuse. I have had the good fortune to visit many schools abroad during my career. One of the first opportunities was in 1985 when, as a classroom teacher, I chaperoned a group of California high school students on a tour of several Asian countries, including China, Korea, and Japan. American tourism in China was a rare event in those days. The classrooms I observed were profoundly impressive. I visited schools in Helsinki, Finland in 2005, before the hype about Finland reached today’s ridiculous levels, and witnessed wonderful teaching and learning.

I have conducted several studies and written extensively about international education but have not mentioned my personal visits to schools abroad until the sentences that you just read. It’s not that they weren’t important to me. I treasure the memories, and as a former classroom teacher, hope to add to them with future visits.

But policy analysis must be built on a sturdier foundation than personal impressions. Education policies affect the lives of hundreds, thousands, sometimes even millions of students. Those of us in the business of informing the policy process, whether by talking to policy makers or to the public about its schools, must gather strong evidence that can be generalized to large numbers of students. First-person accounts of visiting schools abroad are entertaining to read, but a careful reader will exercise skepticism when edutourists start giving advice on how to improve education.

[i] Diane Ravitch, Left Back: A Century of Failed School Reforms (Simon & Schuster, 2000): pp. 202-218. Also see The Later Works of John Dewey, Volume 3, 1925 – 1953: 1927-1928 / Essays, Reviews, Miscellany, and Impressions of Soviet Russia (Southern Illinois University Press, November 1988).

[ii] Gerald Lee Gutek, George S. Counts and American Civilization: The Educator as Social Theorist (Mercer University Press, June 1984).

[iii] Jay P. Greene, “Best Practices Are the Worst,” Education Next, vol. 12, no. 3 (Summer 2012). http://educationnext.org/best-practices-are-the-worst/

[iv] Kam Wing Chan, Ming Pao Daily News, January 3, 2014, http://faculty.washington.edu/kwchan/ShanghaiPISA.jpg.

[v] Li Tao, et al. They are also parents: A Study on Migrant Workers with Left-behind Children in China (Beijing: Center for Child Rights and Corporate Social Responsibility, August 2013).

[vi] OECD Economic Surveys: China 2013 (OECD Publishing, March 2013): pp. 91-92.
