Higher education’s gatekeepers are well aware of lopsided K-12 systems that disadvantage many of America’s youth and contribute to inequities in selective college admissions. Many have embraced holistic admissions processes that consider a host of factors beyond high school GPA, test scores, and the rigor of high school coursework. These processes aim both to widen the set of factors that signal applicants’ potential and fit at an institution and to evaluate students more fairly in the context of their educational and environmental opportunities.

To address opportunity gaps and improve equity in admissions, many selective colleges in the U.S. consider the backgrounds of applicants as part of their holistic admissions process. However, background information is usually neither standardized nor available for all applicants. Furthermore, since many selective colleges are flooded with thousands of applications from students who live and learn in settings that can be unfamiliar to admissions staff, applicants may be evaluated without full consideration of their background. Because such applicant information is often incomplete, applicants from higher-opportunity backgrounds may ultimately have a leg up in the admissions process.

Landscape: A Tool that Puts Applicants in Context

In a paper recently published in Educational Evaluation and Policy Analysis, we investigated whether providing colleges with new or better information about each applicant’s background can improve equity in selective college admissions. Our analysis examined whether the chances of admission and enrollment changed for applicants after selective colleges gained access to a new web-based tool called Landscape. The College Board developed Landscape to provide admissions staff with standardized information on educational disadvantage at the neighborhood and high school level for each student who applies.

Two main components of Landscape are the neighborhood and high school challenge indicators. These are provided: (i) at the neighborhood level, which is defined by a student’s census tract, and (ii) at the high school level, which is a roll-up of the census tracts of college-bound seniors at a high school. Applicants from the same census tract share the same neighborhood data and indicators; applicants from the same high school share the same high school data and indicators. The challenge indicators are placed on a 1-100 scale that reflects comparative percentiles. A higher value on the scale indicates a greater level of challenge related to educational opportunities and outcomes compared to other neighborhoods/high schools in the United States.[1]

To estimate how this information influenced the admission decisions of colleges and the enrollment decisions of students, we compared outcomes at colleges that piloted Landscape across four admission cycles: the three cycles before each college gained access to Landscape and the tool’s first cycle of use. Our results are based on the admission decisions of 43 selective institutions for over 3.7 million applicants who applied to attend in the 2014-2015 to 2019-2020 school years.

The Chances of Admission Increased for Applicants from High-Challenge Backgrounds After Landscape Adoption

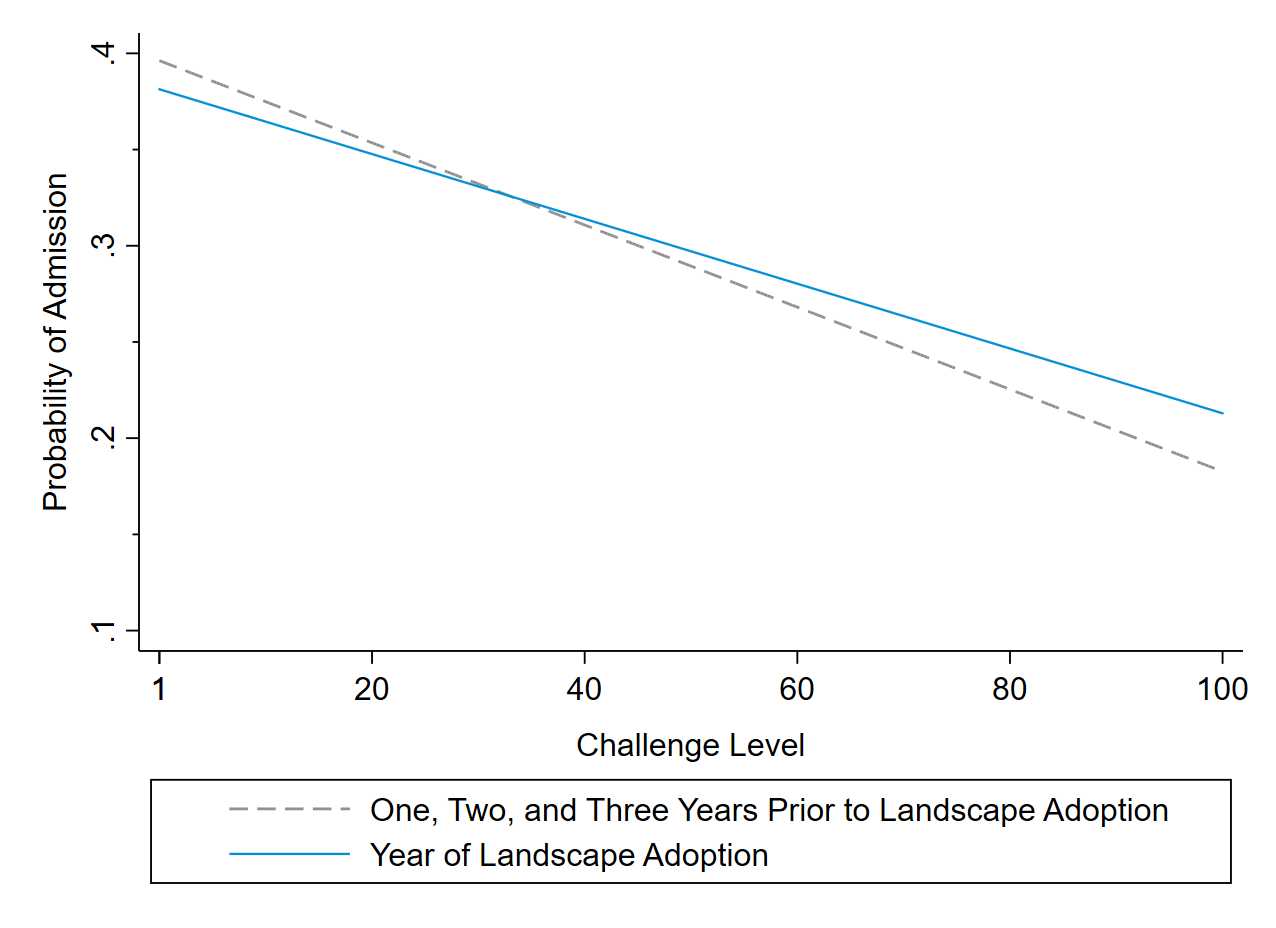

As shown in Figure 1, incorporating Landscape into the admissions process is associated with a large increase in the chances of admission for applicants from more challenging backgrounds. On average, applicants from the most challenging backgrounds experienced a five-percentage-point increase in the probability of admission in the year of Landscape adoption. This amounts to a 25 percent increase relative to otherwise similar applicants who applied the previous year.

Figure 1. Students from more challenging backgrounds are more likely to be admitted upon Landscape tool adoption.
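
The two figures quoted above, a five-percentage-point gain and a 25 percent relative gain, are linked by simple arithmetic. The sketch below backs out the baseline admission probability they jointly imply. That baseline (roughly 20 percent) is not reported in this brief; it is inferred from two rounded numbers and should be read as an approximation only.

```python
# Illustrative arithmetic only: the baseline is inferred from the two rounded
# figures in the text and is not a number reported by the study.

def implied_baseline(pp_increase: float, relative_increase: float) -> float:
    """Baseline probability (in percent) implied by an absolute gain in
    percentage points and the matching relative gain expressed as a fraction."""
    return pp_increase / relative_increase

pp_increase = 5.0         # percentage-point gain in admission probability
relative_increase = 0.25  # the same gain expressed as a 25 percent relative increase

baseline = implied_baseline(pp_increase, relative_increase)
after_adoption = baseline + pp_increase

print(f"Implied baseline admission probability: {baseline:.0f}%")
print(f"Implied probability after Landscape adoption: {after_adoption:.0f}%")
```

The distinction matters when reading the results: a percentage-point change is an absolute difference between two probabilities, while a percent change scales that difference by the starting level.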

Even though applicants from high-challenge backgrounds were much more likely to gain admission to pilot institutions after the introduction of Landscape, most applicants have relatively low challenge levels. Thus, the overall composition of admitted students changed only modestly after tool adoption. On average, these selective pilot institutions admitted 2.4 percent fewer applicants from lower-challenge (bottom-tercile) backgrounds and 8.7 percent more applicants from higher-challenge (top-tercile) backgrounds after gaining access to Landscape.

The Chances of Enrollment Did Not Change for Applicants from High-Challenge Backgrounds After Landscape Adoption

In contrast to the change in admission chances, the likelihood of applicants from higher-challenge backgrounds enrolling at pilot institutions did not change after Landscape was used in the admissions process. In fact, because the probability of admission increased for students from high-challenge backgrounds while the probability of enrollment stayed the same, the yield rate for high-challenge applicants decreased after colleges gained access to the tool. In other words, the additional students from high-challenge backgrounds who were admitted in the year of Landscape adoption were less likely to enroll than otherwise similar students who were admitted before Landscape was used in the admissions process.
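
The yield logic above can be made concrete with a small worked example. Every count below is a hypothetical chosen for illustration (1,000 high-challenge applicants, 200 versus 250 admitted, 80 enrolled in both years); none is an estimate from the study. The example also assumes enrollment is exactly unchanged, which is a simplification of the finding that it did not change detectably.

```python
# Hypothetical counts for illustration only; none of these are study estimates.

def yield_rate(enrolled: int, admitted: int) -> float:
    """Share of admitted applicants who go on to enroll."""
    return enrolled / admitted

applicants = 1000        # hypothetical high-challenge applicants in each year

admitted_before = 200    # 20% admitted before Landscape (assumed)
admitted_after = 250     # 25% admitted after Landscape (assumed)
enrolled_before = 80     # 8% of applicants enroll before (assumed)
enrolled_after = 80      # enrollment assumed exactly unchanged

yield_before = yield_rate(enrolled_before, admitted_before)
yield_after = yield_rate(enrolled_after, admitted_after)

# Yield among only the additional admits, under the same simplifying assumption.
extra_admits = admitted_after - admitted_before
extra_enrollees = enrolled_after - enrolled_before
marginal_yield = extra_enrollees / extra_admits

print(f"Admit rate: {admitted_before / applicants:.0%} -> {admitted_after / applicants:.0%}")
print(f"Yield rate: {yield_before:.0%} -> {yield_after:.0%}")
print(f"Yield among the additional admits: {marginal_yield:.0%}")
```

Under these assumptions, the admit rate rises while the yield rate falls, because the same number of enrollees is spread over a larger admitted pool.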

Although Landscape did not change the chances of enrollment across the full sample of pilot colleges, at nine of the 43 institutions in the study, the probability of enrollment increased by 4-10 percentage points for applicants from the most challenging backgrounds after the tool’s introduction. Survey results from admissions leaders at partner colleges indicated that most of these nine institutions used Landscape to inform institutional scholarship decisions.

The Implications for Diversifying Selective Colleges

Several policy insights emerge from the findings in our study. First, better information on applicant background can modestly offset opportunity-based admission inequities at selective colleges. Such inequities stem from components like college essays and other application materials that are strongly related to family, school, and neighborhood resources.

Second, achieving more diversity at selective colleges requires diversifying the pool of qualified applicants. Tools like Landscape can help colleges identify qualified applicants from underrepresented backgrounds only after they have applied; such tools do nothing to diversify the applicant pool itself. More effective recruitment efforts and more equitable K-12 academic preparation remain the cornerstones of shifting the overall racial and socioeconomic composition of admitted students at selective colleges.

Third, and consistent with other research, our findings suggest that socioeconomic-based admission practices and tools may not be effective at increasing racial diversity in higher education. While there is an association between race and class, neither is an accurate proxy for the other. Increasing both racial and socioeconomic diversity across selective colleges is likely to require explicit consideration of both race and class in the admissions process.

Lastly, the findings in our study show that diversifying the admitted class does not guarantee greater diversity on campus. Matriculation barriers after admission are also a challenge to diversifying selective colleges. Financial barriers, in particular, may prevent admitted students from historically marginalized groups from attending selective colleges. Thus, in addition to tools like Landscape, complementary policies and practices that make attendance at selective colleges more affordable and attractive are likely needed to convince admitted students from underrepresented backgrounds to attend.

Footnotes:

1. Details about the College Board’s Landscape tool are available here.

The authors are or were previously employed by the College Board, a mission-driven not-for-profit organization that connects students to college success and opportunity. Landscape is a free tool produced by the College Board for colleges and universities. The authors receive no financial benefit from the tool’s adoption or use, and the College Board did not have editorial control over the contents of this research brief or the accompanying article published in Educational Evaluation and Policy Analysis.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).