This report from The Brookings Institution’s Artificial Intelligence and Emerging Technology (AIET) Initiative is part of “AI Governance,” a series that identifies key governance and norm issues related to AI and proposes policy remedies to address the complex challenges associated with emerging technologies.

In the early 1970s, the fledgling credit card industry routinely and shortsightedly held cardholders liable for fraudulent transactions, even if their cards had been lost or stolen. In response, Congress passed the 1974 Fair Credit Billing Act to limit cardholder liability. This protection increased public trust in the new payment system and spurred growth and innovation. Because they could no longer just pass fraud losses on to cardholders, payment networks devised one of the first commercial applications of neural networks to detect out-of-pattern card usage and reduce their fraud losses.

As the example above shows, smart regulation that gets out in front of emerging technology can protect consumers and drive innovation. In the last several decades, however, policymakers have forgotten this beneficial side effect of regulation, preferring to give industry players free rein to deploy emerging technologies as they see fit.

The grim results of that laissez-faire philosophy are all around us today in the form of a still-growing backlash against tech companies. The public darkly suspects that these companies are interested primarily in promoting their own dominance, not in dealing with the harmful consequences. As a result, policymakers at the state and local levels are beginning to consider bans on AI applications such as facial recognition. The path forward is neither deregulation nor prohibition, but smart, proactive regulation that establishes a framework for both public protection and innovation.

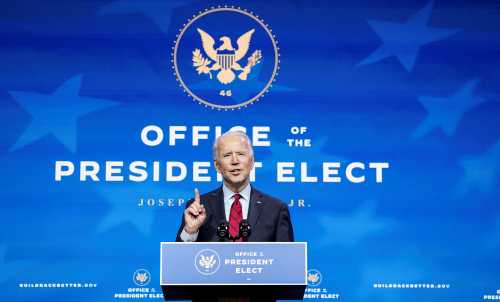

The White House AI guidance has good and bad news

The White House recently released guidance for the regulation of AI applications, establishing a framework that future rulemaking or legislation can build upon. The good news is that the administration is committed to a sectoral approach. Since AI is just a collection of statistical techniques that can be used throughout the economy, it makes no sense to have a federal AI commission to enforce one-size-fits-all rules. The White House report wisely encourages sectoral regulators to formulate rules for the AI applications within their jurisdiction. In a recent op-ed, former White House official R. David Edelman makes a similar point about not regulating AI as if it were a single thing.

Unfortunately, the report also perpetuates the out-of-date, hands-off approach. It encourages regulators to think of their activity as something that holds innovation back. Regulators are told that they must “avoid regulatory or non-regulatory actions that needlessly hamper AI innovation and growth.” Regulation is seen as a cost, a hindrance, a delay, or a barrier, to be accepted reluctantly and only as a last resort.

The idea that measures such as transparency, accountability, and fairness might promote AI growth and innovation is foreign to this framework. But in today’s world, the real task for AI regulators is to create a rules structure that both protects the public and promotes industry innovation—not to trade off one against the other.

New legislation is needed

Many AI applications cry out for before-the-fact legislation, not just application of existing rules. When Illinois passed its Artificial Intelligence Video Interview Act last year, some commentators thought it was overreacting to science fiction speculations. But the law, which established requirements for notice, consent, and explanations when employers use AI to analyze videos of job applicants, is already behind the curve. A host of companies, such as HireVue, are already using AI video analysis to score job applicants.

Employment screening is riddled with insular, clubby judgments that perpetuate a uniform workplace rather than finding talented or creative types. Companies are right to look for fairer and more accurate algorithmic screening techniques.

Still, except for the new Illinois state law, AI hiring algorithms are devoid of consumer protections. Vendors provide neither validity tests to show that these techniques detect traits relevant to job performance, nor disparate impact assessments to reveal potential discriminatory effects. Employers can turn job applicants down on the basis of these screenings without ever having to explain the basis for these adverse actions.

Policymakers used to know what to do when faced with such a promising emerging technology: They would throw a regulatory net around it to provide for growth and consumer protection. When computerized credit bureaus began to spread in the late 1960s, Congress got ahead of the emerging technology and put in place the 1970 Fair Credit Reporting Act, which established consumer-protection rights and shielded the bureaus from defamation suits. The industry expanded rapidly, but consumers remained safe. Passing a national law now to regulate AI-driven employment tests might similarly provide win-win benefits to AI firms, employers, and job applicants.

The backlash against facial recognition

The troublesome experience with facial recognition shows what can happen when companies rush AI applications to market without a regulatory safety net. Tests at the National Institute of Standards and Technology have demonstrated that the technology on the market now has discriminatory effects. Nevertheless, local law enforcement agencies have been using the technology with almost no public scrutiny. The latest such story concerns widespread law enforcement access to Clearview’s trove of (illegally obtained!) photos in pursuit of lawbreakers, with agencies apparently oblivious to the civil liberties risks involved.

As a result of this rush to market, facial recognition technology is in trouble both here and abroad. Privacy and civil liberties groups have urged a suspension of federal government use of facial recognition systems, pending further review. Scholars have called for a ban, and some states and cities have already implemented partial bans.

A ban might throw out the baby with the bathwater. But if the only alternative is after-the-fact regulation to correct whatever mistakes turn up, a ban or moratorium might make sense. In a welcome, if belated, development, key industry participants have come out in favor of a proactive regulatory framework.

Proactive regulation is needed

Machine learning is the “most important general-purpose technology of our era.” The calls for modest regulation that lets industry take the lead belong to a failed regulatory philosophy, one whose natural experiment over the past several decades has come up short. AI is too important and too promising to be governed in a hands-off fashion, waiting for problems to develop and then trying to fix them after the fact.

It is time to return to the way we used to regulate emerging technologies. Industry leaders like Google CEO Sundar Pichai have recently recognized the advantages of proactive, sector-by-sector regulation of AI applications. Thoughtful, far-sighted policymakers, like those in the 1970s who regulated and jump-started new payment systems and credit bureaus, need to set the rules and priorities for this vital technology in a way that protects consumers and provides for innovation and growth.

The Brookings Institution is a nonprofit organization devoted to independent research and policy solutions. Its mission is to conduct high-quality, independent research and, based on that research, to provide innovative, practical recommendations for policymakers and the public. The conclusions and recommendations of any Brookings publication are solely those of its author(s), and do not reflect the views of the Institution, its management, or its other scholars.

Microsoft provides support to The Brookings Institution’s Artificial Intelligence and Emerging Technology (AIET) Initiative, and Google provides general, unrestricted support to the Institution. The findings, interpretations, and conclusions in this report are not influenced by any donation. Brookings recognizes that the value it provides is in its absolute commitment to quality, independence, and impact. Activities supported by its donors reflect this commitment.
