In a series of papers, the authors have explored comparative differences in the design, deployment, and governance of artificial intelligence (AI) technologies among countries. Our previous papers have explored how different countries viewed their adoption of AI, their relative spending on technology versus human capital, and what the United States needs to do to win the AI race. In our most recent paper, we shifted our analysis to focus on the commonalities of different plans across countries. We wanted to determine if culturally similar countries adopted similar approaches to addressing AI. In that paper, we uncovered relatively few similarities across culturally similar countries and speculated that this might be due to the relative immaturity of AI.

However, we did uncover startling differences between how Western nations and China were approaching AI. In the West, countries, led by the U.S., are largely focused on the dangers of AI and are working hard to put appropriate “guardrails” in place so that the technology is properly managed from the outset. By contrast, China is almost exclusively focused on research and development (R&D) and is devoting very little energy to constraining possible negative outcomes from AI development. As a result, China, already in the lead on AI, can further extend its lead while the U.S. and other countries build guardrails.

In this paper, we continue to look for similarities in how countries approach different aspects of AI development, examine which countries are most similar (and most dissimilar) in their approaches, and then link the resulting clusters to national AI performance.

As with our recent blog post, we have included the 34 countries that have produced and published a national AI strategy: Australia, Austria, Belgium, Canada, China, Czechia, Denmark, Estonia, Finland, France, Germany, India, Italy, Japan, South Korea, Lithuania, Luxembourg, Malta, Mexico, the Netherlands, New Zealand, Norway, Poland, Portugal, Qatar, Russia, Serbia, Singapore, Spain, Sweden, the U.A.E., the U.K., Uruguay, and the U.S.

In our previous paper, we studied data management, algorithmic management, AI governance, R&D capacity development, education capacity development, and public service reform capacity development. For the current paper, we have retained the first three strategy aspects, grouped the three forms of capacity development into a single index, and added separate industry and public services measures. In the current study, we therefore examine the following six aspects that are part of each strategic AI plan:

- Data management refers to how the country envisages capturing and using the data derived from AI. Data management is composed of six sub-elements: data exchange between governmental entities, data exchange with other stakeholders, data exchange with other nations, exchange regulations, data privacy, and data security.

- Algorithmic management refers to a country’s awareness of algorithmic issues or algorithmic ethics. There are four components to algorithmic management: ethics, bias, transparency, and trust.

- AI governance refers to the inclusivity, transparency, and public trust in AI and the need for appropriate oversight. AI governance involves AI security, regulations, social inequality impact, risks, intellectual property rights protection, and interoperability.

- Capacity development refers to the sources that each country is going to use to develop its AI. There are 14 sources to develop these AI skills: multisector R&D, R&D funding, R&D institutes, primary and secondary education sources, higher education, lifelong learning, vocational, SMEs and startups, tax incentives, procurement, business model innovation, pilot projects, and attracting experts.

- Industries reflect private sector industries that are targets of the national AI effort. There are eight industries that are mentioned in the strategic plans: financial, manufacturing, defense, technology, energy and natural resources, agriculture, health care, and tourism.

- Public services refer to the administrative functions of public service that AI is intended to address. In total, 11 foci of public service were mentioned in the plans: immigration, courts, public safety, revenue/tax, education, environmental, defense, transportation, health care, and ICT.

Taken together, these six elements broadly reflect how a country approaches AI governance and management (data management, algorithmic management, and AI governance), how it intends to develop its AI skills (capacity development), and how it deploys or focuses its AI efforts (industries and public services). As such, these six elements provide a snapshot of several different phases of AI for each country.

In the analysis for this paper, we cluster the countries of interest to determine which use similar approaches. First, we identify which components of each element are present. For example, algorithmic management is made up of four components (ethics, bias, transparency, and trust), and each country is rated on the presence or absence of discussion of each. For this element, Canada and China are low in all four components, whereas India and the U.S. are high in all four. Second, we run the analysis for each element individually. With each analytic run, we determine whether each country is high, medium, or low on that element and then group similar countries. In algorithmic management, for instance, there is a high cluster where all four components are present, a low cluster where none are present, and a medium cluster where only bias and transparency are present. The distance of each country from each cluster is calculated, and the country is assigned to the cluster it is closest to. Finally, we offer some commentary on why clustering can help improve AI governance.
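The assignment step can be illustrated with a minimal Python sketch for the algorithmic management element. It assumes presence or absence is coded as 1 or 0 in the order ethics, bias, transparency, trust, and it uses Hamming distance (the number of components on which a country and a cluster profile disagree) because the article does not name its distance metric. The helper names `hamming` and `assign_cluster` and the tie-breaking rule are hypothetical; the Canada, China, India, and U.S. vectors follow the ratings stated above, and Country X is invented.

```python
from typing import Dict, Tuple

# Component order for the algorithmic management element.
COMPONENTS = ("ethics", "bias", "transparency", "trust")

# Ideal cluster profiles described in the text (1 = component discussed in the plan).
PROFILES: Dict[str, Tuple[int, ...]] = {
    "high": (1, 1, 1, 1),
    "medium": (0, 1, 1, 0),  # only bias and transparency present
    "low": (0, 0, 0, 0),
}


def hamming(a: Tuple[int, ...], b: Tuple[int, ...]) -> int:
    """Count the components on which two presence/absence vectors disagree."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x != y for x, y in zip(a, b))


def assign_cluster(ratings: Tuple[int, ...]) -> str:
    """Return the nearest ideal profile; ties go to the profile listed first."""
    return min(PROFILES, key=lambda name: hamming(ratings, PROFILES[name]))


ratings = {
    "Canada": (0, 0, 0, 0),
    "China": (0, 0, 0, 0),
    "India": (1, 1, 1, 1),
    "U.S.": (1, 1, 1, 1),
    "Country X (hypothetical)": (1, 1, 0, 1),  # ethics, bias, trust only
}
for country, vector in ratings.items():
    print(f"{country}: {assign_cluster(vector)}")
```

For 0/1 vectors, Hamming distance equals squared Euclidean distance, so both give the same nearest cluster; the metric choice matters mainly for ties, which this sketch resolves by taking the first profile in `PROFILES` order. For example, a plan covering ethics, bias, and transparency but not trust is equidistant from the high and medium profiles and is assigned to high.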

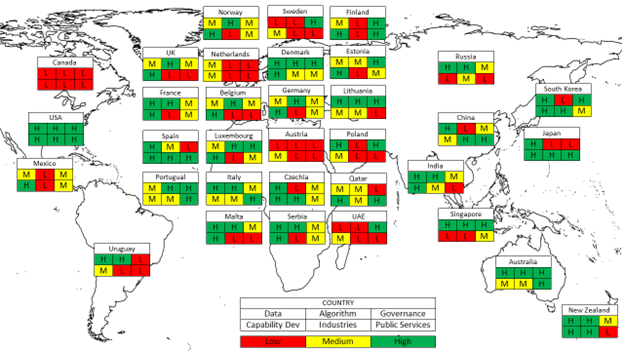

Our clusters ranged from all high (U.S.) to all low (Canada). Only three sets of countries had identical profiles (Belgium and the U.K., France and Serbia, and Sweden and the U.A.E.). Our overall map of these is shown below.

The authors’ visualization of their categorization of six attributes of 34 countries’ national AI strategies as High, Medium, or Low.
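Finding countries with identical profiles amounts to grouping them by their full tuple of six High/Medium/Low ratings. A minimal Python sketch, using invented profiles rather than the authors' coded data and a hypothetical helper named `identical_profiles`:

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Order of the six elements in each profile tuple.
ELEMENTS = ("data management", "algorithmic management", "AI governance",
            "capacity development", "industries", "public services")


def identical_profiles(profiles: Dict[str, Tuple[str, ...]]) -> List[List[str]]:
    """Group countries whose High/Medium/Low ratings match on every element."""
    groups: Dict[Tuple[str, ...], List[str]] = defaultdict(list)
    for country, profile in profiles.items():
        if len(profile) != len(ELEMENTS):
            raise ValueError(f"{country}: expected {len(ELEMENTS)} ratings")
        groups[profile].append(country)
    # Keep only profiles shared by two or more countries.
    return [sorted(members) for members in groups.values() if len(members) > 1]


# Invented profiles for illustration only.
example = {
    "Country A": ("H", "M", "H", "M", "L", "M"),
    "Country B": ("H", "M", "H", "M", "L", "M"),
    "Country C": ("L", "L", "L", "L", "L", "L"),
    "Country D": ("H", "H", "H", "H", "H", "H"),
}
print(identical_profiles(example))  # [['Country A', 'Country B']]
```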

For data management, our high cluster features countries that are high in data exchange between governmental entities and with other stakeholders, have exchange regulations, and focus on data privacy and security. These countries, including China, India, Japan, Spain, and the U.S., are low only on data exchange with other nations. In other words, these countries see the value in data (as evidenced by the internal attention they pay to it) but are reluctant to share it with other countries, given its value and sensitivity. They likely view their data as country-specific assets that must be guarded as such.

The medium cluster features countries that are high in data exchange between agencies, with other stakeholders, and with other nations; have data exchange regulations; and are concerned about privacy but not about security. These countries, which include Belgium, Finland, Mexico, and the U.K., are more likely than the high cluster countries to share data internationally but do not place a strong emphasis on data security. In short, they see the value of data exchange but do not view data as something that needs to be strongly safeguarded.

The low cluster features countries that are low in everything other than data privacy. These countries include Austria, Canada, Sweden, and the U.A.E. Unlike the other countries in our analysis, they seem to have little interest in data management, though they do see the need to protect their data. We speculate that these countries are significantly less mature in their AI development and do not yet see the full value of data.

For algorithmic management, the high cluster, which includes Australia, Russia, and the U.S., is high in all four sub-elements (ethics, bias, transparency, and trust), reflecting a clear eye towards the value of algorithms that are known and understood. While most countries in this cluster are well known for valuing these principles, we were surprised to find Russia here: given its authoritarian tendencies, we would expect Russia to be less interested in ensuring that algorithms are transparent and free from bias.

The medium cluster, which includes only Estonia, Qatar, and Spain, is high in bias and transparency and low in ethics and trust. The small size of this cluster suggests that most countries either strongly value algorithmic management or do not value it at all.

The low cluster, which includes Austria, Canada, and China, is low in all sub-elements. Given their non-democratic orientation, we expected to find countries like Russia and China in this cluster, and indeed we found China. However, we were fairly surprised that some countries well known for concern about such issues, like Canada, Sweden, and Japan, did not fall into the high cluster, as we would expect their adherence to democratic principles to lead them to take steps to guard against abuses of AI that can threaten democracies.

There were no sub-elements that all countries agreed were important. Setting aside the small number of countries in the medium cluster, it appears that while many countries appreciate the problem of algorithmic management, others have little interest in or concern about it.

For AI governance, the high cluster includes expected countries like Germany, the U.K., and the U.S., but also some unexpected ones like China and Russia. This cluster is high in each of the sub-elements. While not exclusively so, it appears to be made up of AI-experienced countries that understand the value of strong AI governance but perhaps do not intend to fully follow it. This suggests a standards-driven orientation.

The medium cluster, which includes Australia, Poland, and Sweden, is high in security, regulations, social inequality impact, and risks but is low in intellectual property rights protection and interoperability. This suggests a control orientation.

The low cluster, which includes Austria, Canada, and Japan, is high only in regulations, suggesting either an interest in just basic governance or a lack of investment in governance in general.

For capacity development, the first cluster, which includes India, Mexico, and the U.S., plans to make use of all of the capacity development sources except business model innovation. The second cluster, which includes Australia, China, and Poland, plans to use seven of the sources and is generally focused on education as opposed to research and development. The third cluster, which comprises Canada, Russia, and Singapore, is primarily focused on R&D and is interested in providing only basic education.

There is considerable commonality across the clusters. Several sub-elements, including multisector R&D, R&D funding, primary/secondary education, and higher education, were found in all the plans. Countries likely view these as relatively traditional ways to launch a new innovation, in this case AI. Business model innovation, which is the changing of the underlying structure of the entity, was not seen in any strategic plan; we speculate that this is because AI is still too young for countries to understand how to apply business model innovation to it.
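Identifying sources that appear in every plan, or in none, is a set intersection and a set difference over each country's coded sources. A minimal Python sketch, assuming each plan is coded as a set of source labels; the labels follow the capacity development list given earlier, while the two plans and the helper `common_and_absent` are hypothetical, not the authors' coding.

```python
from typing import Dict, Set, Tuple

# Capacity development sources as listed in the article.
ALL_SOURCES = {
    "multisector R&D", "R&D funding", "R&D institutes",
    "primary/secondary education", "higher education", "lifelong learning",
    "vocational", "SMEs and startups", "tax incentives", "procurement",
    "business model innovation", "pilot projects", "attracting experts",
}


def common_and_absent(plans: Dict[str, Set[str]]) -> Tuple[Set[str], Set[str]]:
    """Return (sources present in every plan, sources present in no plan)."""
    if not plans:
        return set(), set(ALL_SOURCES)
    in_every = set.intersection(*plans.values())
    in_any = set().union(*plans.values())
    return in_every, ALL_SOURCES - in_any


# Invented plan contents for illustration only.
plans = {
    "Country A": {"multisector R&D", "R&D funding", "primary/secondary education",
                  "higher education", "pilot projects"},
    "Country B": {"multisector R&D", "R&D funding", "primary/secondary education",
                  "higher education", "tax incentives", "attracting experts"},
}
common, absent = common_and_absent(plans)
print("In every plan:", sorted(common))
print("In no plan:", sorted(absent))
```

Applied to the real coding, the intersection would include the four sources named above and the difference would include business model innovation.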

For industries, the high cluster, which includes China, Spain, and the U.S., plans to deploy AI in all industries other than defense and tourism. The medium cluster, which includes Australia, India, and Russia, is focused on technology, energy and natural resources, agriculture, and health care. The low cluster, which includes Canada, Mexico, and Sweden, does not plan to focus on any of the industries.

No country reported plans to use AI to support either tourism (which is expected, as tourism is not a typical use case for AI) or defense (which is unexpected given how much many countries, including the U.S., spend on defense AI). We have reason to question this lack of focus on defense since, at least in the U.S., as we reported in a previous article, the Department of Defense accounted for approximately 85% of total federal AI spending in 2022. We suspect that the U.S. is not an outlier in that spending approach.

For public services, the high cluster, which includes Australia, Spain, and the U.S., covered most of the public service foci in its plans, excluding only immigration, courts, revenue/taxes, and education. The medium cluster focused on health care, transportation, and ICT. The low cluster countries focused only on health care.

Common across all clusters was the absence of support for immigration and courts and the presence of health care. The low cluster was limited to health care; these countries could be viewed as social welfare states. The medium cluster also focused on infrastructure, both transportation and ICT, which could reflect a moderate growth orientation. The high cluster added energy, defense, safety, and natural resources to this base, which could reflect an all-in growth orientation that includes a defensive posture.

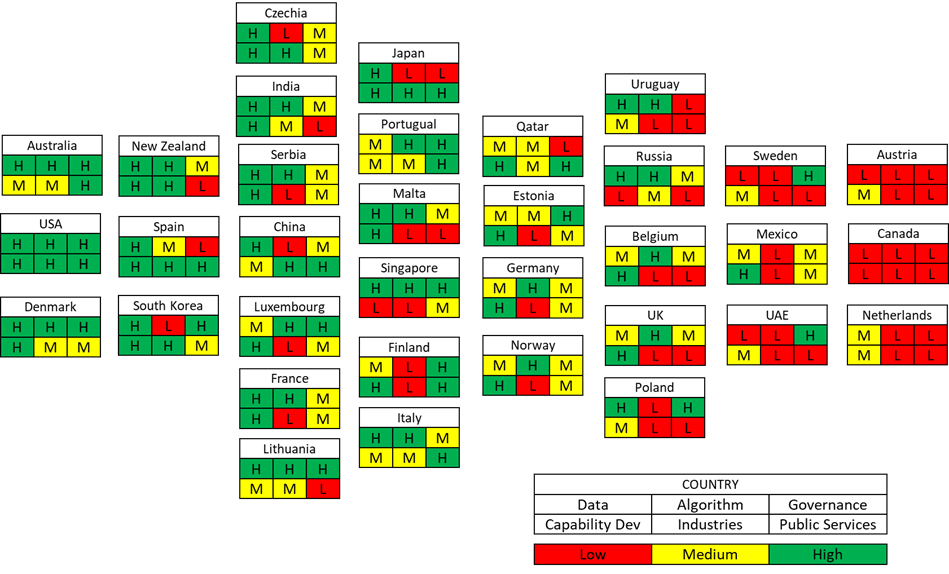

There are clear leaders and laggards in each of the categories examined, and we can see that many do not group as we might expect, such as the difference between neighbors, allies, and G7 members U.S. and Canada. Further analysis will be required to explain such differences and see how these countries evolve as AI strategies mature. Laying out the countries by degree of sophistication of plans instead of geographically, we can see who is leading and who is lagging. Understanding the differences in AI strategy maturity can allow nations to identify where they need to invest, allies they might learn from, and who their closest competitors are in the AI space.

The authors’ visualization of the national AI strategies of 34 countries grouped in descending order, from left to right, from those that are the highest in six specific attributes, collectively, to those that are the lowest.

It is clear from these initial plans, which are heavily aspirational, that each cluster provides insight into the current and future governance and technical directions that each country intends to take. For example, some countries, such as China, India, and Japan, are largely focused on the promise of AI, while others, most notably the U.S., are much more concerned with its potential perils and so are developing strategies to guard against possible dangers. There is strong justification for both approaches, but over time we expect that all countries will at least address the other dimension.

We next linked the clusters to an outcome metric by calculating the proximity of each country to the ideal cluster profiles (low, medium, and high) and then correlating that distance with the Global AI Index. This index is underpinned by 111 indicators, collected from 28 public and private data sources and 62 governments, including all of those in our sample except Serbia. The indicators are split across three pillars (Implementation, Innovation, and Investment) and seven sub-pillars (Talent, Infrastructure, Operating Environment, Research, Development, Government Strategy, and Commercial).

Within Implementation, Talent focuses on the availability of skilled practitioners in artificial intelligence solutions; Infrastructure assesses the reliability and scale of access infrastructure, from electricity and internet to supercomputing capabilities; and Operating Environment focuses on the regulatory context and public opinion on artificial intelligence. Within Innovation, Research looks at the extent of specialist research and researchers, including numbers of publications and citations in credible academic journals, while Development focuses on the development of the fundamental platforms and algorithms on which innovative artificial intelligence projects rely. Within Investment, Government Strategy gauges the depth of national governments' commitment to artificial intelligence, investigating spending commitments and national strategies, while Commercial focuses on the level of startup activity, investment, and business initiatives based on artificial intelligence. Our examination of the relationships between the clusters and AI performance, as measured by the Global AI Index, yielded three major findings.

First, within Implementation, the medium AI governance cluster was both positively correlated with Infrastructure and outperformed the high and low clusters on this dimension. All three clusters stressed regulations and social inequities; the differentiator was that the high and medium clusters stressed security, while the high cluster also stressed intellectual property and interoperability. Looking at causality from the opposite direction, we posit that having greater Infrastructure capacity already in place leads governments to focus more on security and risk in order to secure those systems. By contrast, countries with somewhat lagging Infrastructure focus on broad policies to set the conditions for Infrastructure development (the high cluster), while those with significantly lagging Infrastructure lack the technical depth to set the conditions for securing their systems (the low cluster). A complementary pattern appeared in Industries, where the medium cluster was negatively correlated with Infrastructure. The medium Industries cluster focused on energy, agriculture, and health care, while the high cluster added technology, financial, and manufacturing. We posit that the AI drivers of Infrastructure development lie in these latter sectors. In other words, a minimum existing technology base in AI in general, and in the right sectors in particular, is required to make informed policy decisions about it.

Second, again within Implementation, the high and low algorithmic management clusters were, respectively, negatively and positively correlated with Operating Environment. This suggests that countries with strong regulatory regimes and generally positive citizen views of AI need to invest less effort in policy development on AI use and controls than countries with weaker regulatory regimes and a more strident national discourse on AI. Simply put, governments that see public discontent with the advent of AI need to invest more effort in shaping the narrative.

Third, within both Innovation and Investment, we saw strong indications of governments modelling positive behaviors towards AI deployment. Specifically, the low Public Services cluster was negatively correlated with the Research, Development, Government Strategy, and Commercial sub-pillars. This suggests that governments need to lead in the adoption and funding of AI, as their leadership in this area will encourage wider public and private sector innovation and investment in AI.
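The linking step described earlier, proximity to an ideal cluster profile correlated with a Global AI Index sub-pillar, can be sketched in Python for one element and one sub-pillar: here the medium AI governance profile and Infrastructure, as in the first finding. This is a minimal sketch under assumptions the article does not state: Hamming distance as the proximity measure and Pearson correlation as the statistic. The ratings and Infrastructure scores are invented, not actual Global AI Index values, and the helpers `hamming` and `pearson` are hypothetical. Because the sketch correlates distance rather than closeness, a negative coefficient means countries nearer the profile tend to score higher.

```python
import math
from typing import Sequence, Tuple

# AI governance components, in the order the article lists them.
GOV_COMPONENTS = ("security", "regulations", "social inequality impact",
                  "risks", "IP rights protection", "interoperability")
# Medium governance profile per the article: first four present, last two absent.
MEDIUM_GOV: Tuple[int, ...] = (1, 1, 1, 1, 0, 0)


def hamming(a: Sequence[int], b: Sequence[int]) -> int:
    """Count the components on which two presence/absence vectors disagree."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(x != y for x, y in zip(a, b))


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences of at least 2 items")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


# Invented governance ratings and Infrastructure scores (NOT Global AI Index data).
ratings = {
    "Country A": (1, 1, 1, 1, 0, 0),
    "Country B": (1, 1, 1, 1, 1, 1),
    "Country C": (0, 1, 0, 0, 0, 0),
    "Country D": (1, 1, 1, 0, 0, 0),
}
infrastructure = {"Country A": 80.0, "Country B": 65.0,
                  "Country C": 40.0, "Country D": 70.0}

countries = sorted(ratings)
assert all(len(ratings[c]) == len(GOV_COMPONENTS) for c in countries)
distances = [hamming(ratings[c], MEDIUM_GOV) for c in countries]
scores = [infrastructure[c] for c in countries]
r = pearson(distances, scores)
# Negative r: countries closer to the medium profile tend to have higher scores.
print(f"distance-score correlation r = {r:.2f}")
```

Repeating this for each element, each cluster profile, and each sub-pillar would produce a grid of coefficients of the kind summarized in the three findings above.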

While the U.S. currently has one of the more complete plans, this does not mean that it is capable of fully executing it. Rather, to use a restaurant analogy, the U.S. has the most complete menu, but that does not mean all dishes (or capabilities) are equally good. In fact, there may be downsides to trying to do everything well: it likely dilutes focus on the highest priorities. Our cumulative research, including this report, has already identified China as an AI juggernaut whose investment strategy in capability development may position it for dominance, and as a near-peer of the U.S. in investment and R&D. Laggards such as Canada, Austria, and the Netherlands will likely be reinvesting in policy and capacity as they recognize the potential growth and impacts in this area, if they have not already started. While we doubt that any country will fulfill all its aspirations, what is included in and excluded from this first volley of AI plans is enlightening about the future path of AI.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).

Commentary

A cluster analysis of national AI strategies

December 13, 2023