We live in an age where big data forecasting is everywhere. Around the world, scientists are assembling huge data sets to understand everything from the spread of COVID-19 to consumers’ online shopping habits. However, as models to forecast future events proliferate, few people understand the inner workings or assumptions of these models. All forecasting systems have weaknesses, and when they are used for policymaking and planning, they can have drastic consequences for people’s lives. For this reason alone, it is imperative that we begin to look at the science behind the algorithms.

By examining one such system, we can see how the seemingly innocuous use of theories, assumptions, or models is open to misapplication.

Beginning in 2012, teams of academics from more than 10 institutions developed a system called Early Model Based Event Recognition using Surrogates (EMBERS) to forecast events such as civil unrest, disease outbreaks, and election outcomes in nine Latin American countries for the Intelligence Advanced Research Projects Activity (IARPA) Open Source Indicators (OSI) program.[1] Although it was only a research effort, it was deployed over several years and expanded beyond its initial focus on Latin America to include countries in the Middle East and North Africa.[2]

EMBERS is an events-based forecasting model. It retrieves data from sources such as Twitter, newspapers, and government reports and estimates probabilities of event types—such as civil unrest, disease outbreaks, and election outcomes—occurring in particular locations and time horizons.

While we cannot discuss the entire EMBERS architecture here, this narrative will draw attention to one of its key subcomponents, which attempts to attribute sentiment scores to the text the model ingests and processes. In other words, the artificial intelligence (AI) processing the natural language produces a score of the text’s relative emotional affect. To gauge the sentiment of the material it analyzes, EMBERS relies on a lexicon called the Affective Norms for English Words (ANEW).
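To make this dependency concrete, here is a minimal sketch of how a word lexicon such as ANEW can turn text into a sentiment score: look up each word's mean rating and average over the words the lexicon contains. This is an assumption about the general lexicon-scoring technique, not a description of EMBERS's actual implementation. The function name `lexicon_sentiment` and the values in `TOY_LEXICON` are hypothetical placeholders, not real ANEW norms.

```python
import re
from typing import Mapping, Optional

# Hypothetical pleasure (valence) values on a 1-9 scale, higher = more pleasant.
# These numbers are illustrative placeholders, NOT actual ANEW norms.
TOY_LEXICON: dict[str, float] = {
    "protest": 3.0,
    "police": 5.0,
    "peaceful": 7.5,
    "violence": 2.0,
}


def lexicon_sentiment(text: str, lexicon: Mapping[str, float]) -> Optional[float]:
    """Average the lexicon score of every token that appears in the lexicon.

    Returns None when no token matches, since the lexicon then says
    nothing about the text at all.
    """
    # \w+ matches Unicode word characters, so accented words stay whole.
    tokens = re.findall(r"\w+", text.lower())
    scores = [lexicon[t] for t in tokens if t in lexicon]
    if not scores:
        return None
    return sum(scores) / len(scores)


print(lexicon_sentiment("Peaceful protest met by police violence", TOY_LEXICON))
# → 4.375
print(lexicon_sentiment("Manifestación pacífica", TOY_LEXICON))
# → None
```

The second call shows the cultural-transfer problem in miniature: a Spanish sentence shares no words with an English lexicon, so it either receives no score or must first be translated, and translation is exactly where meaning and affect can shift.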

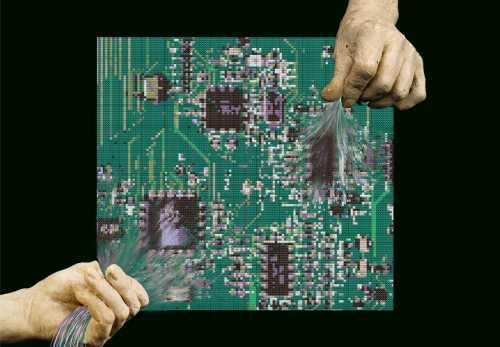

By relying on ANEW, however, the designers of EMBERS built a house of cards ready to come tumbling down at the slightest breeze of cultural difference. Let’s see why.

For more information, read Heather Roff’s November 2020 report, “Uncomfortable ground truths: Predictive analytics and national security.”

ANEW was created by Margaret Bradley and Peter Lang at the University of Florida in 1999 and was designed to assign a measure of emotional affect (how much pleasure, dominance, or excitement a particular word carries with it) to a range of words. To accomplish this, Bradley and Lang surveyed a group of college students, asking them to respond to a set of 100 to 150 English words. The students were shown the words and asked to record their reactions by filling in bubbles on a scale of 1 to 9, with corresponding figures that ranged from a smile to a frown. The ratings for each word were averaged, and the mean was used as “the sentiment” score for that word.
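The aggregation step described above can be sketched in a few lines. The sketch assumes simple per-word averaging of 1-9 ratings, as the paragraph describes; the words and ratings are hypothetical placeholders rather than ANEW data, and `word_norms` is a name chosen only for illustration. It also computes the standard deviation, to show what a lone mean can hide.

```python
from statistics import mean, stdev

# Hypothetical 1-9 ratings from five respondents per word.
# Made-up numbers for illustration, NOT ANEW survey data.
RATINGS: dict[str, list[int]] = {
    "word_a": [5, 5, 5, 5, 5],  # every respondent is neutral
    "word_b": [1, 9, 1, 9, 5],  # respondents are sharply split
}


def word_norms(ratings: dict[str, list[int]]) -> dict[str, tuple[float, float]]:
    """Return (mean, sample standard deviation) of the ratings for each word."""
    norms: dict[str, tuple[float, float]] = {}
    for word, scores in ratings.items():
        # Reject anything outside the 1-9 survey scale.
        if any(not 1 <= s <= 9 for s in scores):
            raise ValueError(f"{word}: ratings must lie on the 1-9 scale")
        norms[word] = (mean(scores), stdev(scores))
    return norms


for word, (m, sd) in word_norms(RATINGS).items():
    print(f"{word}: mean={m:.2f}, sd={sd:.2f}")
# → word_a: mean=5.00, sd=0.00
# → word_b: mean=5.00, sd=4.00
```

Both hypothetical words receive the same mean of 5, yet one reflects unanimous neutrality and the other a split between respondents at opposite ends of the scale. A sentiment score built only on the mean cannot tell these cases apart.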

How much weight do we want to put on the ANEW lexicon to determine sentiment scores for EMBERS, though?

Well, let’s look to the research design, methodology, and findings that Bradley and Lang provide. First, and most obviously, the lexicon was originally designed for English. While the lexicon can certainly be translated, those translations may not carry the same meaning, weight, or affect in different populations or dialects.[3] Indeed, several studies have shown that translations of ANEW do not yield the same scores or meanings.

Second, the experiments were conducted on introductory psychology students at the University of Florida as part of a course requirement. In short, the population used to generalize about the sentiment of populations on several continents was a group of 18- to 22-year-old students, with all the demographic, cultural, and linguistic particularities of that group. In no way were these respondents representative of all English-speaking peoples, let alone non-English speakers in the Global South.

For example, when we examine the scores for words in the ANEW lexicon, the preferences of a group of American college students are immediately on display. “Diploma” and “graduate” garner some of the highest scores in the lexicon. Other high-scoring words align with the values of Western liberal democracy, capitalism, Christianity, heteronormativity, and education.

The words provided to the students also indicate bias. The religious terms in the lexicon all refer to the Christian faith: Christmas, angel, heaven, hell, church, demon, God, savior, devil, etc. No terms in the lexicon refer to other faiths or belief systems. Gender bias also appears to be present: 12 words apply to or are associated with women (vagina, hooker, whore, wife, woman, girl, mother, rape, breast, abortion, lesbian, bride), while only five apply to men (penis, man, brother, father, boy).[4] At the very least, the lexicon appears biased in omitting corresponding words for men.
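The subgroup gaps reported in note [4] also mean that a word's single published score depends on who happened to be in the sample. When respondents are pooled, the overall mean is exactly the average of the subgroup means weighted by each subgroup's share of the sample. The sketch below uses the two pairs of means from note [4], but the female shares it loops over are hypothetical, and it does not reproduce any published ANEW score. `pooled_mean` and `SUBGROUP_MEANS` are illustrative names.

```python
# Subgroup mean pleasure ratings for two words, as reported in note [4].
SUBGROUP_MEANS: dict[str, dict[str, float]] = {
    "lesbian": {"female": 3.38, "male": 6.00},
    "whore": {"female": 1.61, "male": 3.92},
}


def pooled_mean(word: str, share_female: float) -> float:
    """Overall mean for a sample in which `share_female` of respondents are women.

    Pooling respondents yields a weighted average of the subgroup means,
    with weights equal to each subgroup's share of the sample.
    """
    if not 0.0 <= share_female <= 1.0:
        raise ValueError("share_female must be between 0 and 1")
    means = SUBGROUP_MEANS[word]
    return share_female * means["female"] + (1.0 - share_female) * means["male"]


# Hypothetical sample compositions: same word, different "sentiment" scores.
for share in (0.25, 0.50, 0.75):
    score = pooled_mean("lesbian", share)
    print(f"{share:.0%} women -> {score:.2f}")
```

Moving from a sample that is one-quarter women to one that is three-quarters women shifts the score for “lesbian” by more than a full point on the nine-point scale, with no change in the word itself.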

The old adage of “garbage in, garbage out” clearly applies, but what is most alarming is not the problems in ANEW but the fact that the designers of EMBERS decided to use the lexicon in the first place. Those designers did not take the time to investigate whether the ANEW lexicon was appropriate for their purposes, or to consider whether the multiple translations of ANEW over the years in fact revealed significant differences between cultures and populations, to say nothing of the bias already built into the survey instrument itself.

EMBERS’ system architecture may be computationally exquisite and novel in how it analyzes a large and diverse volume of data, but that may not matter if the science behind the data is dubious. The science can be dubious for a variety of reasons: the assumptions implicit in models may not be carefully considered, or causality in the social sciences, and thus prediction, may be elusive. In such cases, the analysis may at best be off and at worst provide decision-makers with incorrect information on which to base their policy interventions.

One might argue that this analysis is unfair to EMBERS or that the issue is not socially or politically significant. However, as we have seen time and again, predictive analytic systems relying on increasingly complex AI are not always accurate or correct, and they may in fact be quite harmful. For policymakers, foreign-policy analysts, and others relying on the forecasts of systems that may have serious foreign-policy implications, the stakes may be even higher, so we should be just as vigilant in examining these systems.

Heather M. Roff is a senior research analyst at the Johns Hopkins Applied Physics Laboratory, a nonresident fellow in Foreign Policy at Brookings Institution, and an associate fellow at the Leverhulme Centre for the Future of Intelligence at the University of Cambridge.

[1] Doyle, Andy, Graham Katz, Kristen Summers, Chris Ackermann, Ilya Zavorin, Zunsik Lim, Sathappan Muthiah, Patrick Butler, Nathan Self, Liang Zhao, Chang-Tien Lu, Rupinder Paul Khandpur, Youssef Fayed, Naren Ramakrishnan. 2014. “Forecasting Significant Societal Events Using the Embers Streaming Predictive Analytics System.” Big Data (Mary Ann Liebert, Inc.), Vol. 2, no. 4 (December): 185-195. Doyle, Andy, Graham Katz, Kristen Summers, Chris Ackermann, Ilya Zavorin, Zunsik Lim, Sathappan Muthiah, Patrick Butler, Nathan Self, Liang Zhao, Chang-Tien Lu, Rupinder Paul Khandpur, Youssef Fayed, Naren Ramakrishnan. 2014. “The EMBERS Architecture for Streaming Predictive Analytics.” IEEE International Conference on Big Data. Gupta, Dipak, Sathappan Muthiah, David Mares, Naren Ramakrishnan. 2017. “Forecasting Civil Strife: An Emerging Methodology.” Third International Conference on Human and Social Analytics. Saraf, Parang, Naren Ramakrishnan. 2016. “EMBERS AutoGSR: Automated Coding of Civil Unrest Events.” Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (August): 599-608.

[2] EMBERS originally looked to Argentina, Brazil, Chile, Colombia, Ecuador, El Salvador, Mexico, Paraguay, and Venezuela.

[3] In 2007, several scholars translated the ANEW lexicon into Spanish and conducted their own analysis. Like Bradley and Lang, they sampled undergraduate students, but from several Spanish universities. Their sample, however, was again limited to a particular demographic and a particular dialect, and it was heavily skewed toward women (560 women and 160 men). The authors also found “remarkable” statistical differences between the Spanish version of ANEW and the original. In short, and unsurprisingly, the Spanish and English versions differ considerably in emotional affect. Cf. Redondo, Jaime, Isabel Fraga, Isabel Padrón, Montserrat Comesaña. 2007. “The Spanish Adaptation of ANEW (Affective Norms for English Words).” Behavior Research Methods, Vol. 39, no. 3: 600-605. A later (2012) study translated ANEW into European Portuguese, paying greater attention to the linguistic representativeness of its respondents. However, this study also relied on undergraduate and graduate students. Its authors, too, found statistically significant differences between their population and those of the American and Spanish studies after translation, and in some cases they could not translate English words while retaining the same meaning. Cf. Soares, Ana Paula, Montserrat Comesaña, Ana P. Pinheiro, Alberto Simões, Carla Sofia Frade. 2012. “The Adaptation of Affective Norms for English Words (ANEW) for European Portuguese.” Behavior Research Methods, Vol. 44: 256-269. Moreover, even taken together, these two translations, both drawn from European samples, would have limited generalizability to Latin America.

[4] For example, when asked how much pleasure the word “lesbian” elicited, female students gave it a mean rating of 3.38, while male students gave it a mean rating of 6.00. Likewise, female students rated the word “whore” at 1.61, while their male counterparts rated it at 3.92.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).

Commentary

Forecasting and predictive analytics: A critical look at the basic building blocks of a predictive model

September 11, 2020