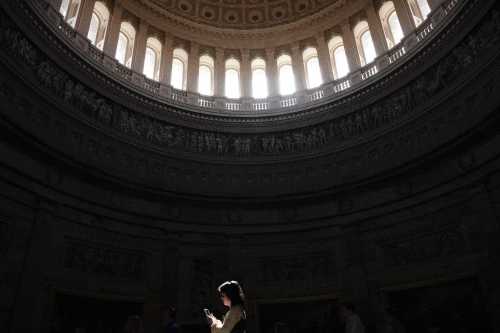

The troubling information provided by Facebook whistleblower Frances Haugen continues to generate new insights into the platform's failure to police bad actors and moderate harmful content. As a result, the broader question of whether and how the government should intervene in platform governance remains under active deliberation, with the latest in a series of Congressional hearings on the topic taking place last week.

However, in the U.S., these deliberations are unlikely to lead to action because of our strong First Amendment jurisprudence, which maintains extensive protections for both disinformation and hate speech. In addition, the unprecedented hostility that the Trump administration showed toward the news media has served as a powerful reminder of why we should be leery of any new government intervention in the media sector. That said, if, in the ultimate cost-benefit calculus, we see our commitment to First Amendment absolutism actually undermining the democracy that the Amendment is intended to protect, then perhaps some reconsideration of how we treat speech on social media may be warranted. One possible path forward in this reconsideration is to revisit how we regulate indecency in broadcasting.

Why indecency? And why broadcasting? Because broadcast indecency represents the only instance in which the Federal Communications Commission (FCC) and the Supreme Court have agreed to create a category of speech that, from a regulatory and legal standpoint, exists exclusively within the confines of a specific medium. Unlike obscenity, for example, which is an unprotected category of speech regardless of how it is disseminated, indecency receives less protection only within the context of broadcasting. There are no federal restrictions on indecency in any other communicative context. This is reflected in the FCC's definition of indecency as “material that, in context, depicts or describes sexual or excretory organs or activities in terms patently offensive as measured by contemporary community standards *for the broadcast medium*” (emphasis added).

What relevance might a category of speech developed for an old and increasingly irrelevant medium have for the issue of social media regulation? From a substantive standpoint, not much. Indecency, as defined above, is at best on the periphery of concerns around social media, where issues of hate speech and disinformation are proving to be the most profound and impactful problem areas.

What is potentially relevant though—and perhaps worth considering—is the underlying notion that one or more distinctive categories of speech could be carved out exclusively for the social media context, in the same way that indecency is a regulatable category of speech exclusively within the broadcast context. Could we imagine a regulatory environment in which hate speech and disinformation continue to go largely unregulated except within the specific and narrow context of social media? This is a question I have been exploring as part of a larger research program that has been investigating whether regulatory frameworks and rationales developed within the traditional media sector might have useful lessons that can guide our approach to social media platforms.

In keeping with the model of media regulation that has been developed in the U.S., this kind of special treatment of speech within the exclusive context of a particular medium must be accompanied by a valid rationale. That is, what makes a medium sufficiently distinctive that it merits differential treatment from a regulatory standpoint? In the broadcast context, the two rationales that have been most relevant to indecency are: 1) that broadcasters use a scarce public resource (the broadcast spectrum) and, as public trustees of this resource, are thus subject to lower levels of First Amendment protection; and 2) that broadcasting is “uniquely pervasive,” and this pervasiveness provides a rationale for treating broadcasting differently than other media. It is worth noting that the Supreme Court has rejected subsequent efforts by regulators to apply the indecency standard to telephony, cable TV, and the Internet.

I have argued at length elsewhere that a compelling case can be made that, like broadcasters, social media platforms use a public resource (in this case, aggregate user data) and so should be treated as public trustees, much as broadcasters must abide by certain conditions in exchange for their access to the collectively owned broadcast spectrum. Whether social media platforms are “uniquely pervasive” in the way that the FCC and the Supreme Court once considered broadcasting to be is a separate question. The two media do share key criteria that the Supreme Court has relied on in characterizing broadcasting as uniquely pervasive: both are free, widely available, and easily accessible, and both operate in such a way that unexpected, accidental exposure to harmful content is always a real possibility.

Imagine for a moment that one of these rationales for treating social media differently from other media did gain traction. Might it then make sense to allow the government to play a more active oversight role over certain categories of speech in this narrow, but profoundly significant, context? This more active role need not involve the kind of case-by-case adjudication and intervention associated with broadcast indecency. Rather, as Mark MacCarthy and others have advocated, it could involve some degree of accountability to a federal authority for meeting independently measured effectiveness thresholds in systems for policing disinformation and hate speech.

Certainly, the problems of hate speech and disinformation are not unique to social media. Hate speech and disinformation emanate from traditional and partisan news outlets, politicians and political organizations, activists, and bad actors of various types. Yet social media represent an important mechanism by which the voices of all of these stakeholders are amplified, as platforms such as Facebook, Instagram, YouTube, and Twitter have evolved into some of the most pervasive, unconstrained, and engaging media outlets the world has ever known.

The bottom line is that a precedent has been established for constructing less-protected categories of speech exclusive to the confines of a particular medium. As we continue to struggle with the destabilizing repercussions of disinformation and hate speech, should policymakers and the courts consider creating explicit definitions of these speech categories that operate exclusively within the social media context? Could doing so provide a path forward for some form of intervention by which the government is better able to hold these platforms accountable for the content that they host and disseminate? This could certainly be considered an extreme, and ultimately misguided, approach to the problems of hate speech and disinformation, but in light of the magnitude of the challenges that we currently face, it may at least be a conversation worth having.

Meta, the parent company of Facebook and Instagram, and Google are general, unrestricted donors to the Brookings Institution. The findings, interpretations, and conclusions posted in this piece are solely those of the author and not influenced by any donation.

Commentary

Broadcast indecency may offer a path forward for social media regulation

December 15, 2021