Federal statistics are critical at every stage and level of policymaking, yet their production faces significant challenges, including declining survey response rates, constrained budgets, and growing expectations for timely, granular data. The public servants who produce these federal statistics have responded to these challenges by innovating in several ways, such as integrating administrative and private sector datasets and modernizing metadata. However, the introduction of new methods can create new problems. Considering the importance of innovation to federal statistics as well as the potential risks, it is critical to create a set of metrics that show what success looks like.

What makes for a successful innovation?

Before evaluating the success of a particular innovation, we must agree on what success means. Any proposed innovation should be judged against four core values of proper data governance.

- Accuracy ensures that society has high-quality data and statistics that meaningfully represent the data subjects. Inaccurate data can lead to flawed analyses, poor decisions, and misrepresentation.

- Accessibility addresses what data and statistics are available, to whom, and under what conditions. Data should be accessible to those who need it for legitimate purposes.

- Usability means data must be understandable, actionable, and fit for purpose. Even high-quality data can be ineffective if users cannot interpret or apply it.

- Privacy safeguards sensitive information from illegitimate access or misuse. Numerous laws and regulations—from local to federal—require data curators to protect privacy rights and maintain public trust.

These four values pull against each other. For example, making data more accessible can increase privacy risks. Stronger privacy protections can reduce usability. Any honest evaluation of a proposed innovation must grapple with these trade-offs directly, rather than optimizing for one value while quietly sacrificing another.
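One way to keep a value from being quietly sacrificed is to require every assessment to record an explicit judgment on all four values. The Python sketch below is a hypothetical illustration of that idea, not an established scoring framework. The class name `InnovationAssessment`, the -2 to +2 scale, and the example scores are all assumptions made for this example.

```python
from dataclasses import dataclass

# The four core values named in the text.
CORE_VALUES = ("accuracy", "accessibility", "usability", "privacy")


@dataclass
class InnovationAssessment:
    """Hypothetical record of how a proposed innovation shifts each core value.

    Scores are changes relative to the status quo on an illustrative -2..+2
    scale (negative = worse, positive = better); the scale is an assumption.
    """

    name: str
    effects: dict[str, int]

    def __post_init__(self) -> None:
        # Refuse partial assessments: every value must be scored explicitly,
        # so a loss on one value cannot simply be left out of the record.
        missing = [v for v in CORE_VALUES if v not in self.effects]
        unknown = [v for v in self.effects if v not in CORE_VALUES]
        if missing or unknown:
            raise ValueError(f"missing={missing}, unknown={unknown}")

    def gains(self) -> list[str]:
        return [v for v in CORE_VALUES if self.effects[v] > 0]

    def losses(self) -> list[str]:
        return [v for v in CORE_VALUES if self.effects[v] < 0]

    def has_tradeoff(self) -> bool:
        # A trade-off exists when improving one value comes at another's expense.
        return bool(self.gains()) and bool(self.losses())


# Hypothetical example: releasing more detailed microdata.
detailed_release = InnovationAssessment(
    "release more detailed microdata",
    {"accuracy": 0, "accessibility": 2, "usability": 1, "privacy": -2},
)
print(detailed_release.gains())         # ['accessibility', 'usability']
print(detailed_release.losses())        # ['privacy']
print(detailed_release.has_tradeoff())  # True
```

Rejecting incomplete records is the design choice that matters here: an evaluator cannot score an innovation on accessibility alone and leave privacy blank.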

Innovation at every stage of the data life cycle

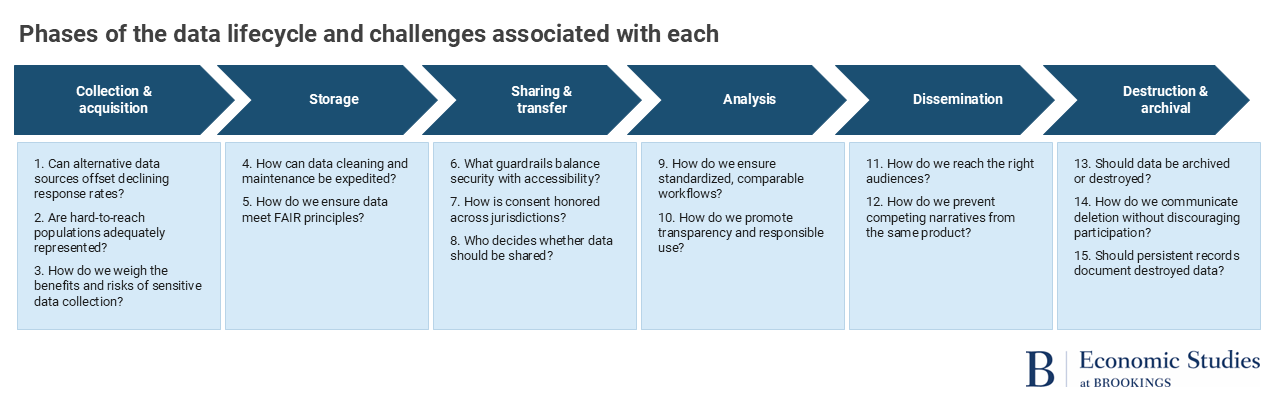

Almost everyone benefits from federal data and statistics, often without realizing it. Yet most public discussion of federal statistics focuses only on the analysis or dissemination of data and rarely considers the other parts of the data life cycle. Each stage of that life cycle presents particular challenges, and each challenge is also an area where potential solutions or innovations can be applied. Evaluating an innovation therefore means looking across the full data life cycle, which spans six phases (see Figure 1), and asking how that innovation addresses a challenge within a given phase.

Take, for example, the decennial census, one of the most important data collection efforts in the United States. Collecting data (phase 1) on such a massive scale requires numerous and varied data collectors, from the methodologists who design the census to the field agents who go door-to-door verifying responses. Next comes storage and access (phase 2), where the arrangements depend on the type of data requested. Some datasets are then transferred and shared (phase 3) with other entities to create different data products, or stored in other secure facilities such as ICPSR.

The analysis and dissemination stage (phases 4 and 5) is the responsibility of data users and practitioners. For example, census data are used by the Department of Education to determine Title I funding for schools and school districts with high percentages of students from low-income families (see, for example, Moulton and Luong, 2023; National Academies of Sciences, Engineering, and Medicine, 2015). Finally, archival and destruction (phase 6) occur when data are no longer actively used. Decennial census data are archived and made publicly accessible after 72 years, as seen most recently with the release of the 1950 Census.

Across these six phases, there are many challenges to grapple with: declining survey response rates, the difficulty of integrating administrative and private-sector data that were never designed to work together, the absence of consistent metadata standards, ambiguities about consent when data cross jurisdictions, and more. Recent innovations address the collection and use of federal data, but issues in the archival and destruction phase remain largely unaddressed. For example, there is a lack of policies that persist beyond individual staff members and adapt as the data ecosystem evolves, such as systematic checks on whether data should continue to be archived.
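A systematic archival check could be as simple as a scheduled job that flags datasets due for a keep-or-destroy decision. The Python sketch below assumes a hypothetical inventory format, a three-year review interval, and made-up dataset names. It illustrates how a retention rule written down in code can outlive any one staff member; it does not describe how any agency actually manages its archives.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical policy parameter: every archived dataset gets a fresh
# keep-or-destroy decision at least this often. The three-year value
# is an assumption for illustration.
REVIEW_INTERVAL = timedelta(days=3 * 365)


@dataclass
class ArchivedDataset:
    name: str
    last_reviewed: date
    retention_end: Optional[date] = None  # None means no scheduled end date


def review_queue(datasets, today):
    """Return (name, reason) pairs for datasets that need a retention decision."""
    queue = []
    for ds in datasets:
        if ds.retention_end is not None and today >= ds.retention_end:
            queue.append((ds.name, "retention period ended"))
        elif today - ds.last_reviewed >= REVIEW_INTERVAL:
            queue.append((ds.name, "periodic review overdue"))
    return queue


# Hypothetical inventory, checked on this article's publication date.
inventory = [
    ArchivedDataset("survey_2015_microdata", last_reviewed=date(2020, 1, 15)),
    ArchivedDataset("pilot_linkage_file", last_reviewed=date(2024, 6, 1),
                    retention_end=date(2025, 12, 31)),
    ArchivedDataset("admin_extract_2023", last_reviewed=date(2025, 3, 1)),
]
for name, reason in review_queue(inventory, today=date(2026, 5, 5)):
    print(f"{name}: {reason}")
```

Run as of May 5, 2026, the job flags `survey_2015_microdata` as overdue for periodic review and `pilot_linkage_file` because its retention period has ended; `admin_extract_2023` was reviewed recently enough to pass.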

A closer look: Re-Engineering Statistics Using Economic Transactions (RESET)

Among the recent innovations in federal economic statistics is the Re-Engineering Statistics using Economic Transactions (RESET) project. A collaboration among the University of Maryland, the University of Michigan, and the U.S. Census Bureau, RESET “…aims to provide the architecture for re-engineering official economic statistics—literally to build key measures such as GDP and consumer inflation from the ground up” using transaction-level data. The project is motivated by the persistent challenges of declining survey response rates, rising costs for traditional data collection methods, and the difficulty of keeping up with changes in the economy.

One aspect of the RESET project is to reconsider how key economic indicators, such as Personal Consumption Expenditures (PCE) and the Consumer Price Index (CPI), should be measured. These measures come from a highly decentralized system: the Bureau of Economic Analysis produces PCE by relying on the Census Bureau for retail sales data and on the Bureau of Labor Statistics, which itself produces the CPI, for price data. Because of this reliance on diverse sources, inconsistencies often arise when the data are integrated, since the datasets differ in aggregation levels and sampling rates. This makes micro-level analysis nearly impossible and industry-level analysis difficult at best.
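To see why mismatched sampling rates matter, consider a made-up case in which sales are reported monthly but prices only quarterly. The Python sketch below can combine the two only after collapsing the monthly figures into quarters, so any real (price-adjusted) measure loses the monthly detail. The series and numbers are hypothetical, and this is a simplified illustration, not BEA's actual PCE methodology.

```python
from collections import defaultdict

# Made-up inputs from two separate programs: monthly nominal sales and a
# quarterly price index (base period = 100).
monthly_sales = {
    "2025-01": 100.0, "2025-02": 110.0, "2025-03": 120.0,
    "2025-04": 105.0, "2025-05": 115.0, "2025-06": 125.0,
}
quarterly_price_index = {"2025Q1": 100.0, "2025Q2": 104.0}


def month_to_quarter(month: str) -> str:
    """Map 'YYYY-MM' to 'YYYYQn'."""
    year, mm = month.split("-")
    return f"{year}Q{(int(mm) - 1) // 3 + 1}"


def to_quarterly(series: dict[str, float]) -> dict[str, float]:
    """Sum monthly flows into quarters, the finest level both sources share."""
    totals: dict[str, float] = defaultdict(float)
    for month, value in series.items():
        totals[month_to_quarter(month)] += value
    return dict(totals)


quarterly_sales = to_quarterly(monthly_sales)

# Deflate nominal sales by the price index to get sales at constant prices.
# Movements between months within a quarter are no longer visible here.
real_sales = {
    q: quarterly_sales[q] / (quarterly_price_index[q] / 100.0)
    for q in quarterly_sales
}
print(quarterly_sales)  # {'2025Q1': 330.0, '2025Q2': 345.0}
```

The same logic applies to aggregation levels: if prices exist only for a broad product category, sales for narrower products must be summed to that category before they can be deflated.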

RESET’s solution is to replace this system with a unified process that draws directly on digital transaction data we already have (addressing Challenges 1, 4, and 5). This approach enables the simultaneous collection of price and quantity information directly from the original source—the businesses and individuals themselves. RESET’s work can increase the consistency, granularity, and frequency of data measured and produced (Challenges 2, 4, and 9).
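Transaction records make this possible because each record carries both what was bought and what was paid. The Python sketch below, which assumes a hypothetical record layout and made-up transactions, aggregates records into item-by-period cells and derives a unit-value price (total spending divided by total quantity) alongside the quantity itself. It is one simple way to get prices and quantities from the same source, not necessarily the method RESET uses.

```python
from collections import defaultdict
from typing import NamedTuple


class Transaction(NamedTuple):
    """Hypothetical transaction-record layout; real scanner or card data differ."""
    item_id: str
    period: str
    quantity: float
    expenditure: float


def price_and_quantity(transactions):
    """Aggregate transactions into (item_id, period) cells.

    Returns {(item_id, period): (unit_value_price, total_quantity)}, where the
    unit-value price is total expenditure divided by total quantity.
    """
    spend = defaultdict(float)
    qty = defaultdict(float)
    for t in transactions:
        key = (t.item_id, t.period)
        spend[key] += t.expenditure
        qty[key] += t.quantity
    # Skip cells without positive net quantity (e.g., returns offsetting purchases).
    return {key: (spend[key] / qty[key], qty[key]) for key in spend if qty[key] > 0}


sample = [
    Transaction("milk_1gal", "2025-01", 2.0, 7.00),
    Transaction("milk_1gal", "2025-01", 1.0, 3.25),
    Transaction("milk_1gal", "2025-02", 3.0, 10.80),
    Transaction("bread_loaf", "2025-01", 4.0, 10.00),
]
for (item, period), (price, quantity) in price_and_quantity(sample).items():
    print(f"{item} {period}: price {price:.2f}, quantity {quantity:g}")
```

Because price and quantity are derived from the same records, their product reproduces spending in every cell. Unit values treat every unit of an item as identical, however, so changes in product quality still have to be handled separately.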

To assess feasibility (and to address Challenges 9 and 10), the RESET team examined multiple methodological approaches for measuring prices and real quantities using transaction data from NielsenIQ and the NPD Group (now Circana), including accounting for product quality with hedonic methods and for item substitution with superlative price indexes. The team also demonstrated that machine learning techniques can support implementation at scale.
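A superlative index such as the Fisher ideal index uses quantity information from both periods, which lets it reflect consumers substituting away from items whose prices rose. The Python sketch below computes a Fisher index as the geometric mean of the Laspeyres (base-period quantities) and Paasche (current-period quantities) indexes over items observed in both periods. The function name and example data are hypothetical, and the sketch omits hedonic quality adjustment; it is not RESET's code.

```python
from math import sqrt


def fisher_index(p0, p1, q0, q1):
    """Fisher ideal price index from period 0 to period 1.

    Each argument maps item_id -> price or quantity. Only items present in
    all four maps (a matched-item sample) enter the calculation.
    """
    items = sorted(set(p0) & set(p1) & set(q0) & set(q1))
    if not items:
        raise ValueError("no items observed in both periods")
    # Laspeyres: cost of the base-period basket at new vs. old prices.
    laspeyres = sum(p1[i] * q0[i] for i in items) / sum(p0[i] * q0[i] for i in items)
    # Paasche: cost of the current-period basket at new vs. old prices.
    paasche = sum(p1[i] * q1[i] for i in items) / sum(p0[i] * q1[i] for i in items)
    # Fisher: geometric mean of the two.
    return sqrt(laspeyres * paasche)


# Hypothetical two-item example: the price of "a" doubles and buyers shift toward "b".
prices_0 = {"a": 1.0, "b": 1.0}
prices_1 = {"a": 2.0, "b": 1.0}
quantities_0 = {"a": 10.0, "b": 10.0}
quantities_1 = {"a": 5.0, "b": 15.0}
print(round(fisher_index(prices_0, prices_1, quantities_0, quantities_1), 3))  # 1.369
```

In the example, the Laspeyres index (1.5) ignores the shift toward item `b`, while the Paasche index (1.25) weights it fully; the Fisher index falls between them at about 1.37.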

Despite the advances the RESET team has made, challenges remain. More data means more information to process (Challenges 1, 4, and 5) and a need to ensure that sharing and transferring that information is safe and secure (Challenges 1, 4, 5, 6, 7, and 8). Building this capacity adds short-term costs and runs counter to efforts to reduce expenses, but such investments may ultimately prove cost-effective by delivering higher-quality data and more robust economic statistics in the long term. RESET is currently working on a demonstration project to implement its methods and data in actual production.

What’s next?

The federal statistical system faces real pressure to change from a variety of internal and external forces. But change without a clear standard for evaluation, such as change that optimizes for cost savings or technical novelty at the expense of accuracy, accessibility, privacy, and usability, risks compromising the very federal statistical system that these data innovations are meant to support.

Any proposal to reform how federal data are collected, stored, shared, analyzed, or disseminated should take a holistic approach that spans the entire data life cycle and is grounded in the core data governance values of accuracy, accessibility, privacy, and usability. Other recent innovations, such as the Urban Institute and SOI’s Safe Data Technologies project, Census’s NEWS, and UChicago’s CID, demonstrate that progress is possible when agencies and academics work together to develop modern infrastructure, adopt adaptive policies, and foster collaboration across disciplines.

Not all proposed innovations will address every challenge or core value, but accurately assessing their benefits and costs will help identify gaps and create a roadmap for future innovation. A sustained commitment to modernizing data infrastructure, developing workforce skills and investing in educational resources, and upholding responsible data governance is essential to ensuring that federal economic statistics remain robust, trusted, and responsive to the evolving needs of society.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).

Commentary

What does successful innovation in headline economic statistics look like?

May 5, 2026