A viral blog post recently compared the current moment in artificial intelligence (AI) to February 2020, just before COVID-19 turned the world upside down. While that analogy may be flawed, it’s hard to ignore recent developments with AI coding agents,1 which prompted our colleague Andy Hall to post that AI agents are coming for the social sciences “like a freight train.”

Here’s why we agree: in just the past month, we’ve used AI coding agents to do the following: (1) transform a minimal implementation of a method to analyze heterogenous treatments and treatment effects into a fully functional, modular, and well-documented R package in a just over a day; (2) produced a twenty-page summary for our own edification of business responses to the Russian invasion of Ukraine based on materials found on this website, including data visualizations, statistical analyses, and a complete replication file in under an hour; (3) developed the infrastructure, data collection, analysis, and reporting pipeline for a pilot study examining what kinds of political prompts LLMs refuse to address across five languages and five frontier models.

Yes, those of us working in the academy have been wrestling with what generative AI means for teaching for a few years now, and lots of us have begun to integrate generative AI into routine tasks like summarizing papers and even coding assistance. But the current moment feels like it could be quite different, and we suspect many things in the academy are about to change.

What it is and how it works

For readers who have not yet used AI coding agents—such as Anthropic’s Claude Code, Google’s Jules and Antigravity, and OpenAI’s Codex—we begin with a conceptual overview of this new approach to conducting research. Working with an AI coding agent is in some ways similar to interacting with a chatbot such as Claude or ChatGPT. Like a chatbot, you can describe what you want done and respond to output with further requests, assessments, tweaks, etc. But whereas chatbots respond in a web interface, an AI coding agent can create and edit all sorts of different outputs: R and Python scripts for data analysis and scraping; LaTeX, Markdown, and MS Word docs for literature reviews and paper drafts; and databases and datasets (or even just .csv files) for storing data scraped or collected online. Agents can also execute those files, identify errors or potential improvements, and iterate, with heavy or light supervision depending on your setup.

While this workflow was designed for tasks related to writing code (e.g., data collection, data analysis, data visualization), you can also give instructions regarding literature reviews, writing papers, and critiquing (reviewing) papers, including the one it is drafting. You can also set it up to do multiple tasks at once—this is what is meant by “agentic coding”. The term was originally coined as a means of using generative AI to write software, but the concept can be applied just as easily to other facets of research.

As we write this, scholars are experimenting with all sorts of ways to most effectively deliver these instructions, including extensive recursive processes where you provide an overarching set of instructions that subsequent AI-written instructions then reference. Others (including us) are essentially developing personalized instruction templates on which we then iterate depending on the task, including key instructions that need to be included in all cases; these instructions are then placed in a text or markdown file that you instruct an AI coding agent to read. And of course, it is possible to provide instructions to agents the same way you would with any other chatbot, in an interactive format using natural language.

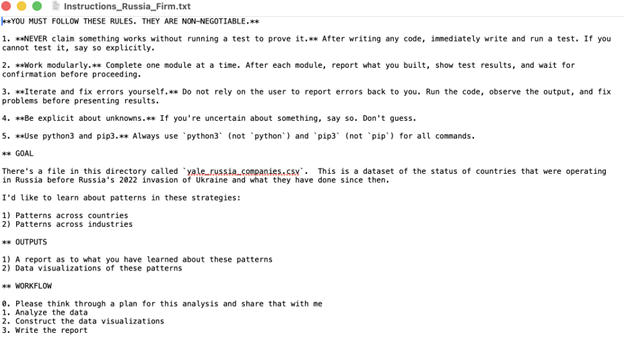

We are going to eschew giving specific advice here about what needs to be included in any instruction file, but here is an example of a very simple instruction file that was provided to Claude Code, one of several AI coding agents, to produce the aforementioned 20 page summary of cross-country and cross-industry patterns in the decisions made by companies choosing whether or not to exit Russia after the February 2022 Russian invasion of Ukraine. We prompted Claude Code as follows:2

Following the provision of instructions like these, Claude Code provides a detailed plan for the project, which can include searching online for research papers (for literature reviews) and data, building out folder structures for managing files, writing Python and R files for data collection, analysis, and visualization, and all sorts of written output (LaTex/MathTex/Markdown/TikZ documents) for reports, documentation, and more. The user then has the option to approve the plan or suggest modifications. We want to emphasize that this is an iterative process in which we rely on the AI coding agents to look over what has been produced, and handle new questions, instructions, requests for validation, etc.

Clear-eyed downsides

While going from zero to 60 for projects involving writing code is undoubtedly faster with an agent, it may not be universally faster for all tasks. A recent study from METR, a research non-profit dedicated to understanding AI capabilities and risks, found that using AI for fixing small bugs in a familiar codebase actually slowed down professional developers, though a recent update to this research shows the opposite is now true in the wake of model improvements and agents (and in fact they had trouble recruiting coders who would do the non-AI “control” tasks!). Setting subtle bug fixes aside, using an AI coding agent is going to mean spending a lot more time reviewing AI output, whether it’s code or prose. What’s more is that it is possible that our technical skills might atrophy if AI is always at the tip of the spear, or at the very least we may not be as prepared to power through a difficult set of scientific research tasks when we’ve become accustomed to an agent doing so much of the heavy lifting.

Along with supervisory overhead, there are other risks associated with agentic AI use. First, while frontier AI companies have incentives to keep their agentic products as secure as possible, the level of access needed for maximal agentic productivity involves serious tradeoffs. One of us (Messing), in the course of asking an agent to clean up some code and data files, found that it deleted half of the data while “editing” the file (though the data were eventually recovered). And while there are reports that agents can incidentally ingest security keys stored in local directories—which we suspect AI companies will eventually mitigate—a more stubborn security risk arises from users who pass agents security keys out of convenience. Furthermore, AI coding agents often interrupt their workflows to ask for permissions, which users can skirt by passing a flag instructing it to “dangerously skip” permissions.

The risks are not hypothetical. For example, OpenClaw, an AI agent that was itself created using agentic AI, became an overnight phenomenon at the end of January 2026. The tool exposed dozens of critical security issues—including unsafe storage of sensitive data that would expose thousands of users in the event of just one malicious incident.

Importantly, the longer you use an AI agent for a single task in a single session, the more the quality of the agent’s output can suffer. This happens long before you reach the end of an AI agent’s context window—the maximum number of tokens of which it can keep track. There are a wide array of solutions emerging to help deal with this, most of which are aimed at offloading as much of the planning and logging as possible. These range from clear prompting instructions and software that offloads task tracking to complete systems that, among other things, prompt models to log their output and key decisions.

Of course, it is also worth considering agentic AI’s energy consumption relative to other digital and real-world activities. Prompting a large language model uses more energy than web search, and AI agents use more resources still. Estimates from a Google AI paper are that the “median Gemini Apps text prompt uses less energy than watching nine seconds of television (0.24 Wh [watt-hours]).” Other estimates are much higher (2.9 Wh), but even so, the UK’s non-profit National Centre for AI concluded that streaming Netflix for one hour consumes more energy than 25 LLM prompts. AI’s carbon emissions are difficult to estimate, but it has been suggested that an average prompt from ChatGPT emits about as much CO2 as using a laptop for a minute or driving a sedan about five feet. AI agents entail significantly higher energy consumption, however, and while scientific estimates are hard to come by, one anecdotal estimate suggests a coding session might use on the order of 25-50 Wh (akin to toasting bread for three minutes), while a full day might use over 1 kilowatt-hour (kWh), which is on the order of the power consumed running a dishwasher.

What may be possible

Before we consider the likely disruptive implications for academic researchers, we want to underline some of the possibilities that this technology might make possible. First, we are now far less limited by a lack of software engineering resources/talent to implement methods and/or build large data collections. Most social scientists tend not to be talented software engineers or have the kind of access to these sorts of resources that are often found in industry jobs, so this is especially likely to be beneficial to the community—the code one of us (Messing) wrote with an agent for his R package was much closer to industrial quality code than the collection of R scripts created when writing the original paper with co-authors.

Of course, academics are generally not directly incentivized to create relics for the public beyond their research papers. However, AI agents now make it far easier to create interactive websites and dashboards to facilitate public engagement with research, which could be just the boon to public engagement that funders and administrators have long sought.

There’s a world where the science is more robust as well. Researchers could iterate quickly on pre-analysis plans with an agent to assist and provide feedback. And with fewer constraints on data analysis projects, researchers could use coding agents to validate their own analyses, perform robustness checks they might have previously skipped, and more easily replicate the work of other researchers. Researchers who struggle to explicate formal models could use AI agents to improve the quantitative rigor of their work. Those without cutting edge quantitative training might be able to create more robust quantitative work. And higher quality code tends to be more robust to errors that scientists make across the board.

Of course, with this new hammer, there’s a danger that researchers only try to answer questions with nails—it will be so much faster and cheaper to produce research using data sets that have already been or can easily be collected. Already, research is finding that the use of machine learning and non-agentic AI increases productivity but narrows researcher focus. You can see the problem with this if we take it to the extreme—consider a world where hundreds of researchers are using agentic AI to study a single data set. Not only will the scope and generalizability of the sum total of their findings be limited, but the odds that the research community “finds” signal in noise, call it “collective p-hacking,” may increase as well.

So, what does this all mean for social science research when we have an endless supply of low-cost engineering/data science resources? It is possible that we are approaching a fundamental paradox for the profession: agentic AI may simultaneously turbo-charge our research productivity—and even unleash whole new types of research streams that were hitherto impossible to implement or even imagine, perhaps especially in the hard sciences—while at the same time undermining the institutional structure that we have in place to support academic research.

Let’s start with short-term implications. While it’s still an open question whether AI-assisted research can be of the same quality as non-AI-assisted research,3 we wouldn’t bet against it based on the dramatic model improvements we’ve seen recently. If we have indeed lowered the bar to produce high-quality research, it follows that there should be a notable increase in the production of reasonably high-quality research. That will have two immediate impacts on the field.

First, if the surge in manuscript uploads to pre-print servers is any indication, journals are going to see more submissions. One study, published in Science, estimates a 36% increase in productivity among authors who used LLMs to assist in writing—and the study ends in July 2024, long before AI agents and their underlying models became as capable as they are today. Related work estimates that by September 2024, 22.5% of computer science preprint abstracts were LLM-assisted. One of us (Messing) estimates that after February 2025 (when Claude Code launched) submissions to that same preprint server are from 6-13% above seasonally adjusted expectations. Political scientist and journal editor Kevin Munger has gone on the record predicting 50% increases in submissions to top political science journals in the coming year.

With fields already straining under the weight of submissions, this could potentially make it even harder to find reviewers and to get reviews done in a timely manner. This latter point seems particularly unfortunate given the speed at which the same developments we’ve described previously increase the speed at which new knowledge is produced. This, in turn, may potentially need to push journals toward new models of peer review, including the crucial question of the role of AI in the peer-review process, a possibility that is already attracting the attention of journal editors. While there is sure to be a great deal of resistance to the idea of replacing humans in the peer-review process, it also seems equally likely that we will begin to see experimentation in this regard. This raises a number of questions: can we even call it peer-review if the reviewers are AI agents? What happens when it’s AI turtles all the way down?

It will not only be journals that have to deal with a proliferation of new research—it will be the actual scholars themselves. Here, the use of AI to process newly emergent research seems likely to be less controversial, even if only because it is something people already do (figure out what to read, how much to read of it, etc.) largely in a private manner. Indeed, some of the most exciting tools that we’ve seen people build with AI coding agents involved exactly these kinds of knowledge synthesis applications.

There is also, of course, the question of declarations of AI usage in research production, which we expect will become as ubiquitous as conflict of interest declarations. Researchers already use AI in the scientific process, sometimes without any declaration, so it is not necessarily clear how or where to draw the line with AI coding agents at the moment. Some uses of AI—such as next word prompting to complete sentences from word processors—almost certainly do not warrant declarations, while others—using AI to actually draft papers and run analyses—almost certainly do. We hope fields can align on some sort of common standard in the near-term future. Getting into the potential pros and cons of different disclosure approaches is beyond the scope of this article, but we point to one such proposal as a potential focal point.

Another likely development relates to the economics of hiring research assistants in an era when it has suddenly become possible for people unfamiliar with various programming languages to now produce high-quality code in a matter of minutes. As long as agentic coding AI remains priced as it is, it will inevitably be much cheaper to use it for many tasks commonly fulfilled by both undergraduate and graduate-level research assistants. This, of course, will vary by field and skills set of the research team, but tasks such as literature searches and reviews, some types of manual data labelling, building out public-facing resources such as websites and dashboards, conducting code review, creating data visualizations, and running statistical analyses are all tasks that agentic AI very soon may be—or quite possibly already is—able to perform at lower costs than human research assistants. And even if senior researchers do not choose to use agentic AI themselves, the research assistants they hire will likely be more productive when using agentic AI than in years past.

The implications of driving down the cost of research assistance are not simply positive or negative. On one hand, agentic AI may prove to be a leveling force across scholars with different levels of access to research funds. This applies both to researchers at differently endowed universities or research institutes (both within countries and across countries) and researchers at different stages of career development: even undergraduates will be able to harness their own agentic AI research assistants. On the other hand, the training opportunities associated with being a research assistant may be harder to come by in the future, leaving open the possibility of students getting less hands-on training doing research at precisely the moment when the barriers to starting on their own research have never been lower. We suspect that the way in which we teach undergraduates and train graduate students will need to adapt to this new reality.

As the roles of senior and junior researchers shift, we may have to rethink how we assess merit in the social sciences. The ability to produce high-quality research papers and attribute the quality of these papers to their authors may no longer suffice in a world where AI agents are able to produce them so quickly. We’ve been down this road before, moving from single-author papers to co-authored papers to large multi-author papers (and even more so in the natural sciences), but we have to wonder whether effectively having “AI co-authors” might prove a step too far? If you can write a high-quality paper in an hour, what “credit” will you deserve for coming up with the idea and a clever set of instructions to an AI agent? How might such developments undermine hiring and promotion? Some have suggested placing greater weight on talks that reveal whether a candidate deeply understands their work. On the other hand, new technologies have always brought the fear that learning would somehow suffer. Even writing was not without its critiques: Socrates “faulted writing for weakening the necessity and power of memory, and for allowing the pretense of understanding, rather than true understanding,” and knowledge production certainly seems to have survived the invention of writing.

Policy implications and recommendations

One common refrain in talking with other social scientists who have started using AI coding agents in the past couple of months is that this has felt like the “eureka moment” for social science research. However, as a profession, it’s time to start thinking about what kinds of changes this will bring.

First, resources—these models are fairly expensive to use to their full potential, and we see a possibility for a “rich get richer” scenario where well-resourced universities manage to prioritize access, time, and resources to experiment with agentic coding, while researchers at other institutions are left behind. For now, monthly plans are affordable for light usage, but at the current moment, this is only possible due to venture capital pouring into these firms, offsetting losses. Despite the notable breakthroughs and efficiency gains, prices may rise in the future as demand for the best agentic models increases.

Second, IT and security policy need to be considered carefully in light of agentic AI. IT policies that bear on how AI and third-party apps can be used vary widely across research institutions. The very real security and legal implications of allowing unfettered access for agentic AI to computing resources need to be carefully weighed against the potential productivity gains that could be prevented by policies that restrict agentic AI access to such resources.

Institutions also need to think through guidance on the issues we highlight above: AI declarations of usage in research, how to evaluate social science research candidates for jobs in an age of AI, and how to appropriately use AI in the context of peer review, if at all.

Shifting the focus from research institutions to broader government regulations is a complex topic that deserves its own full discussion. We will simply close by noting that it is always hard to know when it is the right time to create “appropriate” regulation: move too early and there may not be enough information to create the most beneficial regulation; move too late and preventable damage may already have been done. We believe that informing effective policymaking will continue to depend on ensuring access for independent researchers to the necessary data and information so that they can inform the public and policymakers about how the technology works and its downstream consequences—this has been the case for social media companies and will just as much be the case for the newly emergent AI giants.

-

Acknowledgements and disclosures

Disclosure: Ironically, no AI tools were used in the writing of this article.

The authors acknowledge the following editorial support for this article: Robert Seamans, Sanjay Patnaik, Mike Wiley, and Tyler Hernandez.

-

Footnotes

- Coding agents are powerful, semi-autonomous large language models that can generate code, read and write files, search the web, and execute system commands and other programs. See https://missing.csail.mit.edu/2026/agentic-coding/.

- These top five instructions under “You must follow these rules…” were taken verbatim from a post from political scientist Andy Hall, a sign of how agentic coding advice is spreading organically among researchers.

- While it’s difficult to know how much academic research is “AI slop,” internet security research “bug bounty” programs are drowning in technical sounding but ultimately incorrect bug reports, leading cURL to cancel its bug bounty program.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).

Commentary

The train has left the station: Agentic AI and the future of social science research

March 3, 2026