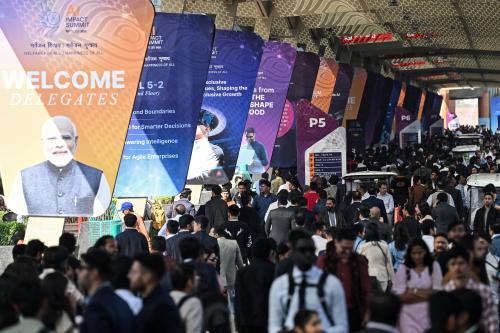

In February, we participated in the AI Impact Summit in New Delhi, the fourth in a series of summits that have evolved alongside artificial intelligence (AI). While the first iteration was a closed event focused on AI risks, India opened its doors to 300,000 people this year, spotlighting its own AI strategy and development and generating excitement among the thousands of young engineers in the crowd.

Ministry of Electronics and Information Technology (MeitY) Secretary S. Krishnan said this was part of a deliberate effort to democratize the summit and give more people access to leading thinkers in AI. Multiple summit discussions focused on AI sovereignty, the growing significance of Global South actors, and AI safety and testing—themes that reflect recommendations from a report about AI sovereignty that we released in conjunction with the event with colleagues at Brookings and the Centre for European Policy Studies (CEPS).

Talking AI sovereignty

The widespread desire for “AI sovereignty” was a major theme of the summit, with India’s approach a frequent example. The Brookings-CEPS report argues that full-stack AI sovereignty is structurally infeasible for most countries and proposes “managed interdependence”: mapping AI stack dependencies, prioritizing feasible interventions, diversifying suppliers and partners, and embedding interoperability through standards, procurement, and governance. Our report pointed to India’s AI strategy as a pragmatic and effective example of this approach: Rather than creating an entirely independent AI stack, it aims to leverage India’s vast digital public infrastructure to support public services and interoperability across India’s 22 languages. Several panels discussed aspects of AI sovereignty from a variety of standpoints, and many panelists echoed recommendations from our report by recognizing the barriers of scale in developing foundational models and focusing instead on specific national needs and strengths. Expanding AI capabilities to more languages and cultures was a frequently mentioned use case.

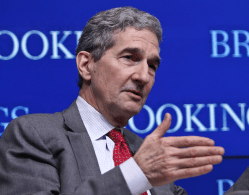

Michael Kratsios, the director of the White House Office of Science and Technology Policy and an architect of the Trump administration’s AI Action Plan, acknowledged the salience of global aspirations for sovereign AI in his speech to the summit’s plenary session for governments. He led off by touting U.S. AI services and chips, saying “[t]he gold standard in AI is made in America.” But, rather than promoting the “full American AI stack” as a one-size-fits-all package, Kratsios framed his pitch around AI sovereignty, speaking of “framing your national destiny” and “strategic autonomy” as “a necessity for independent nations.” He presented “best-in-class technology” as a pathway for “independent partners” to achieve these goals. Our report recommended that the U.S. be adaptive and attuned to local needs in promoting AI exports. Kratsios’ explicit nods to AI sovereignty and partnership suggest a willingness to tailor the pitch for U.S. technology to local constraints.

The summit also showcased the Trump administration’s American AI Exports Program, aimed at enabling partners to build “sovereign” capabilities on top of U.S. chips, cloud, and models while keeping sensitive data within national borders. New initiatives include a Commerce Department-led National Champions Initiative to incorporate partner countries’ leading AI firms into customized export stacks; a U.S. Tech Corps (within the Peace Corps) to support deployment; and new channels to finance exports of the American stack through the U.S. International Development Finance Corporation, Export-Import Bank of the United States, U.S. Trade and Development Agency, and Millennium Challenge Corporation. Also mentioned was an AI Agent Standards Initiative from the Center for AI Standards and Innovation at the U.S. National Institute of Standards and Technology, which is to develop interoperable, secure standards for agentic AI.

The U.S. joined 91 countries and international organizations in signing the “Delhi Declaration” propounded by India, with other notable endorsers including all G7 countries, China, and the EU, along with several of its member states. U.S. endorsement is noteworthy considering that it did not join the statement emerging from last year’s AI summit in Paris. In fact, the Trump administration has broadly criticized multilateral organizations and withdrawn from several. For example, Kratsios’ New Delhi speech reiterated that the U.S. “totally reject[s] global governance of AI,” but also described both regulatory and non-regulatory frameworks as a “necessity” and the Trump administration as working with Congress toward a national policy framework.

The Delhi Declaration addresses cooperation in other areas, however, and contains references to “voluntary” and “non-binding” frameworks. Kratsios also spoke of the need for regulatory certainty. In this light, the U.S. endorsement of soft commitments might reveal some openness to voluntary and non-binding cooperation, even as the administration rejects binding international rules or new international governance bodies.

AI and the Global South

The shift in international focus from safety to diffusion reflects the desire of much of the world to get in on AI and the fear of being excluded by economic and regulatory barriers or by AI systems that do not fit local needs. India made a point of the impact summit being the first in this series hosted by a Global South country, one that can operate as a bridge. The country’s pragmatic approach and its application of AI to digital public infrastructure were very much on display. This was evident not only in many panels throughout the summit but also in a side event, organized by the Indian think tanks Observer Research Foundation and Carnegie India, which brought together Indian AI leaders with representatives from Africa and Asia, along with funders and others, to explore use cases and models.

AI safety abides

The India AI Impact Summit, which was more of a trade show than a policy conference, capped the AI summit trajectory from “safety” in the U.K. in 2023 and South Korea in 2024 through “action” in Paris toward a focus on AI diffusion and development around the world. The Delhi Declaration is focused on sharing the benefits of AI widely: “Wide-scale adoption of AI and AI-based applications hold unprecedented potential to drive economic and social development.”

Even so, safety still played a part in New Delhi discussions. The declaration states that “secure, trustworthy and robust AI is foundational to building trust and maximizing societal and economic benefits.” While J.D. Vance referenced “hand-wringing about safety” at the Paris summit, the April 2025 Office of Management and Budget (OMB) AI guidance gives force to the AI Action Plan’s general language on “secure” and “trustworthy” AI. That guidance directs federal agencies to improve services “while maintaining strong safeguards for civil rights, civil liberties, and privacy,” prioritizing “the use of AI that is safe, secure, and resilient.” The U.S. AI Safety Institute, established in the wake of the U.K. summit, was renamed the Center for AI Standards and Innovation and still convenes an international network of safety institutes.

Prior to the India summit, the U.K. released an International AI Safety Report that included the perspectives of a panel of international experts led by Turing Award winner Yoshua Bengio, who presented the report in New Delhi. The new report synthesizes recent science on capabilities and emerging risks of general-purpose AI (including agentic systems) and discusses practical approaches such as model evaluations, dangerous-capability thresholds, and “if-then” safety commitments. The findings are notably concrete about test-and-mitigation tool chains and institutional readiness (including for the Global South), while being clear about uncertainty, immature benchmarking, and remaining evidence gaps.

Another important contribution to international cooperation on AI safety has been the 2023 Hiroshima AI principles and voluntary code of conduct for model developers. To reinforce this code of conduct, the G7 developed the “Hiroshima process,” a framework for transparency and disclosures implemented by the Organisation for Economic Co-operation and Development (OECD) and rolled out at the Paris AI summit. The responses from companies and organizations have undergone evaluation and were included in a Brookings report done in partnership with the Center for Democracy and Technology on how to strengthen the framework and the quality of disclosures.

The OECD panel on advancing AI transparency in practice discussed the Hiroshima AI Process (HAIP) reporting framework and noted that work is underway on a second version, which would incorporate some recommendations from our earlier paper. Other civil society organizations, like the Centre for Communications Governance, Global Network Initiative, AI Safety Connect, and The Future Society, engaged in conversations during the conference around AI safety, governance, and evaluation. These discussions largely revealed the challenges of testing emerging capabilities as models saturate existing benchmarks and their capabilities outstrip these measures. Despite the difficulties of investigating unknown capabilities, testing for reliability against known capabilities provides a more tractable starting point.

Switzerland announced it will host a follow-on summit in Geneva in 2027. This will follow the first UN global forum on AI in July 2026—an outgrowth of the UN’s 2024 Global Digital Compact. Overall, this year’s summit reinforced three dynamics we trace in our recent work: Sovereignty debates are becoming more pragmatic, diffusion is reshaping the global agenda, and safety is persisting as an implementation challenge for testing and standards. We wonder what will come next year after safety, action, and impact.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).

Commentary

Sovereignty, safety, and scale: Takeaways from the India AI Impact Summit

March 12, 2026