There is a troubling hidden pattern behind success stories of high school graduation: though the percent of students earning a diploma is at an all-time high (82 percent), college completion rates continue to stagnate at best, exacerbated by a throng of college-bound students ill-prepared for advanced courses.

For instance, California’s massive community college system reports being overwhelmed by the more than 50 percent of inbound students who need remedial training in math and English, contributing to a meager 52 percent completion rate.

While there are certainly many economic and cultural factors in long-term dropout rates, we argue that an overlooked hurdle to solving the problem is short-sighted measurement: education leaders too often judge high school success by high school metrics, not whether students end up with the knowledge and perseverance to attain a degree.

For instance, in 2009, the U.S. Department of Education’s research arm, the Institute of Education Sciences, conducted an exhaustive review of research on college preparation and found “low evidence”–the weakest category–that academic preparation for college was effective at improving classroom outcomes. The reviewed studies included a wide variety of methods of college preparation, from increasing the difficulty of academic standards to matching curricular topics to known college courses.

These methods also notably included increasing the quantity of advanced coursework taken in high school, such as Advanced Placement classes. None of the methods was found to have a strongly predictive positive impact on college readiness.

Indeed, in 2013, Dartmouth stopped accepting Advanced Placement credits after 90 percent of students who scored a perfect “5” on the AP Psychology exam reportedly failed the university’s own test.

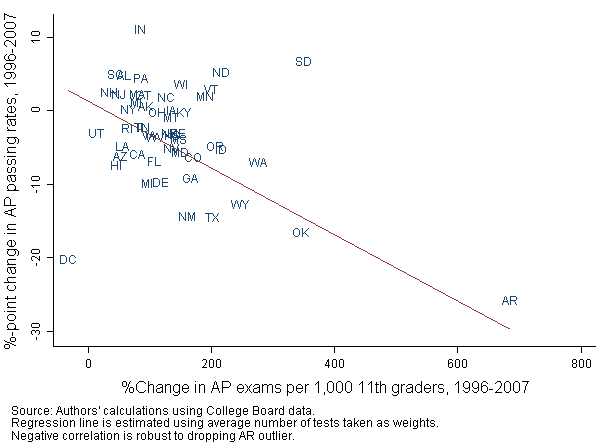

Despite this backlash, some states are still looking to dramatically expand access to AP courses, mandating that they be accepted as college credit as a way to reduce college costs for students. The available evidence suggests this brute force strategy could be misguided, shoving students into the deep end of advanced courses without adequate preparation. In fact, as Figure 1 shows, states that increased AP-taking rates faster between 1996 and 2007 saw larger declines in pass rates.

Figure 1: State-level changes in AP taking and pass rates, 1996-2007

University faculty at institutions far more academically diverse than Dartmouth have long harbored suspicions that many of their students were not being appropriately prepared for their classes, despite previous high school coursework in the same subject. In the U.S. and abroad, many decided to study the issue. Various professors have conducted more than 20 small-scale studies since 1980, using their own students to investigate how much high school knowledge predicted performance in their college courses.

These published studies collectively show that the effect of high school course-taking on college grades ranges from -5.3 to +6.7 points on a 100-point scale. When comparing students of similar race, gender, standardized test scores, and socioeconomic background, most of the papers find that high school course-taking makes no more than a two percent difference in the final college grade, even when high school courses include Advanced Placement.

For example, in one aptly titled study, “Student Performance in First-Year Physics: Does High School Matter?”, researchers found that at their home institution of the University of Sydney, “students with no background in senior high school physics are generally not disadvantaged.” In a separate study, researchers examined what happened after some Canadian provinces completely eliminated their fifth year of high school. The average college GPA of students who completed four years of high school was about two percent lower than that of earlier cohorts who had five years of high school.

While these findings are alarming, it is not clear that they generalize to all college students, since grading can vary dramatically from college to college and instructor to instructor. The students sampled also may not be representative of all students, since faculty who draw an unusually ill-prepared class may be the ones most likely to investigate the problem.

We thus examined whether these patterns held up in a nationally representative database of U.S. students progressing from high school to college in the 1990s. Analyzing thousands of transcripts from the Department of Education’s National Education Longitudinal Study, we found confirmatory evidence that advanced high school courses apparently do little to prepare students for success in college coursework.

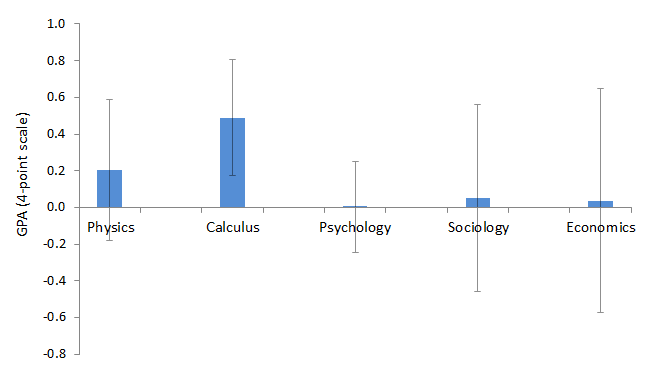

Specifically, we showed that students with one more year of high school instruction in physics, psychology, economics, or sociology on average have grades in their first college course in the same subject just 0.003 to 0.2 points higher on a four-point scale. For example, for students of similar race, socioeconomic status, and high school standardized test scores, those who took a year of high school economics earn a final grade in their college economics class 0.03 points higher than students who have never encountered that subject before. What’s more, these trivially small differences hold even for students who took exactly the same college course.

Figure 2: Relationship between college grades (first course) and high school course-taking

Source: Table 4 from Ferenstein and Hershbein (2013).

Note: Whiskers show 90-percent confidence intervals. Estimates are based on within-college-course analysis using transcript data from the National Education Longitudinal Study of 1988, and include controls for race, sex, socioeconomic status of student, high school standardized test score, and locus of control.

Calculus, the exception, mildly benefits from high school instruction, probably because it is based on cumulative learning to a greater extent than other subjects commonly taken in both high school and college.

Of course, as with any statistical analysis, there is some uncertainty in our estimates, but for all subjects, we can rule out a year of high school instruction increasing college grades by any more than about 0.65 points on a 4-point scale, and for psychology–the most common subject taken in both high school and college–we can rule out an increase as small as one-quarter of a letter grade. (We also note that we cannot rule out moderate negative effects in each subject except calculus, as shown by the error bars.)
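To make the "rule out" reasoning above concrete, here is a minimal sketch of how a 90-percent confidence interval bounds the plausible effect sizes. It assumes a normal approximation, and the point estimate and standard error below are hypothetical illustration values, not figures from our study; the function names are likewise our own invention for this example.

```python
from statistics import NormalDist


def confidence_interval(estimate, std_error, level=0.90):
    """Two-sided confidence interval under a normal approximation (an assumption)."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.645 for a 90% interval
    return estimate - z * std_error, estimate + z * std_error


def can_rule_out(effect, estimate, std_error, level=0.90):
    """An effect size is 'ruled out' when it falls outside the interval."""
    lower, upper = confidence_interval(estimate, std_error, level)
    return effect < lower or effect > upper


# Hypothetical values for illustration only (grade points on a 4-point scale).
estimate = 0.03   # assumed point estimate
std_error = 0.10  # assumed standard error

lower, upper = confidence_interval(estimate, std_error)
print(f"90% interval: [{lower:.3f}, {upper:.3f}]")
print("Can rule out +0.25:", can_rule_out(0.25, estimate, std_error))
print("Can rule out -0.10:", can_rule_out(-0.10, estimate, std_error))
```

With these assumed inputs, a quarter-point gain lies above the interval and is ruled out, while a modest negative effect lies inside it and is not, which mirrors the asymmetry described in the text.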

What can explain why high school course-taking is so weakly related to college grades, both in our study and in previous ones? It is not that high school students are not learning. Rather, it is more likely they often learn the wrong things, do not sufficiently focus on the critical thinking commonly needed in college, or simply forget much of what they learned.

For instance, the ability to analyze evidence and pen a persuasive essay is central to much of college. Most colleges mandate at least a semester course of intensive writing and argument, as it is presumed that even students from top high schools are insufficiently prepared with this essential skill.

Additionally, studies on long-term retention of high school coursework suggest that students forget much or most of what they learn. Students who remember a few basic concepts may hold a head start that quickly diminishes as college classes rush toward advanced material. The little information that is retained from high school may explain the very slight advantage from prior coursework that we observed in our study.

In theory, the Common Core’s emphasis on nonfiction argumentative writing could be a step in the right direction. Yet, it appears at least some top universities doubt whether high schools have developed the capacity to train students for college-level writing. Harvard, for instance, does not allow any substitution for its entry-level writing requirement, even from students who score a perfect 5 on the AP English exam and come from elite schools.

Instead, our evidence suggests a different approach for high schools. Because we are more confident in what is not working than in what is working, we would argue that there is room to try something different.

First, policymakers need to explicitly link high school and college performance. National assessments need to follow students through college graduation to understand what works–and what does not–over the long term. To date, many standardized tests (including international assessments) simply assume that performance in high school necessarily predicts later success, without revealing how students use such knowledge and skills in college classes or to finish their degree.

Second, since there appears to be little opportunity cost to forgoing advanced course content mastery (at least as it is commonly taught today), schools could have more freedom to experiment with innovative courses that may be more useful to students in the long term. For example, non-cognitive skill development and technical education in high school may be a more productive strategy to promote college completion than traditional advanced courses. Certainly, many educators would love to imbue their classrooms with richer experiences that inspire students and teach them valuable life skills.

To be clear, we are not advocating a wholesale rejection of subject content in high school coursework. Rather, we believe that freeing up some curricular time to pilot and study alternative pedagogical approaches may yield more evidence on what works for whom, without the worry that students will be shortchanged by less drilling on subject content.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).