We cannot govern what we cannot measure.

Overview

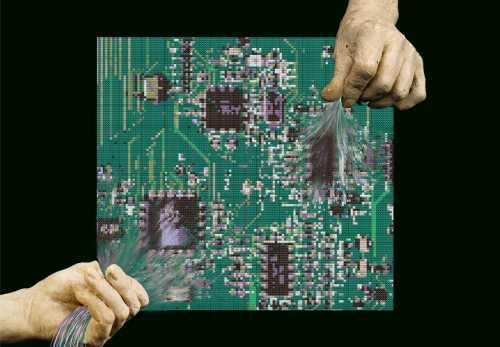

Effective governance of agentic AI depends on the ability to measure, evaluate, and compare system behavior in contexts that resemble real-world deployment. However, many existing evaluation practices were developed for static or narrowly scoped models and are ill-suited to new AI systems that act autonomously over time, interact with users and environments, and pursue open-ended goals. The resulting gaps in measurement limit the capacity of policymakers, developers, and deployers to assess risk, establish appropriate safeguards, and make informed decisions about deployment, accountability, and compliance. Addressing evaluation challenges is therefore not only a technical concern, but a foundational prerequisite for credible governance of agentic AI.

On October 14, 2025, over 40 experts from government, academia, civil society, and industry convened in person and online at the Brookings Institution in Washington, D.C., to address a core challenge in agentic AI governance: that we cannot govern what we cannot measure. Jointly hosted by Carnegie Mellon University (CMU), the Brookings Institution, and the University of California, Berkeley, this workshop (the first in a series) brought together stakeholders to establish shared definitions of agentic AI and chart paths toward closing the gap between capabilities measured through controlled benchmarks and those required in real-world deployment contexts. This brief summarizes four key themes and areas for further research explored at the conference. As agentic systems advance, developing a research roadmap for measurement is critical to build evidence-based governance frameworks that can keep pace with technological change.

Theme 1: Defining agentic AI

There is no consensus on what precisely defines “agentic AI.” The pace of technological development continues to outstrip efforts to stabilize the term. Some accounts emphasize tool use as a defining feature, while more classical formulations center on an AI system’s capacity to perceive, plan, and act.[1][2] Other definitions require continual learning as a condition for agency.

Given this definitional plurality, an understanding of agency as a spectrum rather than a binary property offers more analytical and practical value than attempts to enforce convergence on a single definition. The terminology applied to a system does not change its capabilities or the ways in which it interacts with the world. An excessive focus on whether a system qualifies as “agentic” or what constitutes “agency” risks diverting attention from the more policy-relevant and analytically tractable questions: What functions can the system perform? What does its deployment enable? What vulnerabilities or failure modes does it introduce? What impacts follow from these capabilities? And how can practitioners evaluate systems in ways that are meaningful, scalable, reliable, and interpretable to both users and deployers?

Characterizing AI systems along dimensions such as autonomy, learning capacity, and goal-directedness rather than through a single definition offers greater robustness over time. Such a framing supports governance and evaluation frameworks that can adapt as systems evolve. By contrast, over-indexing on a definitional scheme based on the current state of the art risks entrenching governance and evaluation approaches in outdated conceptual models—models that may prove inadequate as AI capabilities continue to advance.

Theme 2: Gaps in the measurement and evaluation of agentic AI

Current approaches to evaluating agentic AI exhibit several structural gaps. Some challenges arise specifically from the interactive, goal-directed nature of agentic systems, while others reflect broader limitations in the evaluation of large language models that are amplified in agentic settings. The following sections address each in turn.

Challenges distinctive to or substantially heightened for agentic AI

- Reliability and behavioral consistency are poorly captured by existing benchmarks. Agentic systems often exhibit stochastic, non-deterministic behavior across runs, environments, and time horizons. A single accuracy score on a static benchmark is insufficient to capture this variability. The field remains at what might be described as a “Wright Brothers” stage: impressive demonstrations under controlled conditions fall well short of the reliability required for sustained real-world deployment, particularly in higher-risk domains. Even strong performance in laboratory settings does not guarantee dependable behavior in practice, underscoring the need for standardized, systematic approaches to reliability testing.

- Benchmark-based evaluation cannot substitute for real-world, in-context assessments. Because agentic systems operate through sustained interaction with environments and users, their behavior cannot be fully characterized through contained benchmarks alone. Field testing in deployment-like settings, such as controlled pilots, red-teaming exercises, or monitored trials with real users, is needed to understand how agents pursue goals, respond to unanticipated conditions, and interact with organizational workflows. Ongoing monitoring of deployed systems is equally important, as agentic behavior may shift over time in response to changing environments, user interactions, or updated objectives. Together, these approaches can reveal structural and procedural constraints on adoption that are invisible to static benchmarks but critical for assessing real-world viability. This gap also carries implications for governance and accountability: If benchmark performance does not reliably predict deployed behavior, the evidentiary basis for assurance or certification of agentic systems will remain an open challenge.

- General-purpose benchmarks fail to reflect domain- and context-specific performance. Relatedly, while general-purpose benchmarks provide some insight into baseline capabilities, agentic AI performance is highly sensitive to domain and context of use, user behavior, and environment structure. Meaningful evaluation therefore requires customization to specific deployment scenarios and engagement with domain experts. Without such grounding, benchmarks risk measuring abstract competence rather than practical effectiveness and reliability.
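
The first point in this list, that a single accuracy score hides run-to-run variability, can be illustrated with a minimal sketch. It assumes each task is attempted several times and recorded as pass or fail. The function name, the metric names, and the example data are hypothetical and do not come from the workshop.

```python
def reliability_summary(outcomes: dict[str, list[bool]]) -> dict[str, float]:
    """Summarize repeated-run outcomes.

    `outcomes` maps a task name to pass/fail results from repeated runs
    of the same agent on that task (hypothetical data layout).
    """
    n_tasks = len(outcomes)
    per_task_rates = [sum(runs) / len(runs) for runs in outcomes.values()]
    return {
        # Average success rate: what a single accuracy score would report.
        "mean_success_rate": sum(per_task_rates) / n_tasks,
        # Share of tasks solved on every run (strict consistency).
        "all_runs_pass_rate": sum(all(runs) for runs in outcomes.values()) / n_tasks,
        # Share of tasks solved on at least one run (best-case capability).
        "any_run_pass_rate": sum(any(runs) for runs in outcomes.values()) / n_tasks,
    }


# Hypothetical results: three tasks, four runs each.
example = {
    "book_travel": [True, True, True, True],
    "file_expense": [True, False, True, False],
    "triage_email": [False, False, True, False],
}
summary = reliability_summary(example)
```

In this illustrative data, the agent solves every task at least once but solves only one of three tasks on every run. An average success rate alone would not reveal that gap.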

Challenges inherited from LLM evaluation, but exacerbated in agentic systems

- Training data contamination undermines generalizability. As with LLMs more broadly, benchmark tasks may be inadvertently included in training data, particularly when models are trained on internet-scale corpora. This can lead to inflated performance that reflects memorization rather than generalization (the ability to apply learning to new data). For agentic systems, this problem is compounded by the difficulty of designing evaluations that reflect genuinely novel environments, workflows, and user objectives. Evaluations should therefore incorporate explicit generalization tests, including holdout environments, that differ meaningfully from training conditions.

- Overfitting to benchmark tasks may be incentivized by competitive evaluation regimes. In line with Goodhart’s law (“When a measure becomes a target, it ceases to be a good measure”), once benchmark performance becomes a target, it ceases to be an informative metric. Developers may optimize systems to perform well on specific evaluations without improving real-world performance. This risk is particularly acute for agentic AI, where benchmark success may mask brittle or unsafe behavior outside narrowly defined test conditions, since benchmarks cannot account for the full range of inputs, edge cases, and environmental variability that systems encounter in real-world practice.

- Accuracy-focused metrics can obscure cost-performance tradeoffs. As with LLMs, improvements in agentic tasks can often be achieved by spending more resources at inference time (when the model is actively generating a response), such as token usage (the amount of text the model reads and writes) or compute (the processing power used to generate each response). Evaluations that fail to jointly consider performance, cost, and other metrics provide an incomplete basis for deployment decisions.

- Evaluation itself is resource intensive. Designing, maintaining, and running meaningful evaluations, especially those involving field testing or domain-specific benchmarks, can be expensive. These costs constrain both who can perform evaluations and which evaluation mechanisms are sustainable. As with performance metrics, the feasibility and scalability of evaluation frameworks must be assessed alongside their methodological rigor.
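
One way to consider performance and cost jointly, as the cost-performance point above recommends, is to report which configurations are not outperformed on both dimensions at once (a Pareto frontier). The sketch below assumes two metrics, accuracy and average cost per task. The configuration names and all numbers are hypothetical and serve only as illustration.

```python
from typing import NamedTuple


class EvalResult(NamedTuple):
    name: str
    accuracy: float  # fraction of tasks solved; higher is better
    cost_usd: float  # average cost per task; lower is better


def pareto_frontier(results: list[EvalResult]) -> list[EvalResult]:
    """Return the results that no other result beats on both metrics."""
    frontier = []
    for r in results:
        dominated = any(
            o.accuracy >= r.accuracy
            and o.cost_usd <= r.cost_usd
            and (o.accuracy > r.accuracy or o.cost_usd < r.cost_usd)
            for o in results
        )
        if not dominated:
            frontier.append(r)
    # Sort by cost so the tradeoff reads from cheapest to most expensive.
    return sorted(frontier, key=lambda r: r.cost_usd)


# Hypothetical evaluation results for four agent configurations.
results = [
    EvalResult("baseline", 0.62, 0.10),
    EvalResult("more_tokens", 0.70, 0.45),
    EvalResult("retry_5x", 0.69, 0.90),
    EvalResult("large_model", 0.78, 1.20),
]
frontier = pareto_frontier(results)
```

Here the hypothetical `retry_5x` configuration drops out because `more_tokens` is both cheaper and more accurate. The remaining options represent real tradeoffs that a deployer would need to weigh.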

Theme 3: Areas for further research for effective agentic AI evaluation and governance

Meaningful governance of agentic AI requires evaluation frameworks that extend beyond model-centric benchmarks to encompass system behavior, socio-technical impacts, and public interest risks. Areas for future research can be organized into three interrelated layers: (1) technical foundations of agentic systems, (2) socio-technical impacts and deployment contexts, and (3) government- and public interest-led safety and oversight needs. Across all three layers, there is a common need for cost- and benefit-oriented measurement and evaluation approaches to support decisionmaking by developers, deployers, and regulators.

Evaluating the technical underpinnings of agents

- Applying principles of measurement science to agentic AI evaluation: Concepts from measurement science, such as construct validity (does the measure capture the intended concept?), content validity (does it cover the full scope of the construct?), and predictive validity (does it forecast real-world performance?), have been used to evaluate complex abstract phenomena in political science, psychology, and medicine. Rather than developing ad hoc evaluation approaches, AI researchers can adopt and adapt these mature methodologies to agentic systems, grounding evaluation in well-established theory.

- Simulating human-agent interaction: Automatic evaluation of agents often requires assumptions about how humans will interact with agents. However, current approaches to simulating real-world environments are poor proxies. Human actions are not easily reducible to probabilistic models, and even expert understanding of human learning, decisionmaking, and adaptation is limited. Developing statistically rigorous alternatives to simulating human behavior—or entirely different evaluation paradigms that do not rely on simulating human behavior—remains an open research challenge.

- Evaluating memory-enabled personalized agents: Agents that adapt to individual users over time can exhibit markedly different behaviors depending on interaction history and user preferences. Single-score benchmarks designed for generic agents cannot capture this variability. New evaluation methods are needed to assess personalized performance, distributional outcomes across users, and potential inequities introduced by personalization.

- Evaluating long-horizon tasks: Most existing benchmarks assess agent performance over short time horizons, ranging from minutes to hours. In practice, deployed agents may operate over extended periods: they can currently sustain tasks lasting hours, and projections suggest week-long autonomous operation within a few years. Over these longer horizons, agents can accumulate state (the context and information they’ve gathered from earlier steps, which shapes what they do next) from prior actions, interact with evolving environments, and compound errors. Systematic and feasible methods for evaluating long-term reliability, behavioral drift, and failure modes over extended horizons remain largely unexplored.

- Evaluating multi-agent and distributed systems: As agentic AI becomes more widespread, agents will increasingly interact with other agents and humans across organizational and platform boundaries. Predicting system-level behavior in such settings is difficult, particularly when agents pursue partially aligned or competing objectives. Research is needed on how to evaluate individual agent behavior within networks of agents, as well as emergent properties of multi-agent systems. Continual monitoring of deployed multi-agent systems may also help detect emergent behaviors and interaction patterns that are difficult to anticipate through pre-deployment evaluation alone.

- Evaluating system-level security and robustness: Agentic systems that perform well on model-level safety benchmarks often fail under red teaming or adversarial testing. Security in agentic AI is layered, encompassing the model, scaffolding, tools, interfaces, and environment. Open questions include how vulnerabilities propagate across layers, whether security at one layer can compensate for weaknesses at another, and how to reason about compositional security properties in complex agentic systems. Continual monitoring of deployed systems may also prove important, as vulnerabilities can emerge under real-world conditions and evolve as system components are updated or reconfigured.
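
The compounding of errors over long horizons, noted in the long-horizon point above, can be made concrete with simple arithmetic. The sketch below rests on a strong simplifying assumption that the source does not make: every step succeeds independently with the same probability, and a single failed step fails the whole task. Real agents can recover from some errors and can fail in correlated ways, so this is only an illustration. The function names are hypothetical.

```python
import math


def end_to_end_success(per_step_success: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent steps."""
    return per_step_success ** steps


def max_steps_for_target(per_step_success: float, target: float) -> int:
    """Longest task length whose end-to-end success stays at or above target."""
    if not (0.0 < per_step_success < 1.0 and 0.0 < target < 1.0):
        raise ValueError("both probabilities must lie strictly between 0 and 1")
    return math.floor(math.log(target) / math.log(per_step_success))


# Illustrative values only: 99% per-step success over growing horizons.
for steps in (10, 100, 1000):
    print(steps, end_to_end_success(0.99, steps))
```

Under these assumptions, an agent that succeeds on 99% of individual steps completes a 100-step task only about 37% of the time. This is one reason short-horizon benchmark scores may say little about long-horizon reliability.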

Evaluating agentic AI’s impacts, deployments, and decisionmaking

- Establishing predictive validity for real-world deployment: Research is needed on evaluation designs that genuinely test generalization, including novel environments, workflows, and user behaviors, and better predict real-world performance.

- Developing context-specific evaluations for high-risk domains: General purpose benchmarks are insufficient for high-stakes and specialized domains, such as health care, finance, and critical infrastructure. Effective evaluation in these contexts requires collaboration with domain experts and the development of tailored protocols that balance standardization with sensitivity to local constraints and risks.

- Preserving meaningful human control as agents accelerate: As agents become more autonomous and capable of executing many actions rapidly, users risk losing meaningful control. When agents can act faster than humans can review or intervene, evaluation must consider interface design, alerting mechanisms, and decision-support tools that enable effective oversight without turning humans into bottlenecks.

- Evaluating organizational readiness: Most organizations have historically been designed for human-only workflows, optimized through specialization and division of labor. Integration of agentic AI often requires organizations to combine roles, redesign processes, and manage significant organizational change. This transformation is as much an organizational change-management problem as a technology problem. Research is needed on how to evaluate organizational readiness, measure transformation costs and benefits, and assess workforce impacts over time.

- Assessing economic and societal effects of agentic AI adoption: Agentic AI capabilities cannot be evaluated independent of their downstream consequences. Evaluation frameworks should extend beyond system-level performance to consider broader economic, social, and institutional effects, possibly drawing on methods from economics, sociology, and organizational theory.

- Accounting for environmental costs: Agentic systems, particularly those using advanced reasoning and long-horizon planning, can be substantially more resource-intensive than simpler models. Environmental impact reporting remains limited, and this dimension is largely absent from current evaluation practices. Research is needed on how to incorporate environmental cost into cost-benefit analyses and deployment decisions.

Agentic AI governance and accountability

- Apportioning liability and responsibility: AI systems lack legal personality, so accountability ultimately rests with humans and organizations. When agents act on a user’s behalf, it becomes unclear where liability lies. Open research questions include how to allocate responsibility among developers, deployers, platforms, and users, and whether new legal theories or statutory frameworks are needed for distributed, cross-jurisdictional action.

- Designing logging and auditability mechanisms: Credible oversight requires detailed telemetry and audit trails that capture not only what actions an agent took, but also what alternatives it considered and why. Research is needed on what to log, at what level of abstraction, and how to present information in ways that support effective audits, investigations, and compliance without overwhelming human reviewers.

- Establishing funding models and institutional models for evaluation: Ongoing evaluation and monitoring of agentic systems is costly. Grant funding alone is unlikely to sustain the ecosystem of third-party evaluators, safety auditors, and assessment bodies. Research into and experimentation with more viable funding models will be critical to ensuring the health of the nascent evaluation industry.
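
As a purely illustrative sketch of the logging point above, the record below captures the three elements the text names: the action taken, the alternatives considered, and the rationale. The field names, the JSON-lines output format, and the example values are assumptions. What to log and at what level of abstraction remains the open research question described above.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One hypothetical audit-trail entry for a single agent action."""

    agent_id: str
    action: str
    rationale: str
    alternatives_considered: list[str] = field(default_factory=list)
    # UTC timestamp recorded when the entry is created.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        """Serialize to one JSON line, suitable for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example entry.
record = AuditRecord(
    agent_id="agent-001",
    action="send_refund",
    rationale="Order matched the refund policy for damaged goods.",
    alternatives_considered=["escalate_to_human", "request_photo_evidence"],
)
line = record.to_json_line()
```

A flat, structured record like this is easy to search and aggregate, which may help reviewers audit many actions without reading each one in full.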

Theme 4: Developing credible, independent safety assessments

Governments and public interest institutions increasingly require trustworthy evaluations of agentic AI safety and security, particularly for systems deployed at scale or in high-risk contexts. Emerging efforts by AI safety and security institutes, as well as independent assessment platforms, highlight the need for standardized, transparent, and reproducible evaluation methods that can inform regulatory decisions and public trust.

Conclusions

Evaluating agentic AI is a fundamentally sociotechnical challenge that must evolve alongside both technological capabilities and the organizational contexts in which they are deployed. Improving the real-world usefulness of evaluation frameworks requires sustained collaboration across disciplines, including among computer scientists, social scientists, legal scholars, and domain experts, as well as close engagement with those who deploy and operate these systems. A human-centric approach to evaluation necessitates incorporating the perspectives of stakeholders who use, govern, and are affected by agentic AI. Rather than reinventing measurement theory, evaluation efforts should draw on established practices from disciplines experienced in assessing complex systems, while remaining attentive to their known limitations.

To advance a coherent and durable research agenda, the workshop series will establish ongoing communication and solicit community input to shape the program for a convening at Carnegie Mellon University in spring 2026, followed by a subsequent workshop at the University of California, Berkeley in fall 2026. Broadening participation beyond the initial workshop is a priority, particularly to incorporate perspectives from AI communities, system deployers, legal scholars, and policymakers. In the interim, smaller, topic-focused discussions will sustain momentum, deepen engagement on specific challenges, and lay the groundwork for longer-term research on the evaluation of agentic AI.

Footnotes

1. Here is a non-exhaustive list of proposed definitions of an “agent” that include tool use: Mitchell et al., Hugging Face, Google Cloud, Dawn Song, Kapoor and Stroebl et al., Acharya et al., Lei et al.

2. Stuart J. Russell and Peter Norvig. “Intelligent Agents,” in Artificial Intelligence: A Modern Approach, 3rd ed. Upper Saddle River, NJ: Prentice Hall, 2010.
