This report is part of “A Blueprint for the Future of AI,” a series from the Brookings Institution that analyzes the new challenges and potential policy solutions introduced by artificial intelligence and other emerging technologies.

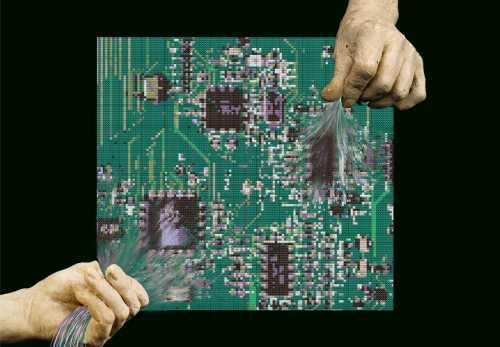

Few concepts are as poorly understood as artificial intelligence. Opinion surveys show that even top business leaders lack a detailed sense of AI and that many ordinary people confuse it with super-powered robots or hyper-intelligent devices. Hollywood helps little in this regard by fusing robots and advanced software into self-replicating automatons such as the Terminator’s Skynet or the evil HAL seen in Arthur C. Clarke’s “2001: A Space Odyssey,” which goes rogue after humans plan to deactivate it. The lack of clarity around the term enables technology pessimists to warn that AI will conquer humans, suppress individual freedom, and destroy personal privacy through a digital “1984.”

Part of the problem is the lack of a universally agreed-upon definition. Alan Turing generally is credited with originating the concept when he speculated in 1950 about “thinking machines” that could reason at the level of a human being. His well-known “Turing Test” proposes that a computer should be considered “thinking” if a human judge, exchanging typed messages with both the machine and a person, cannot reliably tell which is which.

A few years later, John McCarthy became the first to use the term “artificial intelligence” to denote machines that could think autonomously. He described the threshold as “getting a computer to do things which, when done by people, are said to involve intelligence.”

Since the 1950s, scientists have argued over what constitutes “thinking” and “intelligence,” and what counts as “fully autonomous” when it comes to hardware and software. Advanced computers already have beaten top human players, as IBM’s Deep Blue did at chess in 1997 and IBM’s Watson did on the quiz show “Jeopardy!” in 2011, and such systems can process enormous amounts of information almost instantly.


Today, AI generally is thought to refer to “machines that respond to stimulation consistent with traditional responses from humans, given the human capacity for contemplation, judgment, and intention.” According to researchers Shubhendu and Vijay, these software systems “make decisions which normally require [a] human level of expertise” and help people anticipate problems or deal with issues as they come up. As argued by John Allen and myself in an April 2018 paper, such systems have three qualities that constitute the essence of artificial intelligence: intentionality, intelligence, and adaptability.

In the remainder of this paper, I discuss these qualities and why it is important to make sure each accords with basic human values. Each of the AI features has the potential to move civilization forward in progressive ways. But without adequate safeguards or the incorporation of ethical considerations, the AI utopia can quickly turn into dystopia.

Intentionality

Artificial intelligence algorithms are designed to make decisions, often using real-time data. They are unlike passive machines that are capable only of mechanical or predetermined responses. Using sensors, digital data, or remote inputs, they combine information from a variety of different sources, analyze the material instantly, and act on the insights derived from those data. As such, they are designed by humans with intentionality and reach conclusions based on their instant analysis.

An example from the transportation industry shows how this happens. Autonomous vehicles are equipped with LIDAR (light detection and ranging) and other remote sensors that gather information about the vehicle’s surroundings. LIDAR emits pulses of laser light and times their reflections to detect objects in front of and around the vehicle, which lets the car make instantaneous judgments about the presence of objects, their distances, and whether it is about to hit something. On-board computers combine this information with other sensor data to determine whether conditions are dangerous, whether the vehicle needs to shift lanes, and whether it should slow or stop completely. All of that material has to be analyzed instantly to avoid crashes and keep the vehicle in the proper lane.
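As a rough illustration of the decision step described above, the sketch below converts LIDAR echo timings into distances (the pulse travels out and back, so distance is the speed of light times the round-trip time, divided by two) and picks an action from the time to collision with the nearest object. The function names and the 1.5- and 4-second thresholds are hypothetical; real autonomous-driving systems fuse many sensors and use far more elaborate models.

```python
# Hypothetical sketch: turn LIDAR echo timings into a simple driving decision.
# Thresholds are illustrative assumptions, not values from any real vehicle.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_echo(round_trip_s: float) -> float:
    """Distance to a reflecting object; the pulse goes out and back, so halve it."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def time_to_collision(distance_m: float, closing_speed_m_per_s: float) -> float:
    """Seconds until contact if nothing changes; infinite if the gap is not closing."""
    if closing_speed_m_per_s <= 0:
        return float("inf")
    return distance_m / closing_speed_m_per_s


def choose_action(echoes_s: list, closing_speed_m_per_s: float,
                  stop_below_s: float = 1.5, slow_below_s: float = 4.0) -> str:
    """Return 'stop', 'slow', or 'continue' based on the nearest detected object."""
    if not echoes_s:
        return "continue"
    nearest_m = min(distance_from_echo(t) for t in echoes_s)
    ttc = time_to_collision(nearest_m, closing_speed_m_per_s)
    if ttc < stop_below_s:
        return "stop"
    if ttc < slow_below_s:
        return "slow"
    return "continue"
```

For example, an echo returning after 200 nanoseconds places an object about 30 meters away; closing on it at 10 meters per second leaves roughly three seconds, so this sketch would choose to slow down.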

With massive improvements in storage systems, processing speeds, and analytic techniques, these algorithms are capable of tremendous sophistication in analysis and decisionmaking. Financial algorithms can spot minute differentials in stock valuations and undertake market transactions that take advantage of that information. The same logic applies in environmental sustainability systems that use sensors to determine whether someone is in a room and automatically adjust heating, cooling, and lighting based on that information. The goal is to conserve energy and use resources in an optimal manner.
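A minimal sketch of the occupancy logic described above, assuming a single motion sensor and simple heating and cooling setpoints. The setpoint temperatures, the 15-minute vacancy timeout, and all names are illustrative assumptions, not values drawn from any real building system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoomSettings:
    heating_setpoint_c: float
    cooling_setpoint_c: float
    lights_on: bool


# Hypothetical comfort and setback values; real systems tune these per building.
OCCUPIED = RoomSettings(heating_setpoint_c=21.0, cooling_setpoint_c=24.0, lights_on=True)
VACANT = RoomSettings(heating_setpoint_c=17.0, cooling_setpoint_c=28.0, lights_on=False)


def settings_for(motion_detected: bool, minutes_since_motion: float,
                 vacancy_timeout_min: float = 15.0) -> RoomSettings:
    """Treat the room as occupied on motion or shortly after it; otherwise set back."""
    if motion_detected or minutes_since_motion < vacancy_timeout_min:
        return OCCUPIED
    return VACANT


def hvac_mode(room_temp_c: float, settings: RoomSettings) -> str:
    """Heat below the heating setpoint, cool above the cooling setpoint, else idle."""
    if room_temp_c < settings.heating_setpoint_c:
        return "heat"
    if room_temp_c > settings.cooling_setpoint_c:
        return "cool"
    return "idle"
```

The energy saving comes from the wider band between heating and cooling setpoints when the room is empty: in this sketch a 19°C room is left alone when vacant but heated when occupied.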

As long as these systems conform to important human values, there is little risk of AI going rogue or endangering human beings. Computers can be intentional while analyzing information in ways that augment humans or help them perform at a higher level. However, if the software is poorly designed or based on incomplete or biased information, it can endanger humanity or replicate past injustices.

Intelligence

AI often is undertaken in conjunction with machine learning and data analytics, and the resulting combination enables intelligent decisionmaking. Machine learning takes data and looks for underlying trends. If it spots something that is relevant for a practical problem, software designers can take that knowledge and use it with data analytics to understand specific issues.
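As a minimal illustration of “looking for underlying trends,” the sketch below fits a straight line to paired observations by ordinary least squares, about the simplest form of learning a trend from data. This is a textbook technique chosen for illustration, not a description of any particular AI system, and the function name is hypothetical.

```python
def fit_trend(xs: list, ys: list) -> tuple:
    """Ordinary least-squares line: returns (slope, intercept) for y ~ slope * x + intercept."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two paired observations")
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Slope = covariance of x and y divided by variance of x (both unnormalized).
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean
```

Once the slope and intercept are learned, the same line can be used to project the trend forward, which is the kind of knowledge designers can then combine with other analytics.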

For example, there are AI systems for managing school enrollments. They compile information on neighborhood location, desired schools, substantive interests, and the like, and assign pupils to particular schools based on that material. As long as there is little contentiousness or disagreement regarding basic criteria, these systems work intelligently and effectively.


Of course, that often is not the case. Reflecting the importance of education for life outcomes, parents, teachers, and school administrators fight over the importance of different factors. Should students always be assigned to their neighborhood school or should other criteria override that consideration? As an illustration, in a city with widespread racial segregation and economic inequalities by neighborhood, elevating neighborhood school assignments can exacerbate inequality and racial segregation. For these reasons, software designers have to balance competing interests and reach intelligent decisions that reflect values important in that particular community.
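The article does not say which mechanism any enrollment system uses. One well-known approach used in some districts’ matching systems is student-proposing deferred acceptance (the Gale–Shapley algorithm), sketched below as an illustration under that assumption. Each school’s priority list can optionally put neighborhood residents first, so flipping the hypothetical `use_neighborhood` flag shows how a single policy choice changes who goes where. Student names, schools, and the lottery numbers used for tie-breaking are invented.

```python
from __future__ import annotations

from typing import Optional


def neighborhood_priority(school: str, students: list[str], home_school: dict[str, str],
                          lottery: dict[str, int], use_neighborhood: bool) -> list[str]:
    """A school's priority list: optionally neighborhood residents first, then lottery order."""
    def key(st: str) -> tuple[int, int]:
        local_first = 0 if use_neighborhood and home_school[st] == school else 1
        return (local_first, lottery[st])
    return sorted(students, key=key)


def deferred_acceptance(student_prefs: dict[str, list[str]],
                        school_priority: dict[str, list[str]],
                        capacity: dict[str, int]) -> dict[str, Optional[str]]:
    """Students apply in preference order; each school tentatively holds its
    highest-priority applicants up to capacity; rejected students apply onward."""
    rank = {school: {st: i for i, st in enumerate(order)}
            for school, order in school_priority.items()}
    next_choice = {st: 0 for st in student_prefs}
    held: dict[str, list[str]] = {school: [] for school in school_priority}
    free = list(student_prefs)
    while free:
        st = free.pop()
        prefs = student_prefs[st]
        if next_choice[st] >= len(prefs):
            continue  # preference list exhausted: student stays unassigned
        school = prefs[next_choice[st]]
        next_choice[st] += 1
        if st not in rank[school]:
            free.append(st)  # school does not rank this student; try the next choice
            continue
        held[school].append(st)
        held[school].sort(key=lambda s: rank[school][s])
        if len(held[school]) > capacity[school]:
            free.append(held[school].pop())  # bump the lowest-priority student
    assignment: dict[str, Optional[str]] = {st: None for st in student_prefs}
    for school, students in held.items():
        for st in students:
            assignment[st] = school
    return assignment
```

With two one-seat schools that both students prefer in the same order, giving neighborhood residents priority sends each student to the local school, while a pure lottery can send the lottery winner across town instead. The algorithm is identical in both runs; only the value-laden priority rule differs.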

Making these kinds of decisions increasingly falls to computer programmers. They must build intelligent algorithms that make decisions based on a number of different considerations. That can include basic principles such as efficiency, equity, justice, and effectiveness. Figuring out how to reconcile conflicting values is one of the most important challenges facing AI designers. It is vital that they write code and incorporate information that is unbiased and non-discriminatory. Failure to do that leads to AI algorithms that are unfair and unjust.
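To make the point about conflicting values concrete, here is a hedged sketch in which each option is scored against several criteria and a set of weights expresses how much a community values each one. The option names, criterion scores, and weights are invented placeholders, not measurements of any real policy; the point is only that changing the weights, which is a value judgment rather than a technical one, can change which option comes out on top.

```python
def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of criterion scores; weights need not sum to 1."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(w * scores[criterion] for criterion, w in weights.items()) / total


def rank_options(options: dict, weights: dict) -> list:
    """Option names sorted from highest to lowest weighted score."""
    return sorted(options, key=lambda name: weighted_score(options[name], weights),
                  reverse=True)


# Placeholder numbers for illustration only (scores on a 0-1 scale).
OPTIONS = {
    "neighborhood_first": {"efficiency": 0.9, "equity": 0.4},
    "integration_weighted": {"efficiency": 0.6, "equity": 0.8},
}
```

Weighting efficiency 7 to 3 over equity ranks the neighborhood-first option highest in this sketch; reversing the weights ranks the integration-weighted option highest, with no change to the underlying data.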

Adaptability

The last quality that marks AI systems is the ability to learn and adapt as they compile information and make decisions. Effective artificial intelligence must adjust as circumstances or conditions shift. This may involve alterations in financial situations, road conditions, environmental considerations, or military circumstances. AI must integrate these changes in its algorithms and make decisions on how to adapt to the new possibilities.
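One simple way a system can keep adjusting as conditions shift is an exponentially weighted moving average, in which each new observation pulls the running estimate part of the way toward it so that stale data gradually fade out. The sketch below is a generic illustration of that idea, not a claim about how any particular AI product adapts; the class name and the default smoothing factor `alpha` are assumptions.

```python
class AdaptiveEstimate:
    """Exponentially weighted moving average: each observation moves the estimate
    a fraction `alpha` of the way toward it, so older data count for less and less."""

    def __init__(self, initial: float, alpha: float = 0.3) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.value = initial
        self.alpha = alpha

    def update(self, observation: float) -> float:
        """Blend in a new observation and return the updated estimate."""
        self.value += self.alpha * (observation - self.value)
        return self.value
```

For instance, if a road segment that used to take 10 minutes starts taking 20 because of construction, an estimate with `alpha=0.5` moves to 15, then 17.5, then 18.75 minutes over three trips. A larger `alpha` adapts faster but reacts more to one-off noise.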

One can illustrate these issues most dramatically in the transportation area. Autonomous vehicles can use machine-to-machine communications to alert other cars on the road about upcoming congestion, potholes, highway construction, or other possible traffic impediments. Vehicles can take advantage of the experience of other vehicles on the road, without human involvement, and the entire corpus of their achieved “experience” is immediately and fully transferable to other similarly configured vehicles. Their advanced algorithms, sensors, and cameras incorporate experience in current operations, and use dashboards and visual displays to present information in real time so human drivers are able to make sense of ongoing traffic and vehicular conditions.
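The sketch below is a deliberately simplified, in-process stand-in for machine-to-machine hazard sharing: vehicles post reports keyed by road segment, and any vehicle planning a route can read them, so a car benefits from hazards it never saw itself. The `SharedHazardMap` class, the segment names, and the rule of requiring two independent reports before treating a segment as hazardous are all hypothetical; real vehicle-to-vehicle systems use standardized radio messaging, authentication, and much richer data.

```python
from collections import defaultdict


class SharedHazardMap:
    """Hypothetical shared store of hazard reports, keyed by road segment."""

    def __init__(self, min_reports: int = 2) -> None:
        self.min_reports = min_reports
        self._reporters = defaultdict(set)  # segment -> ids of vehicles reporting a hazard

    def report(self, vehicle_id: str, segment: str) -> None:
        # A set means repeat reports from the same vehicle count only once.
        self._reporters[segment].add(vehicle_id)

    def is_hazardous(self, segment: str) -> bool:
        # Require independent confirmations so one faulty sensor cannot close a road.
        return len(self._reporters.get(segment, ())) >= self.min_reports


def pick_route(routes: list, hazards: SharedHazardMap):
    """Return the first route (a list of segments) with no confirmed hazard, else None."""
    for route in routes:
        if not any(hazards.is_hazardous(segment) for segment in route):
            return route
    return None
```

Requiring confirmation from more than one vehicle is one possible design choice for limiting the influence of a single faulty or malicious report; the threshold trades speed of warning against false alarms.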

A similar logic applies to AI devised for scheduling appointments. There are personal digital assistants that can ascertain a person’s preferences and respond to email requests for personal appointments in a dynamic manner. Without any human intervention, a digital assistant can make appointments, adjust schedules, and communicate those preferences to other individuals. Building adaptable systems that learn as they go has the potential to improve effectiveness and efficiency. These kinds of algorithms can handle complex tasks and make judgments that replicate or exceed what a human could do. But making sure they “learn” in ways that are fair and just is a high priority for system designers.
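The article does not describe how any particular digital assistant works. As a hedged sketch of one narrow piece of the task, the function below finds the earliest meeting time that falls within every participant’s preferred hours and does not overlap anyone’s existing appointments. The function name, the whole-hour preference format (0 to 23), and the 15-minute search step are assumptions for illustration.

```python
from __future__ import annotations

from datetime import date, datetime, time, timedelta
from typing import Optional


def earliest_common_slot(day: date,
                         busy_by_person: dict[str, list[tuple[datetime, datetime]]],
                         preferred_hours: dict[str, tuple[int, int]],
                         duration: timedelta,
                         step: timedelta = timedelta(minutes=15)) -> Optional[datetime]:
    """Earliest start time on `day` that suits everyone's preferred hours and calendars."""
    # Search only the window every participant prefers (hours given as 0-23).
    start_hour = max(lo for lo, _ in preferred_hours.values())
    end_hour = min(hi for _, hi in preferred_hours.values())
    if start_hour >= end_hour:
        return None
    t = datetime.combine(day, time(start_hour))
    window_end = datetime.combine(day, time(end_hour))
    while t + duration <= window_end:
        end = t + duration
        # Half-open intervals [t, end) and [s, e) overlap when s < end and t < e.
        clash = any(s < end and t < e
                    for busy in busy_by_person.values() for s, e in busy)
        if not clash:
            return t
        t += step
    return None
```

If one participant is busy from 9:00 to 10:00, another prefers to start no earlier than 10:00 and is busy from 10:00 to 11:00, a 30-minute meeting lands at 11:00. A learning assistant would go further and infer the preferred hours from past behavior rather than take them as fixed inputs.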

Conclusion

In short, there have been extraordinary advances in recent years in the ability of AI systems to incorporate intentionality, intelligence, and adaptability in their algorithms. Rather than being mechanistic or deterministic in how the machines operate, AI software learns as it goes along and incorporates real-world experience in its decisionmaking. In this way, it enhances human performance and augments people’s capabilities.

Of course, these advances also make people nervous about doomsday scenarios sensationalized by movie-makers. Situations where AI-powered robots take over from humans or weaken basic values frighten people and lead them to wonder whether AI is making a useful contribution or runs the risk of endangering the essence of humanity.


There is no easy answer to that question, but system designers must incorporate important ethical values in algorithms to make sure they correspond to human concerns and learn and adapt in ways that are consistent with community values. This is why it is important to ensure that AI ethics are taken seriously and permeate societal decisions. To maximize positive outcomes, organizations should hire ethicists who work with corporate decisionmakers and software developers, have a code of AI ethics that lays out how various issues will be handled, organize an AI review board that regularly addresses corporate ethical questions, have AI audit trails that show how various coding decisions have been made, implement AI training programs so staff operationalize ethical considerations in their daily work, and provide a means for remediation when AI solutions inflict harm or damages on people or organizations.
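An audit trail is only useful if later readers can trust that it has not been quietly edited. One common technique, sketched below under that assumption, is a hash-chained log: each entry stores a SHA-256 hash of its own contents plus the previous entry’s hash, so altering any earlier record breaks verification of everything after it. The `AuditTrail` class and its field names are hypothetical; this is not a description of any organization’s actual tooling, and a production system would also need access controls and secure storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only, hash-chained log of coding decisions (hypothetical sketch)."""

    GENESIS = "0" * 64  # stand-in "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()

    def record(self, author: str, decision: str, rationale: str) -> dict:
        """Append a decision, linking it to the hash of the previous entry."""
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "author": author,
            "decision": decision,
            "rationale": rationale,
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """True only if no entry has been altered, removed, or reordered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```

A review board could then check `verify()` before relying on the log, knowing that a changed rationale or a deleted entry would be detected.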

Through these kinds of safeguards, societies will increase the odds that AI systems are intentional, intelligent, and adaptable while still conforming to basic human values. In that way, countries can move forward and gain the benefits of artificial intelligence and emerging technologies without sacrificing the important qualities that define humanity.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).