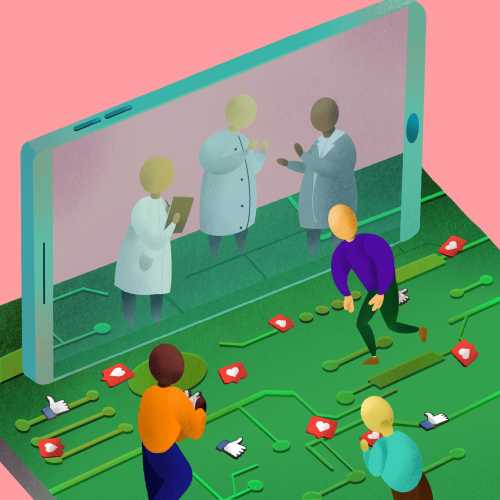

Since February 2022, Ukrainians have produced streams of live videos of warfare, including missile launches, explosions of critical assets, and the horrendous effects of Russian military aggression on civilians. Such collective documentation has appealed to the humanitarian empathies of those watching and sharing these vignettes on broadcast television and social media platforms, while reflecting an apparent lack of diplomacy and conscience among Russian leadership. Communications media have long been used or manipulated to expose the egregious nature of military action or the posture of political zealots, from the Vietnam War, which was broadcast over television networks, to videos showing the aftermath of chemical attacks in Syria. Similarly, social media and other technological tools are not only providing real-time narration of the Ukrainian conflict that ramps up viewers’ disapproval of Russian President Vladimir Putin, but are also carrying calls for public diplomacy, as exhibited in the recent video appeals by Ukraine’s President Zelensky before the U.S. Congress and the U.S. media. It is also notable that private tech and telecom companies are creating the rules on how technology is leveraged during the Ukrainian-Russian conflict, particularly since there has been little to no government oversight of their digital undertakings. In this blog, we touch upon three areas where private industry has been most active in the technological arena (social media, internet infrastructure, and content moderation) and pose questions on how the laissez-faire approach to technology during war or serious global confrontations can impact future diplomatic efforts.

Social media’s role in the Russia-Ukraine conflict

Prior to invading Ukraine, Russia increased its disinformation and propaganda capacities on social media to push false narratives supporting an invasion, speaking of the necessity to “denazify” the country and making claims of Ukrainian aggression. During the early days of the invasion, Russian state media were quick to recharacterize the conflict: blaming Russian brutality in Mariupol on neo-Nazis “hiding behind civilians as a human shield”; attacking an area by a nuclear complex while claiming to protect it; saying that it had been Ukraine, not Russia, that had indiscriminately attacked the residential neighborhoods of Kharkiv; and more. While the Ukrainian government has not actively launched comparable mis/disinformation efforts, some stories of war, such as the confrontation at Snake Island and the Ghost of Kyiv, have skirted the boundaries of fact and fiction.

Outside both countries, global citizens have also flooded social media over the last few weeks with accounts of destruction from other conflicts, including Russia’s previous annexation of Crimea, the ongoing conflict in Syria, the Israeli-Palestinian conflict, and more, making it difficult for the average online user to judge the authenticity of the content they are seeing. Followers of the American far-right have also participated in the dissemination of disinformation, including the debunked conspiracy theory that Russia invaded Ukraine to take over American-funded biolabs there.

As the Russia-Ukraine conflict continues, social media platforms, such as Meta and Twitter, have identified and de-platformed inauthentic activities and campaigns. While these companies have sought to increase their content moderation, they have also faced criticism for failing to act more promptly on such potentially dangerous content. For example, Facebook faced criticism for failing to take down conspiracy theories about American-funded biolabs in Ukraine promoted by Russian and Chinese state media, and for overlooking Spanish-language disinformation. In response to the misinformation on TikTok, the Biden-Harris administration recently intervened to brief influencers on their biggest questions surrounding the ongoing events in Ukraine.

With much of this activity occurring on privately owned platforms, the broader debate should focus on the responsibility of private tech companies to step up their content moderation of rapidly evolving events, and on whether governments should provide greater oversight. The proactive role that the EU played in immediately banning Russian news outlets suggests a role for government, but it also sets a new precedent for the handling of technology during these times.

Here in the U.S., political battles have raged over the monopolistic and deceptive controls that platform companies have in our democracy, especially via social media. While the slight uptick in de-platforming divisive content has been beneficial in disarming unnecessary divisions, it does invite further inquiry into whether these privately held online companies should be solely responsible for making such decisions, especially in real time during international crises. Or should social media companies consider governmental input, consistent with First Amendment limitations, in setting norms for content hosted on their platforms?

Internet backbone

Alongside the increased use of social media, the availability and use of internet backhaul have been a critical aspect of this international conflict. Following Russia’s invasion, Ukraine petitioned ICANN and RIPE NCC, two key internet organizations responsible for maintaining the core technologies that allow the world’s computers to talk to each other, to cut Russian internet services off from the rest of the world. Both organizations ultimately refused Ukraine’s request, stating that despite the war, their core missions were to facilitate communications, not restrict access to portions of the internet. Calls from Ukrainian officials and activists for Cloudflare and Akamai, two key web infrastructure and content delivery companies, to cease operations in Russia were similarly rebuffed. Echoing the sentiments of ICANN and RIPE NCC, both companies said they would continue to operate in Russia so that ordinary Russians could retain a secure means of accessing open and accurate information on the conflict.

Private infrastructure actors have also played unexpected roles in this conflict. Billionaire Elon Musk, the CEO of SpaceX, heeded calls to quickly provide Starlink satellite internet access terminals to Ukraine. While the extent of Ukrainian usage of the service is currently unknown, the companion app used to access the internet became the top-downloaded app in the country in March 2022. In response to efforts to strengthen internet backhaul and connectivity, Russian forces have allegedly been attempting to jam satellite terminal signals. Similarly, a cyberattack on Viasat, a satellite internet provider and Western defense contractor, which occurred in the opening hours of Russia’s invasion of Ukraine, attempted to permanently disable access terminals in Ukraine and other parts of Europe, with some success.

While cyber experts had anticipated widespread cyberattacks from Russia, the country has conducted only limited attacks on Ukraine’s communications infrastructure, and their scale has been small. This has demonstrated the limitations of cyberwar in modern warfare, showing that warfare will remain physical and bloody. Questions of infrastructure in warfare will also continue to center on who should and should not have access to international resources for staying connected, even if some type of compromise is reached between the two countries. And with private sector companies emboldened to respond to the sabotage of internet backhaul, national security concerns surrounding such novel developments will continue to surface.

Content segmentation

Following the Russian invasion of Ukraine, many Western companies have begun to retreat from Russia. Western tech companies have also limited their operations within Russia and have placed restrictions on what Russian internet users can do on their platforms. For example, YouTube has blocked all Russian state media globally, while TikTok has prevented users in Russia from posting on the platform. In retaliation against measures taken by the platforms, Russia blocked access to Facebook, Twitter, and Instagram. The country also passed a law criminalizing the uploading of “false information” surrounding the invasion of Ukraine.

As Russia’s internet isolation grows, many have begun wondering if the Kremlin will move to create controls similar to China’s Great Firewall. While the Kremlin has been able to establish oversight filters and trigger short-term internet blackouts, changing from a free internet to a more restricted one might be difficult. Russia’s attempt to ban Telegram in 2018 was largely ineffective; even government officials did not abide by the order. VPN use within Russia has already been surging in recent weeks, while government attempts to block external access have had limited success. Regardless, these developments raise important questions going forward over the role of a free and open internet during times of global conflict, and over how authoritarian countries can attempt to co-opt sovereignty over their digital space, resulting in increased online political propaganda or further restrictions on human rights and other liberties.

Going forward

The Russian invasion of Ukraine has brought forward many important questions on the role of technology and the difficult decisions that must be made to ensure openness and accessibility for citizens. In the past, wartime decisions to disable electrical or financial grids went through the hands of the government and the military. Now, decisions about content on social media and about internet infrastructure are left in the hands of private actors, adding another dimension to diplomatic decisionmaking. This can result in two potential pathways. The first will embolden closed internet architecture and communications systems that contribute to global turmoil. The other will enable information exchanges that condone acts of aggression both passive and explicit, while engaging the outside world audience as observers. While Congress debates national arguments about the role of technology in democracy, it may want to consider what it means to democratize internet governance and keep companies accountable for decisions made during this international crisis.

Thanks to James Seddon for his research assistance.

Facebook and Google are general, unrestricted donors to the Brookings Institution. The findings, interpretations, and conclusions posted in this piece are solely those of the authors and not influenced by any donation.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).

Commentary

Is there too little oversight of private tech companies in the Russia-Ukraine conflict?

March 30, 2022