Rep. Paul Gosar of Arizona has long drawn criticism for his extremist views. He has fueled the spread of conspiracy theories surrounding the Jan. 6 attack on the U.S. Capitol and the deadly 2017 white-nationalist rally in Charlottesville. Most recently, Gosar posted a video on Twitter and Instagram that depicts him killing Rep. Ocasio-Cortez, threatening President Biden, and advocating hostile anti-migrant action on the border, raising the specter of online racial violence in politics.

The Sunday-night post is not the first time white nationalists have portrayed immigrants as an apocalyptic threat. Nor is it rare for such views to be spread through online networks, even by people in power. Social networking sites enable people to share connections and view content generated by other users who participate in this virtual social system. So when then-President Donald Trump tweeted on March 16, 2020, that “the United States will be powerfully supporting those industries, like Airlines and others, that are particularly affected by the Chinese Virus,” his message of blame toward the Asian community was disseminated to millions of people. A recent study published in the American Journal of Public Health indicates this tweet precipitated anti-Asian sentiments through hashtags like #covid19 and #chinesevirus. Twitter permanently suspended Trump in January 2021 due to “the risk of further incitement of violence.”

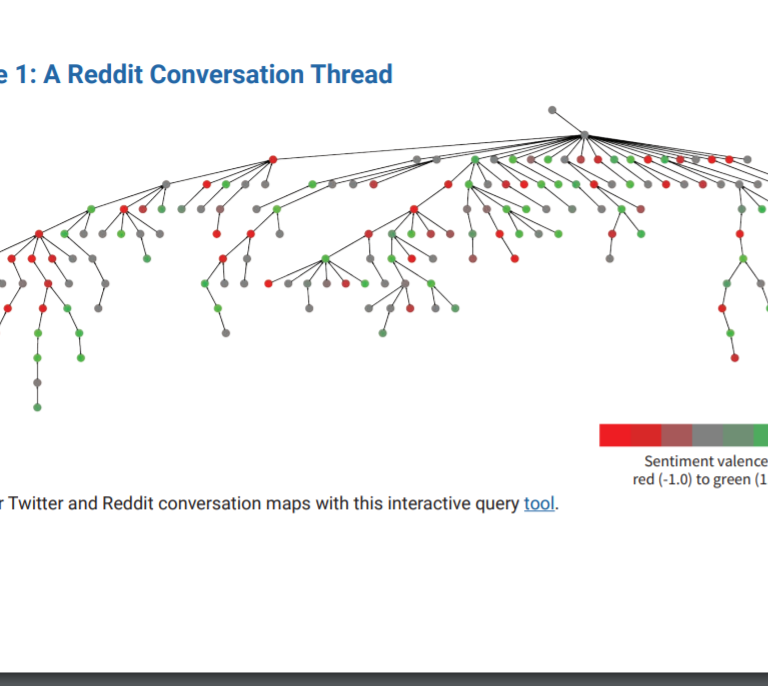

Our recent report, “Bystander Intervention on Social Media,” highlights the need for online interventions against the very real threats that can grow out of online abuse. We identify effective strategies that bystanders can use to combat hate speech and misinformation online. We conducted a quantitative and qualitative analysis of tweets and Reddit posts on five themes: systemic racism, police brutality, education inequality, employment, and wealth. Some of the conversations were small (n=2) whereas others were large (n=43,186). Each post was assigned a sentiment score using VADER, a tool designed specifically for microblog-length social media posts. Sentiment analysis evaluates how positive or negative the words used in a particular tweet or Reddit post are. We visualized the results by color-coding each post's sentiment score on a gradient from red (-1.0, the most negative) to green (1.0, the most positive) (click here to access a tool similar to the figure below).
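
The red-to-green color coding described above can be sketched in a few lines of Python. This is only an illustration, not the code used for the report: it assumes a simple linear blend between pure red and pure green, and the function names `sentiment_to_rgb` and `sentiment_to_hex` are hypothetical. The scores themselves would come from a sentiment tool such as VADER, which is not called here.

```python
from typing import Tuple


def sentiment_to_rgb(score: float) -> Tuple[int, int, int]:
    """Map a sentiment score in [-1.0, 1.0] to an (R, G, B) color.

    Assumption: a linear blend from pure red (-1.0) to pure green (1.0).
    The report does not specify the exact gradient it used.
    """
    if not -1.0 <= score <= 1.0:
        raise ValueError("score must be between -1.0 and 1.0")
    # Rescale the score to a position between 0.0 (red) and 1.0 (green).
    position = (score + 1.0) / 2.0
    red = round(255 * (1.0 - position))
    green = round(255 * position)
    return (red, green, 0)


def sentiment_to_hex(score: float) -> str:
    """Return the same color as a hex string for use in charts."""
    red, green, blue = sentiment_to_rgb(score)
    return f"#{red:02x}{green:02x}{blue:02x}"


if __name__ == "__main__":
    # Hypothetical scores for three posts: negative, neutral, positive.
    for score in (-1.0, 0.0, 1.0):
        print(score, sentiment_to_hex(score))
```

With this mapping, the most negative posts render as pure red, the most positive as pure green, and neutral posts fall midway between the two.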

Understanding how people respond to racial abuse online has broader implications for building healthy communication, calming anger and frustration, and changing attitudes. Unfortunately, we found that only one in six Twitter discussions and slightly fewer than 40 percent of Reddit discussions featured prosocial bystander action (evidence and education as well as content moderation). In the following sections, we describe five lessons from our research that we hope will encourage people to pay attention to their online networks and work to address perpetrators of hate speech and misinformation.

1. White Supremacy in a Digital Era

We found that people of color are being targeted by organized misinformation efforts using digital technologies. We identified four primary racist discourses that operate on social media: stereotyping, scapegoating, allegations of reverse racism, and echo chambers. For example, Trump’s March 2020 tweet involves scapegoating in that he blames Chinese people and China for the spread of coronavirus in the U.S., thereby absolving his government of responsibility. Addressing racism on social media requires understanding that users spread racist misinformation in different ways, sometimes compounding multiple forms of racism in a single post.

2. How to Take Action Against Online Abuse

Our research revealed that bystander intervention includes callouts, insults, efforts to educate or offer proof, and content moderation. Content moderation, as when Twitter deleted Trump’s post, reduced or eliminated online racist dialogue. Users who objected to Trump’s tweet may also have used the reply feature to express their disapproval. Others preferred to belittle the intelligence or morals of those who made racist remarks. Finally, people responded to racist misinformation by linking to external sources like Brookings Institution studies. This variety of responses implies that social media users have varied perspectives on how best to combat online racism.

3. Different Platforms, Different Strategies

Although users on Twitter and Reddit drew on comparable techniques, our research found that they combat online racism in different ways. While users on both sites emphasized education and evidence, Twitter reactions featured more callouts, insults, and mockery than Reddit posts. Reddit’s features, however, allowed for more significant moderation of racist posts, such as moderator bots or downvoting. Since platforms differ in the features that shape online interactions, users have to consider the limitations and possibilities for meaningful intervention on the social networking software they use. Reddit, for its part, has built-in accountability mechanisms that increase prosocial strategies and reduce antisocial strategies.

4. Not All Bystander Intervention Strategies Work Equally

While there are several techniques for combating online racism, not all of them are equally effective. For instance, our study reveals that education, evidence, and content moderation are prosocial techniques. These reactions to racist posts foster dialogue even as they seek to debunk racist rhetoric. On the other hand, some methods, such as callouts, ridicule, and insults, were antisocial. These methods failed to minimize hostility among users or against people of color. Therefore, internet users who want to speak out against online racism must consider the purpose of their interactions. If they want to reduce the presence of racism on social media, they must keep in mind that certain approaches may have the opposite effect.

5. We Need More Bystander Intervention

While we identified several intervention tactics, we also found that users on both platforms tended to refrain from intervening in racist conversations at all. Only around one in every six Twitter conversations and somewhat fewer than 40 percent of Reddit discussions included bystander intervention. Considering that “90 percent of teenagers report witnessing bullying online,” bystander intervention is vital. Our report documented the consequences of bullying for mental health, including depression, anxiety and, at its worst, suicide.

We also suggest that racial minorities may face different types of cyberbullying, experience less empathy from bystanders, and, in turn, have fewer people engage in bystander intervention when they are bullied. The tragedies that result from under-intervention are what we see in the cases of children like 9-year-old McKenzie Adams and 10-year-old “Izzy” Tichenor. The very people meant to be protecting Izzy from abuse stood by as she and many other Black students across Utah's Davis School District were called the N-word and subjected to other racial harassment, including taunts referencing monkeys, apes, slavery, and lynching. A continued lack of bystander intervention from her peers and school administrators alike contributed to Izzy dying by suicide.

“Silence and inaction do nothing but cause biased perpetrator behaviors to proliferate as they feel unquestioned.” This is one of the most important implications of our analysis. Targeted aggressions can have real consequences for a victim’s mental and physical health. When bystanders step in to make aggressions visible, disarm the situation, educate the perpetrator, and seek external reinforcement or support, they provide crucial help in preventing some of the most detrimental effects. Understanding the best strategies for online bystander intervention is the first step in targeting aggression online. If we want to see a genuine change in how social media users discuss racism, we must foster a digital culture that values prosocial discourse.

The Brookings Institution is committed to quality, independence, and impact.

We are supported by a diverse array of funders. In line with our values and policies, each Brookings publication represents the sole views of its author(s).

Commentary

Combating racism on social media: 5 key insights on bystander intervention

December 1, 2021