Artificial intelligence (AI) presents a unique opportunity to transform core governmental functions in the face of constrained resources and increased public demand, enabling governments to do more with less. Governments, businesses, universities, nonprofits, and other entities are already integrating AI models into organizational processes to improve tasks and services, such as health care provision, language preservation, economic development, research, and administrative planning. Tribal Nations occupy a unique position within this landscape to thoughtfully explore, shape, and lead the use of AI in ways that advance Tribal sovereignty, strengthen governance, and reflect community values.

As of December 2025, there are 575 federally recognized Tribal Nations in the United States that each maintain a nation-to-nation relationship with the federal government. While distinct in their respective histories, languages, cultural practices, and people, each is a sovereign nation with the inherent authority to self-govern and make decisions about how to manage its internal affairs. The right to self-governance and self-determination extends beyond traditional government functions, such as providing services like health care and education to Tribal citizens. Tribal Nations retain other sovereign powers, including the authority to tax; pursue economic development ventures; determine how their lands, territories, and natural resources are used; manage their own infrastructure, including digital networks; and manage the use of emerging technologies when it implicates internal interests.

As AI becomes increasingly embedded into organizational structures worldwide, AI governance has evolved into a foundational component of a Tribal Nation’s digital sovereignty strategy. The term Tribal Digital Sovereignty (TDS) describes a Tribal Nation’s authority to control and manage its digital ecosystem by establishing governance structures for how assets, resources, and technologies are collected, stored, used, and shared both within the community and beyond. This includes managing data; physical infrastructure, such as wireline or wireless networks; digital assets, such as wireless spectrum; and the use of software and other emerging technologies within its lands and territories.

Drawing on research, discussions, and feedback from the recent AI in Indian Country Conference, this paper explores AI’s opportunities and risks for Tribal Nations. The terms Tribal Nation, Tribe, and Tribal government are used interchangeably throughout this paper for stylistic purposes. Although the terms Indigenous, Native American, American Indian, and Alaska Native describe communities, identities, and populations connected or adjacent to Tribal Nations, they are not used in this paper. Rather, the intention of the authors is to emphasize the political and sovereign identity of a Tribe as a nation, a role that “Tribal Nation” captures more fully.

This paper examines how AI implicates data privacy, bias, misrepresentation, and unauthorized use of sensitive cultural information of Tribal Nations, along with use cases among Tribal Nations that are actively engaging emerging technologies. This paper also examines other themes from the conference, including sovereignty as a design principle; data governance; capacity and competence; interconnected digital ecosystems; and ethics and cultural continuity, all of which are aspects of AI governance. Each of these factors requires careful consideration by Tribal Nations leveraging AI. At the end of the paper, the authors share recommendations for Tribal leaders, government, and industry that reflect feedback from conference attendees about Tribal leaders’ role in governance, use cases, and policies that comply with Tribal code, while regulating to limit systemic biases in design and implementation.

Background

The data and recommendations presented rely on the engagements at the one-day conference on AI in Indian Country on Sept. 26, 2025, which was hosted by the American Indian Policy Institute at Arizona State University’s Sandra Day O’Connor College of Law. The conference boasted 10 panels, 30 speakers, one keynote, and 200 attendees, most of whom were from or represented Tribal Nations. The speakers, panels, and discussions were recorded, and transcripts were created that were later summarized using NotebookLM, which generated an overview and synthesis of the content discussed.

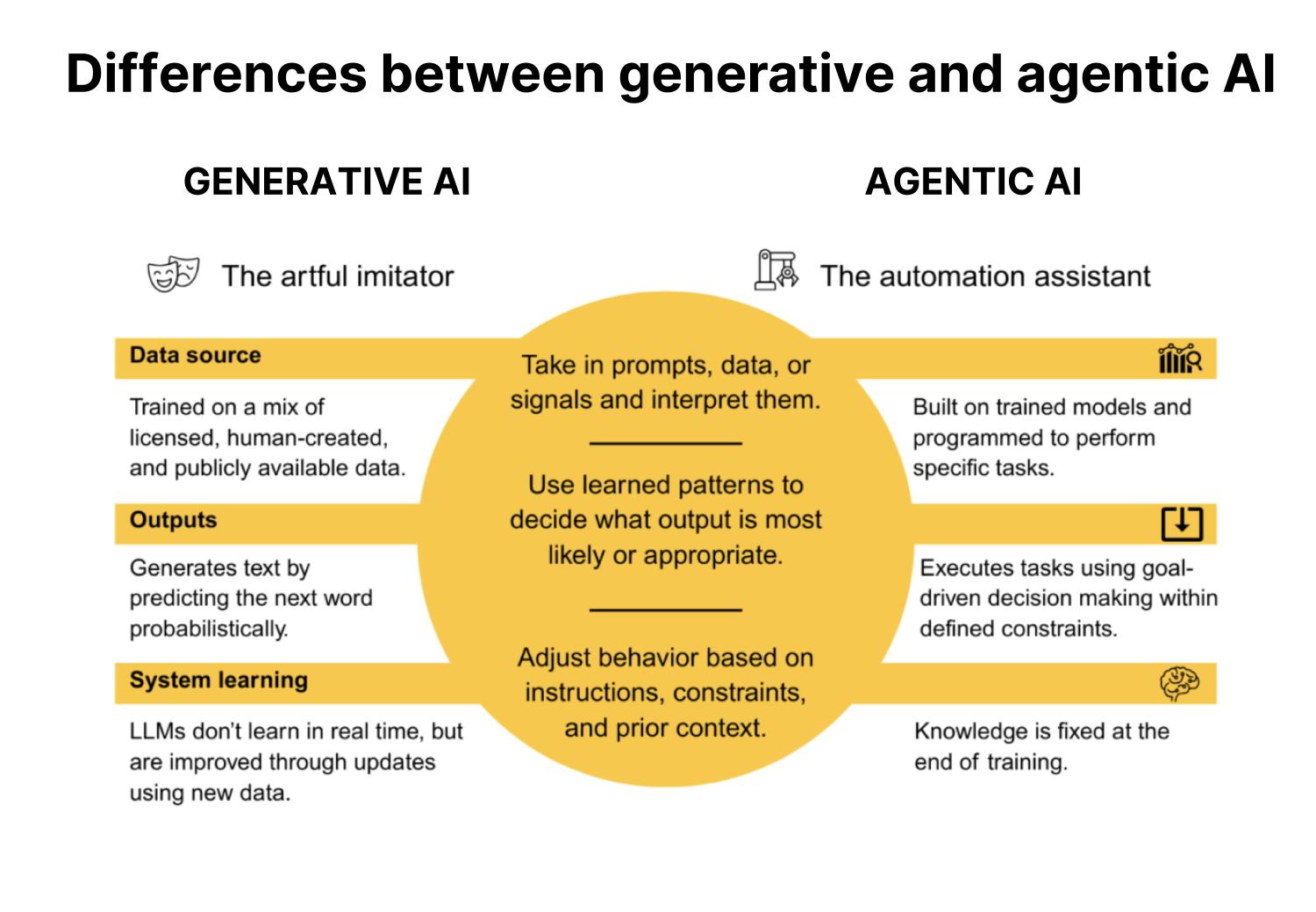

This paper makes distinctions between two types of AI: generative and agentic. Generative AI describes models that create new content or outputs. Large language models (LLMs) like ChatGPT are a type of generative AI that analyzes large datasets, acting as a sophisticated pattern matcher to predict the next word in a sequence using probability and statistical models. Agentic AI is coded for a specific purpose and trained to perform tasks autonomously. Unlike generative AI, agentic models are not limited to generating responses to specific prompts; instead, these systems mimic human decisionmaking processes and can adapt to changing circumstances.

While modeled after human thought processes, generative AI does not replicate human thought; it makes a statistically informed guess in response to a user-generated prompt. A generative model’s data corpus is often frozen at the point in time that it completed its training—the implication being that if, for example, a new law was enacted or a major new event occurred, the model would not be aware of those developments without additional training. The model cannot autonomously adapt to new developments as they occur. While agentic AI relies on generative models, it differs in that it is entirely autonomous and dependent on a chain of chatbots to complete a task (see Figure 1).
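To make the idea of a "statistically informed guess" concrete, the following is a minimal sketch of next-word prediction using simple word-pair counts. It is a toy illustration only, not how ChatGPT or any commercial model is built; the function names `train_bigram_model` and `predict_next_word` and the three-sentence corpus are assumptions introduced here for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        following[current_word][next_word] += 1
    return following


def predict_next_word(model, word):
    """Return the most frequent follower and its estimated probability.

    Returns None when the word never appeared in the training text.
    """
    followers = model.get(word.lower())
    if not followers:
        return None
    best_word, count = followers.most_common(1)[0]
    return best_word, count / sum(followers.values())


# Hypothetical training corpus, fixed ("frozen") at training time.
corpus = (
    "the council met today . "
    "the council voted today . "
    "the committee met yesterday ."
)
model = train_bigram_model(corpus)

# "council" followed "the" in two of three cases, so it is the top guess.
print(predict_next_word(model, "the"))
# A word absent from the frozen corpus yields no prediction at all.
print(predict_next_word(model, "statute"))
```

The second call illustrates the frozen-corpus limitation described above: anything that was not in the training text is invisible to the model until it is retrained.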

The conference panels and resulting dialogue demonstrated a shared vision for Tribal Nations seeking to develop an AI adoption strategy and governance policies that align with community values and priorities and elevate Tribal sovereignty. The discussions revealed five general themes that can serve as a roadmap for Tribal Nations embarking on an AI deployment journey. These themes, shared below, guide the considerations and inform the recommendations explored in the final section of this white paper.

The definition and significance of sovereignty for Tribal Nations

While the U.S. recognizes Tribal Nations as sovereign today, the relationship between the federal government and Tribal Nations is informed by a complex and often difficult historical relationship spanning centuries that included harmful eras of federal policy when Tribal Nations were “subject to enduring efforts to strip them of their land, their possessions, and even their identities.” As the relationship between the sovereigns evolves, so do the opportunities for Tribal self-governance. Yet the primary objective remains unchanged: At the heart of Tribal sovereignty is a duty to protect what is most valuable and precious to the Tribal Nation. In the digital era, that means protecting Tribal data, such as sensitive Tribal citizen information, financial records, or recordings featuring sacred cultural practices and traditions.

Data provides the currency for AI models’ utility. AI programs are trained on data, referred to as a data corpus, consisting of publicly available and proprietary information. A public AI model’s corpus can consist of information scraped from sources, such as books, journal articles, news articles, websites, and social media posts. Public AI programs are typically available at no cost, but information uploaded into the system when drafting a prompt then becomes part of the model’s data corpus; it is used to further train the model and can inform future outputs generated by other users. Alternatively, organizations may opt for a secure AI model trained only on the organization’s internal information. Secure AI models are often more expensive to build and require technical expertise to maintain, but they also offer more protections for sensitive data an organization, or Tribal Nation, may want to protect from public access. Whether publicly available or proprietary, data is an integral component of AI technology.

For Tribal Nations, the role of data in AI models reflects more than just simple data points in a larger data corpus. One conference participant observed that “data is kin” because it represents people, as well as individual and shared histories. It reflects a community’s unique institutional, cultural, and economic priorities, bridging its core mission and values with the policies adopted to advance those goals. Tribal Nations have endured government-sponsored efforts to dispossess them of valuable resources in the past, such as land, natural resources, and cultural and religious practices, and survived attempts to rob them of their futures by stealing their children. Among a Tribal Nation’s most valuable resources are its people, and when data represents people, the consequences of a data-dependent technology like AI permeating a Tribal community come into sharp focus.

Despite AI’s modernity, history is rife with information and data-based exploitation of Tribal Nations. Nineteenth- and 20th-century anthropologists routinely dispossessed Tribal Nations of sacred artifacts, human remains, and funerary objects without consent, and many of these remain in U.S. museums today. It was not until the 1980s, for example, that the Passamaquoddy Tribe regained access to an 1890 recording of their language created by a researcher visiting the community and eventually held by Boston’s Peabody Museum. More recently, the Standing Rock Sioux Tribe filed suit against the Lakota Language Consortium, an organization that the Tribe claims exploited its elders and community members for Lakota language recordings that it now refuses to share with the Tribe.

Tribal Nations and their citizens also experienced dispossession of personal health and genetic data. The U.S. military’s iodine-131 experiment in the 1950s was an attempt to improve service member capabilities in the Arctic and raised significant ethical concerns. Alaska Native test subjects were instructed to ingest radioiodine capsules and endured invasive medical procedures where biological data, including blood, urine, and saliva, were collected to study whether the test subjects’ thyroid glands somehow made them better equipped to survive in arctic conditions. President Clinton issued a formal apology for the study in 1995, but contemporary examples of health and genetic data dispossession remain. In 2004, the Havasupai Tribe filed suit against the Arizona Board of Regents and Arizona State University after learning that Tribal citizen blood samples originally collected as part of a Type 2 diabetes research study were used in unrelated and different genetic studies. The case ultimately resulted in a settlement agreement in favor of the Tribe. And most recently during the COVID-19 pandemic, Tribal Nations learned that sensitive member and government data provided to the U.S. Treasury to access COVID-19 funds were leaked to other federal agencies.

These instances constitute just some of the myriad examples of Tribal Nations experiencing misuse of sensitive government and member data by outside entities, underscoring the unique considerations AI adoption and use presents for a sovereign nation committed to protecting its citizens. Data remains the foundation for AI models, and Tribal Nations know firsthand the consequences of data misuse and loss. For Tribal Nations, AI governance extends beyond optimizing government functions and increasing operational efficiency. AI, and any emerging technology dependent on information, necessarily implicates the Tribal Nation’s survival. The historic and nonconsensual use of Tribal Nation resources and data demonstrates the need for intentional data stewardship by Tribal Nations in the age of AI, and the adoption of robust governance systems that reflect the Tribal Nation’s values.

The risks and rewards of AI

There is an inherent tension between AI’s powerful potential as a tool for efficiency and the threat of unintended consequences resulting from its use. That is why the objective for Tribal Nations is to make a value judgment on how to delicately balance the opportunities with the risks, and craft an AI strategy that reflects its values while proactively mitigating vulnerabilities deemed unacceptable.

Opportunities

Tribal Nations are already contemplating, planning, and in some cases using AI models for their time-saving and streamlining capabilities. According to a survey, 84% of the 200 conference attendees were already using AI in some capacity. To increase efficiency of government functions, Tribal Nations whose internal processes feature traditional siloed decisionmaking and rely on isolated datasets can utilize AI to facilitate data integration and synthesis through knowledge mining and retrieval. Some examples are included below.

- The Cherokee Nation created an AI legal agent using Microsoft Copilot to unify fragmented legal sources, such as Tribal court decisions and executive orders issued by chiefs, into a single data repository.

- The Morongo Band of Mission Indians created an internal chatbot to more efficiently cross-reference cultural customs with various internal sources of legal authority, like Tribal council resolutions, ballot referenda, and ordinances.

Beyond data integration, AI models offer additional opportunities for Tribal communities. The Cherokee Nation is using commercial AI for cultural preservation by facilitating 3D printing of turtle shells used in stomp dance regalia. Anishinaabe roboticist Danielle Boyer utilized AI to create the “Skobot,” a physical bot designed to help children learn endangered languages by generating responses to questions in a targeted language. IrrigOpt AI developed an AI platform to provide hyperlocal irrigation guidance to Yakama Nation communities to help lower farming costs, reduce water waste, and address the impacts of regional droughts.

More broadly, attorneys and Tribal advocates are using AI to help speed up the legal research process and draft court filings. Tribal health care providers are thinking beyond generalized patient care and brainstorming ways AI can facilitate targeted and personalized care, reflecting a shift in values from managing symptoms of illness to holistic healing and well-being. In education, teachers are mapping out new ways that AI can improve lesson planning. AI can also be used to vet business opportunities and enhance economic development in Tribal communities. For example, Tribal Nations could develop a prompt detailing the terms of an offer, the industry, and relevant financial information with instructions to evaluate its advantages and vulnerabilities.

In summary, AI offers Tribal Nations a multitude of potentially transformative benefits. It simultaneously poses significant risks that, without proactive mitigation efforts, may undermine a Tribe’s core values and underlying digital sovereignty strategy.

Risks

AI remains a highly technical, complex, and abstract technology due to a lack of both transparency and insight into its exact internal decisionmaking process. The opacity of decisionmaking brings little clarity to the possible biases that are embedded in AI models from design to implementation. Tribal Nations are uniquely suited to ensure AI use remains grounded in fairness and accountability.

Data deficiencies and exploitation also create angst when it comes to representative and trustworthy AI models. Among the most concerning weaknesses of generative AI is the possibility that a model may generate an output based on incomplete information, or produce answers that lack a factual basis, known as hallucinations. Hallucinations pose risks for all users because they are born out of a generative AI system’s design mandate: These systems are programmed to provide a definitive answer to a prompt, even when the internal confidence level on the answer’s accuracy is low. Both forms of ungrounded answers pose significant threats to Tribal Nations because of their potential to undermine informed decisionmaking or compromise the success of specific use cases.

During the conference, a panelist speaking to AI’s potential to influence language and culture revitalization shared a hallucination example that illustrates the potential to undermine these efforts without additional scrutiny. A fluent Navajo language speaker used ChatGPT to test the program’s ability to learn and speak the language. The speaker found that the program not only generated incorrect pronunciations of existing words but confidently generated false words and phrases that simply did not exist in the Navajo language.

Similarly, Anishinabek News recently reported that an AI-generated book purporting to contain resources on Anishinaabemowin, or Ojibwe, language verbs was listed on Amazon. Inaccurate language outputs like these risk polluting and curtailing language revitalization programs intended to remedy the long-term effects of harmful national policies, such as Indian boarding schools, where Native children were forbidden from and often punished for speaking their languages. As a result, these languages are endangered and at risk of extinction altogether. The Anishinabek Nation’s Anishinaabemowin Coordinator Laurie McLeod-Shabogesic explained that “inaccurate resources such as [the book] not only hurt learners who later have to unlearn this misinformation, but the resources also compromise the long-term integrity of our languages.”

The need for Tribal Digital Sovereignty

Beyond hallucinations, additional data protection concerns arise in the context of cybersecurity, such as prompt injection—when a malicious user prompts the AI model to ignore its default safety programming to generate prohibited content, such as sharing sensitive information or generating misinformation. Because data remains a valuable resource for Tribal Nations, the risk of Tribal data loss, unauthorized use, or misrepresentation is significant and demonstrated by a multi-century effort to divest Tribal Nations of their most valuable resources.

These risks compound when utilizing open or public AI models, which can eliminate the ability of Tribal Nations to protect sensitive cultural and citizen data from unauthorized use and from reaching unintended audiences. Inaccuracies in core cultural values resulting from ungrounded AI answers risk misrepresenting a Tribe’s unique history, values, and belief systems as well as perpetuating bias and harmful stereotypes in decisionmaking processes that rely on AI to analyze data. Proactive governance featuring robust safeguards is a necessity.

Tribal Digital Sovereignty provides a foundation for Tribal Nations to develop governance systems that both protect the Tribe’s inherent sovereign authority to control how AI exists within its jurisdiction and reflect the Tribe’s unique cultural and institutional values. Instituting governance through AI acceptable use policies or Tribal codes without responsible and careful planning is not without risks. While this section is not an exhaustive list of the potential challenges associated with AI adoption and use, it serves as a guide to Tribal Nations thinking about mitigation strategies that can minimize, if not altogether eliminate, those risks.

Developing more inclusive AI for Tribal Nations

Currently, Tribal Nation leaders are underrepresented in national discussions on AI, leading to either underinclusive data or practices that omit the lived experiences of Tribal Nations. As Tribal communities contemplate how to respond to the rapid rise in AI technology, the proposed recommendations in the next section reflect feedback provided at the conference by Tribal Nation leaders and community members. They demonstrate the value of establishing data infrastructure reflective of Tribal Nation values, norms, and practices that return value to the Tribes. The recommendations also assume that Tribal Nations are diverse. What is important will vary by Tribe, geography, and population; much of that will be determined by a Tribal Nation’s existing governance policies. The proposed recommendations include implementing data hygiene practices, defining core values, establishing strong governance structures, engaging in thoughtful policy development rooted in community values, and implementing robust risk mitigation measures, all of which are detailed below.

Proposed recommendations to achieve a community-centric AI strategy

This section is divided into recommendations for two separate audiences: (1) Tribal leaders seeking to better understand how to develop an AI strategy; and (2) non-Tribal entities, such as government and AI companies, seeking to partner with Tribal Nations in ways that respect community values. These recommendations elevate Tribal sovereignty to the forefront of the decisionmaking process.

Proposals for Tribal Nations

Organize and protect data to train AI models for Tribal communities

With a general understanding of both the benefits and risks of AI use, Tribal Nations seeking to establish an AI adoption strategy should begin with a foundational concept in mind: An AI model is only as effective as the data used to train it. Clean, accurate, and organized data contributes to more accurate AI outputs. Tribal Nations can begin by taking inventory of existing internal data and new data as part of both model prep and general data prep and classification. The data classification process can include identifying sensitive personally identifiable information (PII) or protected health information (PHI) a Tribal Nation may seek to secure from use in an AI model and data that it deems acceptable for AI use. Standardizing or digitizing existing datasets may also be helpful. When creating or adapting AI models to Tribal Nation needs, integrating privacy-by-design early in the planning process is equally important, especially when dealing with sensitive or historically protected information.
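The inventory and classification step described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a prescribed method: the field names in `PII_FIELDS` and `PHI_FIELDS`, the `Dataset` class, and the sample datasets are all hypothetical, and each Tribal Nation would define its own categories. Note that anything the rules do not recognize is routed to human review rather than being treated as acceptable for AI use.

```python
from dataclasses import dataclass

# Hypothetical sensitivity rules, for illustration only.
PII_FIELDS = {"name", "enrollment_number", "date_of_birth", "address"}
PHI_FIELDS = {"diagnosis", "prescription", "lab_result"}


@dataclass
class Dataset:
    name: str
    fields: list


def classify(dataset):
    """Return 'PHI', 'PII', or 'needs_review' based on field names alone."""
    names = {field.lower() for field in dataset.fields}
    if names & PHI_FIELDS:
        return "PHI"
    if names & PII_FIELDS:
        return "PII"
    # No automatic approval: unmatched datasets still need a human decision.
    return "needs_review"


def build_inventory(datasets):
    """Map each dataset name to its classification label."""
    return {dataset.name: classify(dataset) for dataset in datasets}


# Hypothetical datasets a Tribal government might hold.
datasets = [
    Dataset("clinic_visits", ["enrollment_number", "diagnosis"]),
    Dataset("citizen_registry", ["name", "date_of_birth"]),
    Dataset("council_resolutions", ["resolution_id", "text"]),
]
inventory = build_inventory(datasets)
for dataset_name, label in inventory.items():
    print(f"{dataset_name}: {label}")
```

Matching on field names is deliberately crude; it is a starting point for an inventory, and culturally sensitive material in particular would need review by people with the authority to make that judgment.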

Embed community values in AI models and governance

The Cherokee Nation of Oklahoma’s AI acceptable use policy, and the three guiding principles informing it, are a groundbreaking example of values-focused governance in the digital space that could serve as a model of how to embed community values in AI governance. The Tribe is no stranger to utilizing technology to enhance its operations and has created a digital access portal to improve service delivery to its over 450,000 Tribal citizens. With an information technology (IT) team consisting of 59 employees, Cherokee Nation leadership shared at the conference that they recognized AI could alleviate some of the pressure on IT to manage the Tribe’s extensive digital footprint. The Tribe established a taskforce with the purpose of ensuring that AI policy development was grounded in Cherokee community values and identified three core beliefs relevant to AI adoption: (1) resourcefulness, (2) protection, and (3) outreach and engagement.

According to Cherokee Nation leadership, Tribal Nations are inherently resourceful because they are experienced at doing more with less and at their strongest when they embrace principles of adaptability, creativity, and resiliency. AI is, in many ways, a tool that embodies resourcefulness and ingenuity. It can expand the reach of existing resources by expediting and streamlining internal processes, improving service provision outcomes through enhanced data analytics, and expanding citizen access to vital Tribal government programs.

But the taskforce recognized that AI’s potential benefits cannot outweigh the Cherokee Nation’s mandate to serve in accordance with its other core values. Tribal leadership has therefore resolved to use the technology in a manner that avoids outsourcing human labor to machines or deploying it for purely cost-saving purposes. Outreach and engagement in the AI adoption process are also critical; this value suggests that AI use policies be implemented gradually and gently, through clear instruction, and with thoughtful encouragement. Since the taskforce’s creation, the Cherokee Nation has adopted a robust AI acceptable use policy, identified multiple use cases, and continued to scale its AI use as community needs evolve. This example of leadership and community engagement in AI planning and adoption may provide lessons to others attempting to do the same.

Develop AI literacy tools and raise awareness of AI among Tribal Nations collectively and by individual community

Tribal Nations should consider how much people know about AI and its individual and community implications. But rather than skipping ahead to policy development, Tribal leaders can take action to acquire a basic level of understanding and technical knowledge, or general literacy, on AI. For the Morongo Band of Mission Indians, the learning process involves more than just watching a short training video: It requires developing a level of proficiency in the subject matter before regulating it. Modeled after language learning methods, the Tribe explained at the conference that it follows a 30% competence rule, which recognizes that a learner reaches proficiency in a new subject area when they master about 30% of the content. Striving for a baseline level of understanding among Tribal leadership reduces the possibility of AI misuse and lays the foundation for optimization throughout the planning process. Within the community, increased Tribal citizen awareness of AI technology improves transparency in governance by helping community members understand the ways in which the Tribal Nation is using AI to perform government functions.

Establish governance practices and policy development strategies

Armed with an understanding of AI, Tribal Nations can create governance structures to guide AI policy formation. This may begin with establishing a group of AI leaders to form an advisory board, steering committee, taskforce, or otherwise dedicated position tasked with contemplating data-centered actions on a global scale, similar to how the Chickasaw Nation reimagined its internal data planning processes when it created a director of data stewardship position. The structure of the group or leadership team itself matters less than the talent and viewpoints within it. The goal is to form a visionary group that is representative of the community, multigenerational, and inclusive of expertise in diverse policy areas, such as commerce, cultural preservation, education, health care, and public safety.

A Tribal Nation might begin by identifying Tribal government employees working across various departments with subject matter expertise in specific policy areas, with or without AI experience. For example, a health care provider at an Indian Health Service (IHS) facility who lacks experience using AI may nevertheless have valuable insight into how health care provision can be improved for patients. Viewpoints from community members who are not Tribal government employees, such as elders and traditional knowledge keepers, may also provide critical feedback on how a Tribal government’s efforts to deploy AI impact the greater community.

The group leading this effort, whether limited to elected Tribal government leadership, an advisory board, or a combination of the two, should clearly define roles and responsibilities. The group should first establish which people can exercise decisionmaking authority or if group-based decisionmaking—such as by consensus or a majority vote—will be relied upon instead. Then the planning process can shift to identifying the vehicle through which a Tribal government AI policy will be communicated and memorialized, and whether existing Tribal government policies should be updated to reflect an evolving technical landscape.

To start, a Tribal Nation can create an internal acceptable use policy that clearly defines when AI use in government processes is allowed and when it is prohibited. The substance of the policies adopted will largely vary depending on a Tribal community’s unique cultural values. The policy can formally reference those values and include relevant definitions of technical terms used, an explanation detailing the policy’s scope, examples of authorized and prohibited use, and user responsibilities. It can also identify educational resources for employees seeking to improve their understanding of AI generally.

A Tribal Nation may also need to create or update an existing internal data use policy to define what Tribal data will be used to train AI models, if any. When internal AI use is clearly defined, a Tribal Nation’s legislative body may also consider updating existing Tribal codes or enacting new laws and regulations that govern the use of AI by outside entities and third parties and that clearly define what data is off limits. Doing so is not only an exercise of Tribal Digital Sovereignty and self-determination—it also creates transparency and sets expectations for outside organizations looking to form partnerships with Tribal Nations or conduct business on Tribal lands.

Integrate mitigation strategies into AI adoption plans to minimize risk

AI offers substantial benefits, but it also raises significant concerns, including a lack of transparency into a model’s decisionmaking process, data privacy standards, tendency to hallucinate, and susceptibility to cyberattacks. However, Tribal Nations can proactively reduce these risks by adopting robust mitigation strategies, beginning with how Tribal government employees use AI in the workplace. Best practices demonstrate that generative AI should be used only as a tool; it is not a substitute for human judgment. Tribal leadership may consider cautioning or altogether limiting the use of publicly available AI applications in the workplace to insulate the organization from an increased risk of hallucinations or outputs based on incomplete information. Rather than prohibit their use altogether, a mitigation strategy might instead specify that public models be used only for limited purposes such as research synthesis, outline organization, or brainstorming—with the caveat that sensitive Tribal data should not be used when entering prompts, and that any output must be verified independently for accuracy.
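One way to support the caveat about keeping sensitive Tribal data out of prompts is a simple screening step that runs before text is sent to a public model. The sketch below is illustrative only: the enrollment-number format `ENR-` followed by six digits and the entries in `RESTRICTED_TERMS` are assumptions made up for this example, and pattern matching of this kind will miss many forms of sensitive content, so it complements staff training and human review rather than replacing them.

```python
import re

# Hypothetical patterns; a Tribal Nation would substitute its own identifiers.
SENSITIVE_PATTERNS = {
    "social_security_number": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # Assumed enrollment-number format, invented for illustration.
    "enrollment_number": re.compile(r"\bENR-\d{6}\b"),
}
# Placeholder phrases that a policy might bar from public-model prompts.
RESTRICTED_TERMS = {"ceremony recording", "medical record"}


def screen_prompt(prompt):
    """Return a sorted list of reasons the prompt should not go to a public model.

    An empty list means no rule matched; it does not prove the prompt is safe.
    """
    reasons = [
        label
        for label, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    ]
    lowered = prompt.lower()
    reasons += [
        f"restricted_term:{term}" for term in RESTRICTED_TERMS if term in lowered
    ]
    return sorted(reasons)


safe = screen_prompt("Summarize best practices for grant report outlines.")
flagged = screen_prompt(
    "Draft a letter for citizen ENR-123456 about their medical record."
)
print(safe)     # no rules matched
print(flagged)  # lists each rule that matched
```

A design choice worth noting: the function reports why a prompt was flagged instead of silently blocking it, which gives employees a chance to learn the policy as they work.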

Tribal Nations can reduce risk by opting for secured AI applications trained only on internal data. For example, some companies that operate publicly available AI models will create private or “locked-down” versions accessible only to the requesting entity. However, secure models can be costly to build and maintain. Formal partnerships with AI companies might also require lengthy negotiation processes to ensure enhanced data protection measures that honor Tribal Digital Sovereignty are incorporated into formal legal agreements memorializing a partnership. Tribal Nations might consider joining forces and leveraging their collective bargaining power, such as through a formalized AI Tribal consortium, to negotiate more favorable terms in AI agreements across Tribal communities more broadly.

Tribal Nations also can take additional steps to enhance internal cybersecurity measures to protect sensitive data. Before subjecting internal data to an AI model, Tribal governments may consider engaging in identity and access management. Through active directory assessments, this practice aims to limit access to an organization’s digital resources, such as sensitive data and secured applications, to the right users. A hypothetical user’s account may mistakenly contain permissions to access certain highly sensitive Tribal government data that might inadvertently become part of the AI model’s data corpus. Ensuring that user permissions are up to date and accurate protects the organization from unauthorized data use.
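The permission review described above can be approximated by comparing what each account is actually granted against what its role is supposed to have. The following is a minimal sketch under stated assumptions: the roles, permission names, and accounts are hypothetical, and a real assessment would read this information from the organization's directory service rather than from hard-coded values.

```python
# Hypothetical role baselines: the permissions each role is meant to have.
ROLE_BASELINE = {
    "clerk": {"public_records"},
    "health_admin": {"public_records", "health_records"},
}

# Hypothetical accounts with the permissions currently granted to them.
accounts = [
    {"user": "user_a", "role": "clerk",
     "permissions": {"public_records", "health_records"}},
    {"user": "user_b", "role": "health_admin",
     "permissions": {"public_records", "health_records"}},
]


def find_excess_permissions(accounts, baseline):
    """Return {user: [permissions beyond the role baseline]} for flagged users.

    Accounts with an unknown role are compared against an empty baseline,
    so every permission they hold is flagged for review.
    """
    findings = {}
    for account in accounts:
        allowed = baseline.get(account["role"], set())
        excess = account["permissions"] - allowed
        if excess:
            findings[account["user"]] = sorted(excess)
    return findings


findings = find_excess_permissions(accounts, ROLE_BASELINE)
for user, excess in findings.items():
    print(f"{user} holds permissions beyond its role: {', '.join(excess)}")
```

Here the clerk account is flagged because it can reach health records it should not see, which is the kind of stale permission that could let sensitive data flow into an AI model's data corpus unnoticed.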

Lastly, Tribal Nations might also consider the type of infrastructure they use for data storage, whether cloud-based or on-premises. A cloud-based solution featuring remote servers managed by a third-party entity, while typically less expensive, limits a Tribal government’s ability to control data privacy and security. On-premises solutions require a hefty up-front investment, but their local servers can be built on Tribal lands and managed directly by a Tribal Nation or Tribally owned entity, an arrangement that reflects fundamental digital sovereignty principles.

Proposals for governments and industry

Entities that seek to work with Tribal Nation data in AI models, or that endeavor to partner with a Tribe to adopt, deploy, and use AI in Tribal communities, must recognize that Tribal Nations remain sovereign governments. Accordingly, their status as nations creates additional requirements that governments and AI companies should consider when working alongside Tribal Nation partners.

The trust responsibility lays the foundation for good partnerships between governments

The federal government is obliged to uphold the federal trust responsibility, a legally enforceable duty to act in a Tribal Nation’s best interest. The U.S. Supreme Court has upheld the trust responsibility time and again, and the doctrine is informed by the centuries-long historical relationship between the two sovereigns. It dates back to an 1831 Supreme Court case, Cherokee Nation v. Georgia, in which the Court recognized that the relationship between the United States and the Tribes was akin to that of a “ward to his guardian.” Today, the federal trust responsibility is a legal obligation to protect Tribal lands, resources, assets, treaty rights, and the right to self-governance and self-determination.

The right to self-govern internal matters includes the authority to enact laws and regulations around AI use that a Tribal Nation has deemed acceptable within its jurisdiction. While a comprehensive set of federal AI laws and regulations does not yet exist, the current presidential administration has signaled an intention to prioritize eliminating barriers to AI development and deployment through executive orders. The trust responsibility, however, obligates the federal government to protect and respect a Tribal Nation’s authority to govern its own AI use and the use of AI by others when it implicates Tribal Nation data.

Furthermore, as federal agencies continue to adopt AI models in programs that provide services to Tribal Nations or that analyze Tribal citizen data, the trust responsibility imposes a duty to meaningfully engage with Tribal Nations through formal Tribal consultation sessions on how sensitive data is used. Good faith efforts to ensure Tribal leaders can guide federal policy development when federal agency AI use concerns Tribal Nation data and resources lay the foundation for strong government-to-government partnerships.

Tribal values should inform industry practices when it comes to the design and deployment of AI

As each federally recognized Tribal Nation is a unique and distinct sovereign, AI companies planning business partnerships with Tribal Nations should familiarize themselves with the Tribal Nation’s unique laws, as well as general federal Indian law principles that inform business relationships with sovereign nations. When AI models touch Tribal Nation data or operate within Tribal lands, negotiating each entity’s roles, responsibilities, and obligations in good faith is crucial. In addition to general best practices for crafting transactional documents, AI companies should be amenable to legal agreements that employ sweeping data protection provisions, ideally with language that honors Tribal sovereignty. Such provisions might detail the scope of permitted Tribal data use with specificity and provide remedies for the Tribal Nation in the event of a breach.

Furthermore, AI companies may consider developing a legal agreement template specifically for Tribal Nations. Such a template may include baseline language with detailed and robust data protection measures that can be adapted to the specific needs of a Tribal Nation. Good faith gestures such as these foster enduring business relationships built on mutual respect.

Conclusion

Tribal Nations are inserting digital sovereignty into AI design, governance, and deployment, transforming past struggles over data ownership into proactive regulation and innovation. The Cherokee, Chickasaw, and Morongo examples and others outlined in this paper demonstrate applied AI governance, blending law, culture, and administrative innovation. These examples can serve as templates for future action, complementing additional safeguards, such as ensuring that legal agreements with third parties include robust data protections grounded in Tribal consent and that Tribal codes prioritize data security policies.

But before Tribal communities focus on governance planning, Tribal Nations will benefit from an upfront investment in AI literacy and capacity building. A greater understanding of the subject matter will support a more targeted and intentional governance strategy, helping Tribal Nations better understand AI’s potential to help community-led systems improve their operations. By viewing AI as one part of a greater digital ecosystem, Tribal Nations can integrate AI into existing policies involving broadband, cybersecurity, and data stewardship, forming one overarching digital sovereignty framework. Governments and the AI industry can position themselves as good partners by respecting Tribal sovereignty and working with Tribal Nations to adopt AI models in good faith. Grounding AI implementation and adoption plans in Tribal sovereignty ensures that technological innovation advances in harmony with, rather than at the expense of, core Tribal community values. In this way, AI can augment the experiences of Tribal citizens rather than perpetuate historical cultural exploitation.

Acknowledgements and disclosures

Amazon and Microsoft are general, unrestricted donors to the Brookings Institution. The findings, interpretations, and conclusions posted in this piece are solely those of the authors and are not influenced by any donation.

The authors would like to thank Josie Stewart for her assistance with this report.
